Having to go through a massive log file in order to spot problems is every developer's greatest nightmare. There's a good chance the troubleshooting won't end there. They'll either have to follow the trail to several other log files, maybe on different servers, or they'll have to start from scratch. The log files could be in a variety of formats. This might continue indefinitely until the person loses all sense of self. To break this apparently never-ending cycle, you'll require log aggregation.

We will go over the following:

- What is Log Aggregation?

- Why Should You Aggregate Your Logs?

- Log Aggregation Methods

- What Kind of Data does Log Aggregation Collect?

- Why Log Aggregation is Important?

- What Should You Look for in a Log Aggregation Tool?

- Tools for Log Aggregation

What is Log Aggregation?

The initial step in log management is log aggregation. It can assist companies in gaining insight into their operations and application performance across a variety of infrastructures.

The process of gathering and centralizing diverse event log files from a variety of applications, services, and other sources is known as log aggregation. Applications generate large volumes of log files at a near-constant rate; log aggregation aims to organize and search this data in a centralized location. When it comes to optimizing their applications, tech experts without log aggregation have limited knowledge of log performance.

As a result, log aggregation is a step in the overall management process in which you combine several log formats from many sources into a single location to make it easier to analyse, search, and report on your data.

Why Should You Aggregate Your Logs?

Logs are critical to any system since they provide insight into what is going on and what your system is doing. The majority of your system's processes generate logs. The issue is that these files are frequently encountered on various platforms and in various formats. You can wind up with distinct logs hosted on different hosts if you have a range of hosts.

If you have an error and need to go through your logs to figure out what went wrong, you'll have to search through dozens, if not hundreds, of files. Even with good tools, this will take a long time and can easily frustrate even the most experienced system administrators. Log aggregation is a fantastic approach to collect all of these logs in one place.

The organization was able to easily and quickly troubleshoot problems by aggregating logs in a centralized location with near real-time access, as well as do trend analysis and spot abnormalities.

Log Aggregation Methods

You may aggregate logs using a few different methods:

- File Replication

You may simply copy your files to a central location using rsync and cron. This is a simple method that can help you gather all of your data in one place, but it isn't genuine aggregation. Furthermore, since you must adhere to a cron schedule, you will not be able to view your files in real-time. - Syslog, rsyslog, or syslog-ng

These instruct processes to transmit log entries to them, with the configuration directing these messages to a central place. You'll need to set up a central Syslog daemon somewhere on your network, as well as on the individual clients, to allow these clients to forward messages to the daemons. It's likely that Syslog already exists on your system, so all you have to do now is configure it properly and ensure that your central Syslog server is always available.

What Kind of Data does Log Aggregation Collect?

Event log files from applications and other sources inside the IT infrastructure are captured by log aggregation software solutions. When certain sorts of events occur within the application, event logs are automatically generated by the computer. Event logs can also be categorized based on the severity of the incident and the required reaction time. The following categories should be used to categorize event logs:

- Information

Changes in the state of the application or changes in the entities inside the application are documented in the informational log. Information logs are useful for determining what happened in the application over a given period of time. - Warnings/Application Malfunctions

If an application tries to perform an operation and fails, but still has the possibility to recover and deliver the service, a warning log may be generated. Although warning logs are not as critical as errors, they should be addressed as soon as possible to avoid a negative impact on user experience. - Errors/Application Failures

When the application encounters an error, it should generate a log containing the error classification automatically. An error indicates that the application is not working properly and that there is no way to fix it. Users in the production environment may be affected by the issue, resulting in service outages and a negative customer experience. To minimize the impact of any application problem that affects a crucial service, error logs must be treated immediately. - Positive Security Events

When a security event is completed successfully, most applications will generate a login response. When a user logs on to a computer, logs into a database or an application answers a security question, or completes another kind of authentication such as a time-based one-time password, biometric data, location, out-of-band authentication, and so on, this is what happens. - Negative Security Events

Log aggregation tools maintain track of failed security events in addition to logging success audits. A log will be generated if a user enters an invalid password, answers a security question incorrectly, or otherwise fails to authenticate access to the system.

Why Log Aggregation is Important?

Small businesses may overlook log aggregation, but as the firm grows, scattered logs in multiple locations become difficult to manage and follow. The majority of IT professionals and developers in charge of logging are accustomed to reporting basic environment and application errors to log files, but logs can be used for a lot more. System managers can use logs to identify probable server failures or establish the reasons for network outages if those environments are housed on a self-managed system.

If such environments are managed to host, either on-premise or in the cloud, logs can also assist SREs, operations engineers, or other application owners in identifying possible scalability issues or integration errors.

The ability to centralize logs is critical for efficient analysis, and a log aggregation technique can remove the need for substantial storage and log management across multiple locations. The centralized solution gives an overall overview of applications, infrastructure, users, and network resources using analysis tools.

Some organizations, where compliance is a priority, demand logging. For instance, PCI-DSS compliance requirement 10 stipulates that the company must "trace and monitor all access to network resources and cardholder data." When properly configured, centralized log aggregation provides full audit trails for all system components and applications that handle sensitive customer data. In the event that the company suffers a data breach, aggregated logs can be used to prove compliance and avoid significant fines and penalties.

What Should You Look for in a Log Aggregation Tool?

Consider a solution that is integrated with log management and has the following aspects when picking a log aggregation strategy:

- A Well-rounded Pipeline Library

Some tools include pre-built pipelines for each log source that may be tweaked to meet your organization's specific requirements. - Correlation with Other Telemetry

Some log aggregation tools automatically add metadata to your logs, such as tags or trace IDs, which can help you correlate your logs with data from other parts of your stack. - Control Over Indexing and Storage

Certain platforms allow you to ingest all of your logs while indexing and storing only the ones that are most important. This helps you avoid the cost-versus-visibility decisions that are commonly involved with log aggregation and management. - Live Tailing

Live tailing tools allow you to watch your whole ingested log stream in real-time, allowing you to keep track of important system events like deployments without needing to index everything. - Security Monitoring

Security monitoring tools let you apply threat detection criteria to your whole ingesting log stream, alerting you to potentially dangerous activity wherever in your system.

Tools for Log Aggregation

The following are some of the tools available:

- Atatus - Atatus Log management is delivered as a fully managed cloud service. It requires very little setup, little maintenance, and can be scaled to any size. So you can concentrate on your business rather than data pipelines.

- Apache Flume - This tool is capable of effectively collecting, aggregating, and moving large amounts of data. It can also be used to transport data between your various apps and store it on Hadoop's HDFS.

- Splunk - Splunk has been around for a long time and offers simple log aggregation, as well as a variety of other tools for viewing and analysing logs, as well as creating reports and searches. It's a simple but powerful application that allows you to quickly and simply collect logs across your whole IT network.

- Scribe - Facebook made this C++ tool available on GitHub. Scribe is a stable and scalable server that works with almost any language.

- Logstash - Logstash is a data processing pipeline that runs on the server and can handle data from a variety of sources. The open-source tool allows you to configure inputs, filters, and outputs, as well as access, browse, and search your logs.

- Chukwa - This Hadoop sub-project can collect and store your logs efficiently on HDFS.

- Graylog2 - Graylog2 uses elastic search or MongoDB to store your events. You get a user interface for analysing and searching your logs.

Conclusion

Log management is incomplete without log aggregation. Companies are continually building more complex infrastructures with a diverse set of software and applications, which makes them naturally more vulnerable to errors and faults.

Working with hundreds of log files on hundreds of servers makes detecting and resolving anomalies before they reach the end-user nearly impossible. You save time, money, and obtain deeper insights into your customers' activity by collecting logs.

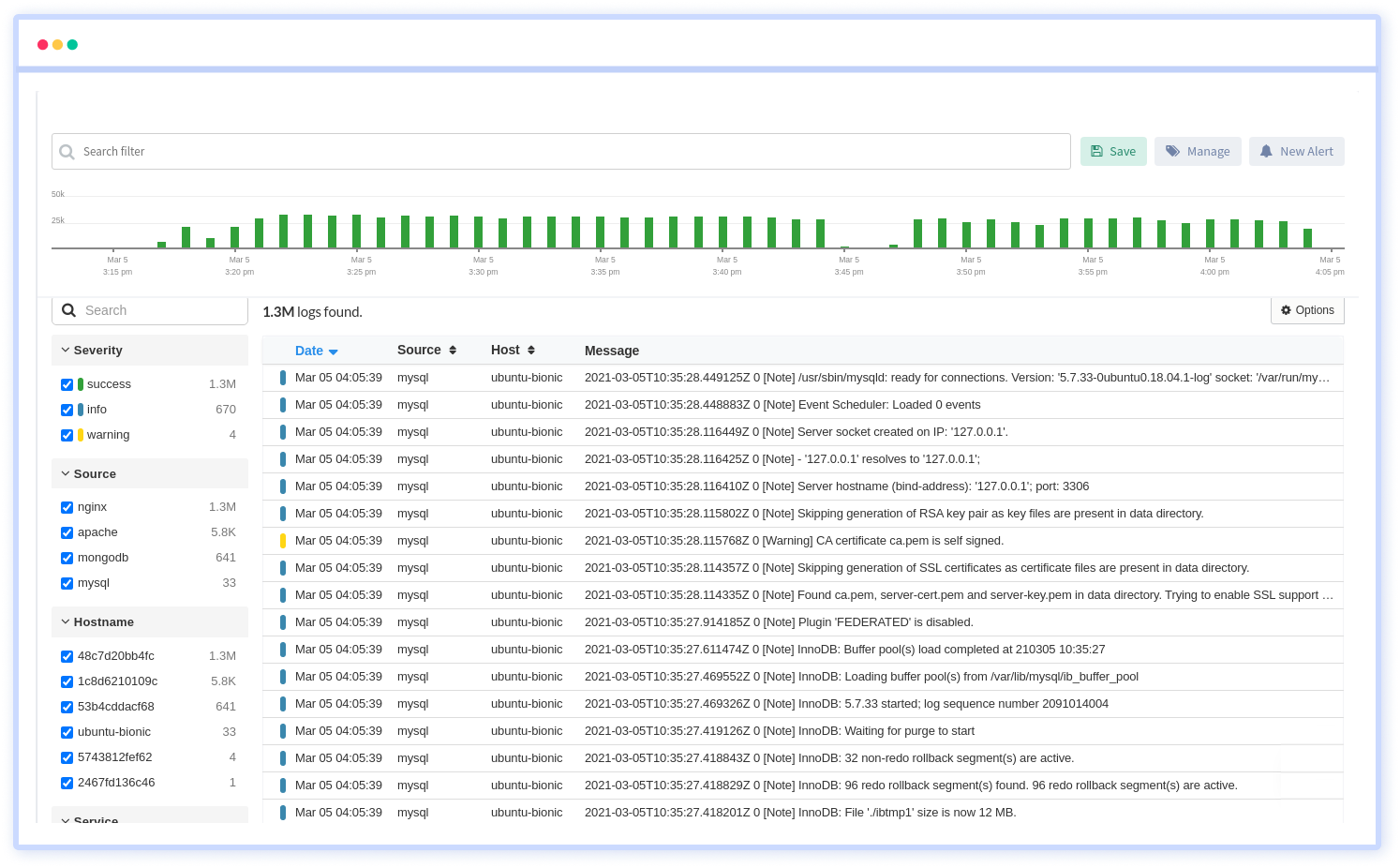

Atatus Log Monitoring and Management

Atatus is delivered as a fully managed cloud service with minimal setup at any scale that requires no maintenance. It monitors logs from all of your systems and applications into a centralized and easy-to-navigate user interface, allowing you to troubleshoot faster.

We give a cost-effective, scalable method to centralized logging, so you can obtain total insight across your complex architecture. To cut through the noise and focus on the key events that matter, you can search the logs by hostname, service, source, messages, and more. When you can correlate log events with APM slow traces and errors, troubleshooting becomes easy.