Log file analysis can help you restrict access to a particular resource. Using the information in log files, you can figure out which systems have access to resources such as printers, and any violations of those limits are recorded in the logs.

Log files can help you determine which security infrastructure best suits your network. Log files that document attempts to penetrate your network's security demonstrate the need for a highly secure infrastructure.

We will cover the following:

- What Is a Log File?

- Types of Log Files

- Who Makes Use of Log Files?

- Why Are Log Files Important?

- Challenges in Log Files

What Is a Log File?

A log file is a file that contains a list of events that a computer has "logged." Log files are records of system-related information, such as internet usage.

The data comprises information about running applications, services, system errors, and kernel messages. Log files are commonly created during software installations and by Web servers, but they can also be used for a variety of other purposes.

An application log can record the actions that occur in your application from the moment you start it to the moment you stop it, depending on how logging is configured. Calls you make to third-party APIs, as well as background scripts, can be recorded here too. This is where you discover what goes on behind the scenes of your application.

Log files are used by web servers to store information about website visits. The IP address of each visitor, the time of the visit, and the pages accessed are normally included in this data source.
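As an illustration, many web servers can write access logs in the Common Log Format (CLF), where each line holds the client IP address, a timestamp, the request line, the HTTP status code, and the response size. The Python sketch below assumes CLF input and pulls out the visitor's IP address, the time of the visit, and the page requested; the function name `parse_clf_line` is our own. Because `re.match` anchors only at the start of the line, the same pattern also accepts lines in the "combined" format, which appends the referrer and user agent.

```python
import re
from datetime import datetime

# Common Log Format: host ident authuser [date] "request" status bytes
CLF_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) (?P<size>\S+)'
)

def parse_clf_line(line):
    """Return the visitor IP, visit time, page and status, or None if the line doesn't match."""
    match = CLF_PATTERN.match(line)
    if match is None:
        return None
    return {
        "ip": match.group("ip"),
        # Timestamp looks like 10/Oct/2000:13:55:36 -0700 (English month names assumed)
        "time": datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z"),
        "path": match.group("path"),
        "status": int(match.group("status")),
    }

line = '127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] "GET /apache_pb.gif HTTP/1.0" 200 2326'
entry = parse_clf_line(line)
print(entry["ip"], entry["time"], entry["path"], entry["status"])
```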

The access log may also record which resources, such as images, JavaScript, or CSS files, were loaded during each visit. Website statistics software can process this information and present it in a user-friendly format.

For example, a user would be able to see a graph of daily visitors over a month and click on each day for further information.
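A minimal sketch of the aggregation behind such a graph, assuming the log entries have already been parsed into (IP, timestamp, path) tuples: it counts each distinct IP address per day as one visitor and skips requests for images, JavaScript, and CSS. The sample data and the extension list are made up for illustration, and counting IPs is only an approximation, since several users can share one address behind a proxy or NAT.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical sample data: (visitor IP, request time, requested path)
REQUESTS = [
    ("203.0.113.5", "2024-03-01 09:15:00", "/index.html"),
    ("203.0.113.5", "2024-03-01 09:15:01", "/static/app.js"),
    ("198.51.100.7", "2024-03-01 17:40:12", "/pricing"),
    ("203.0.113.5", "2024-03-02 08:02:44", "/index.html"),
]

# Requests for these file types are resource loads, not page views.
ASSET_EXTENSIONS = (".js", ".css", ".png", ".jpg", ".gif", ".svg")

def daily_unique_visitors(requests):
    """Map each date to the number of distinct IP addresses that requested a page that day."""
    visitors = defaultdict(set)
    for ip, timestamp, path in requests:
        if path.endswith(ASSET_EXTENSIONS):
            continue  # skip images, JavaScript and CSS
        day = datetime.strptime(timestamp, "%Y-%m-%d %H:%M:%S").date()
        visitors[day].add(ip)
    return {day: len(ips) for day, ips in sorted(visitors.items())}

for day, count in daily_unique_visitors(REQUESTS).items():
    print(day, count)
```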

Types of Log Files

Almost every component in a network generates its own kind of log, and each component records its logs separately. As a result, there are many different types of logs, including:

- Event Logs

An event log is a high-level log that records network traffic and usage data, such as incorrect password attempts, login attempts, and application events.

- Server Logs

A server log is a text document that keeps track of the actions performed on a particular server over a period of time.

- System Logs

A system log, often called a syslog, records events that occur in the operating system. It includes startup notifications, system changes, unexpected shutdowns, failures and warnings, and other critical processes. Linux and macOS generate system logs (on Linux, usually under the /var/log directory), and Windows records comparable events in its Event Log.

- Authorization and Access Logs

Authorization and access logs list the people or bots that have accessed specific programs or files.

- Change Logs

A change log lists all the modifications made to an application or file over time.

- Availability Logs

Availability logs track system performance, uptime, and availability.

- Resource Logs

Resource logs record connectivity problems and capacity limits.

- Threat Logs

Threat logs contain information about system, file, or application traffic that matches a security profile defined on a firewall.
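To make the system log entry concrete: many Linux syslog daemons write lines in a traditional BSD-style layout of timestamp, hostname, program name, optional process ID, and message. The sketch below assumes that layout; newer RFC 5424 messages and the systemd journal use different formats and would need a different parser. The sample lines and the name `parse_syslog_line` are hypothetical.

```python
import re

# Traditional BSD-style syslog line, as commonly written under /var/log:
# "Mon DD HH:MM:SS host program[pid]: message"  (the [pid] part is optional)
SYSLOG_PATTERN = re.compile(
    r"^(?P<timestamp>\w{3} [ \d]\d \d{2}:\d{2}:\d{2}) "
    r"(?P<host>\S+) "
    r"(?P<program>[^:\[\s]+)(?:\[(?P<pid>\d+)\])?: "
    r"(?P<message>.*)$"
)

def parse_syslog_line(line):
    """Split one syslog line into named fields; return None if it doesn't match the layout."""
    match = SYSLOG_PATTERN.match(line)
    return match.groupdict() if match else None

for line in [
    "Mar  1 09:15:00 web01 sshd[1234]: Failed password for invalid user admin from 203.0.113.9 port 52144 ssh2",
    "Mar 12 10:00:01 web01 kernel: Out of memory: Killed process 4321",
]:
    fields = parse_syslog_line(line)
    print(fields["host"], fields["program"], fields["pid"], "->", fields["message"])
```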

Who Makes Use of Log Files?

Log files can provide useful information to practically every role in an organization. The following are some of the most common use cases by role:

#1 ITOps

- Determine the right infrastructure balance

- Manage workloads

- Maintain uptime and avoid outages

- Ensure business continuity

- Reduce cost and risk

#2 DevOps

- Manage CI/CD pipelines

- Keep the application up and running

- Find critical application issues

- Identify areas where application performance can be improved

#3 DevSecOps

- Encourage shared responsibility for application development and security

- Identify potential problems before deployment to save time and money and reduce the risk of rework

#4 SecOps/Security

- Discover information on an attack's 'who, when, and where'

- Recognize suspicious activity

- Check for spikes in blocked or allowed traffic

- Implement methodologies such as the OODA loop

#5 IT Analysts

- Manage compliance and reporting

- Plan and track operating and capital expenditures (OpEx and CapEx)

Why Are Log Files Important?

We need logs because they contain information that cannot be found anywhere else. Suppose you deploy a code change that depends on a database migration but forget to run the migration. This is likely to cause errors, and for a variety of reasons you don't want to tell customers that a particular column is missing from your table. The details of the failure end up in your logs instead, where you can find them.

To power essential business services, large IT organizations rely on a vast network of IT infrastructure and applications. Monitoring and analyzing application log files improves the network's observability, providing transparency and visibility into the cloud computing environment.

Observability, on the other hand, should never be viewed as a goal in and of itself. It should always be viewed as a means of achieving real-world business goals like enhancing system stability, meeting security and compliance goals, and driving revenue growth.

IT organizations can use log monitoring to keep cloud computing systems secure and avoid data breaches. Unsuccessful login attempts, failed user authentication, and unexpected server overloads can all alert a security analyst that a cyber attack is underway. When these activities are recognized on the network, the best security monitoring solutions can generate alerts and automate responses.
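A minimal sketch of one such alert rule: flag any IP address with several failed logins inside a short sliding window. The event tuple shape `(timestamp, ip, succeeded)`, the threshold of 5 failures, and the 1-minute window are all hypothetical; real security monitoring tools combine many more signals than this.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical thresholds: tune them for your own environment.
FAILURE_THRESHOLD = 5
WINDOW = timedelta(minutes=1)

def detect_brute_force(events, threshold=FAILURE_THRESHOLD, window=WINDOW):
    """Return the IPs with at least `threshold` failed logins within any `window`.

    events: iterable of (timestamp, ip, succeeded) tuples, sorted by timestamp.
    """
    recent = defaultdict(deque)  # ip -> timestamps of its failures inside the window
    flagged = set()
    for timestamp, ip, succeeded in events:
        if succeeded:
            continue
        failures = recent[ip]
        failures.append(timestamp)
        # Drop failures that have slid out of the window.
        while timestamp - failures[0] > window:
            failures.popleft()
        if len(failures) >= threshold:
            flagged.add(ip)
    return flagged

start = datetime(2024, 3, 1, 12, 0, 0)
events = [(start + timedelta(seconds=5 * i), "203.0.113.9", False) for i in range(6)]
events += [(start + timedelta(seconds=10), "198.51.100.7", False),
           (start + timedelta(seconds=12), "198.51.100.7", True)]
events.sort()
print(detect_brute_force(events))  # {'203.0.113.9'}
```

A deque per IP keeps the check cheap: each failure is appended once and removed at most once as the window moves forward.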

Log file monitoring can also help IT organizations make better business decisions. System log files capture how users behave within an application, which has given rise to the field of user behavior analytics. By monitoring user behavior, developers can optimize an application to help users reach their goals faster, improving customer satisfaction and driving revenue in the process.

Challenges in Log Files

While log files appear to offer a limitless number of insights, there are a few key challenges that prevent organizations from realizing the full potential of log data.

- Volume

The volume of data gathered by logs has grown by orders of magnitude as a result of the cloud, hybrid networks, and digital transformation. If practically everything produces a log, how can an organization handle that volume of data quickly enough to get the full benefit of its log files?

- Standardization

Unfortunately, not all log files share the same format. Depending on the type of log, the data may be structured, semi-structured, or unstructured. To ingest every log file and gain meaningful insights in real time, the data must first be normalized so that it is easy to parse.

- Digital Transformation

Many organizations, particularly smaller businesses and those with less mature security operations, lack strong monitoring and investigation skills. A fragmented approach to log management across their IT environments makes detecting and responding to threats practically impossible.
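The volume and standardization challenges can be illustrated together in a small Python sketch: a generator reads a log one line at a time instead of loading the whole file into memory, and each line is normalized into one common schema whether it is JSON, plain text, or unstructured. The schema (`time`, `level`, `message`), the JSON field names, and the plain-text layout are assumptions for illustration, not a standard.

```python
import io
import json
import re

# Hypothetical common schema: every record becomes {"time", "level", "message"}.
# Assumed plain-text layout: "<timestamp> <LEVEL> <message>"
PLAIN_TEXT = re.compile(r"^(?P<time>\S+) (?P<level>[A-Z]+) (?P<message>.*)$")

def normalize(line):
    """Convert one JSON, plain-text, or unstructured log line into the common schema."""
    line = line.strip()
    if line.startswith("{"):
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            record = None  # malformed JSON: fall through to the text handlers
        if isinstance(record, dict):
            # Assumed JSON field names: "timestamp", "level", "msg"
            return {
                "time": record.get("timestamp"),
                "level": str(record.get("level", "INFO")).upper(),
                "message": record.get("msg", ""),
            }
    match = PLAIN_TEXT.match(line)
    if match:
        return match.groupdict()
    # Unstructured fallback: keep the raw text so nothing is lost.
    return {"time": None, "level": "UNKNOWN", "message": line}

def stream_records(file_obj):
    """Yield normalized records lazily, one line at a time, skipping blank lines."""
    for line in file_obj:
        if line.strip():
            yield normalize(line)

sample = io.StringIO(
    '{"timestamp": "2024-03-01T12:00:00Z", "level": "error", "msg": "db timeout"}\n'
    "2024-03-01T12:00:05Z WARN disk 91% full\n"
    "segfault in worker 3\n"
)
for record in stream_records(sample):
    print(record)
```

With a real file, `stream_records(open(path))` behaves the same way, because iterating over a file object reads it line by line.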

Conclusion

Log files are useful in post-error investigations. You can use them to figure out what caused an error or a security breach, because log files record data as the activity happens in the information system.

When you access your logs, you should usually search for the word "error." As you do so, pay attention to the timestamp next to each error, and make sure you're looking at the most recent one that has been recorded.
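That search can be scripted. The sketch below assumes each log line begins with an ISO 8601 timestamp (without a trailing "Z") followed by a space; the function name and the sample lines are hypothetical. It compares timestamps instead of simply taking the last matching line, because logs collected from several sources are not always written in chronological order.

```python
from datetime import datetime

def latest_error(lines, keyword="error"):
    """Return (timestamp, line) for the most recent line containing keyword, or None.

    Assumes every line starts with an ISO 8601 timestamp followed by a space.
    """
    latest = None
    for line in lines:
        if keyword.lower() not in line.lower():
            continue  # case-insensitive match, so "ERROR" and "error" both count
        stamp, _, _ = line.partition(" ")
        try:
            when = datetime.fromisoformat(stamp)
        except ValueError:
            continue  # skip lines without a parseable timestamp
        if latest is None or when > latest[0]:
            latest = (when, line.rstrip("\n"))
    return latest

log_lines = [
    "2024-03-01T09:00:00 INFO service started",
    "2024-03-01T09:05:12 ERROR payment API returned 502",
    "2024-03-01T09:07:45 ERROR database connection refused",
    "2024-03-01T09:08:00 INFO retrying",
]
print(latest_error(log_lines)[1])  # 2024-03-01T09:07:45 ERROR database connection refused
```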

Also, make sure your logging is configured at the start of a project. Some information is logged automatically, but if you want to keep an eye on specific issues, you'll need some custom configuration.
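In Python, for example, the standard library's `logging` module can be configured once at startup, with a more verbose level for one area you want to watch closely. The logger names `myapp.payments` and `myapp.web` are hypothetical; substitute the module names from your own project.

```python
import logging
import sys

def configure_logging(level=logging.INFO):
    """Set up application-wide logging once, at startup."""
    logging.basicConfig(
        level=level,
        format="%(asctime)s %(levelname)s %(name)s: %(message)s",
        stream=sys.stdout,
    )
    # Custom configuration (hypothetical logger name): capture DEBUG detail for payments only.
    logging.getLogger("myapp.payments").setLevel(logging.DEBUG)

configure_logging()
logging.getLogger("myapp.payments").debug("calling third-party payment API")  # emitted
logging.getLogger("myapp.web").debug("suppressed: this logger inherits INFO from the root")
```

The DEBUG record from `myapp.payments` still reaches the root handler, because a propagated record is checked against handler levels, not against the parent logger's level.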

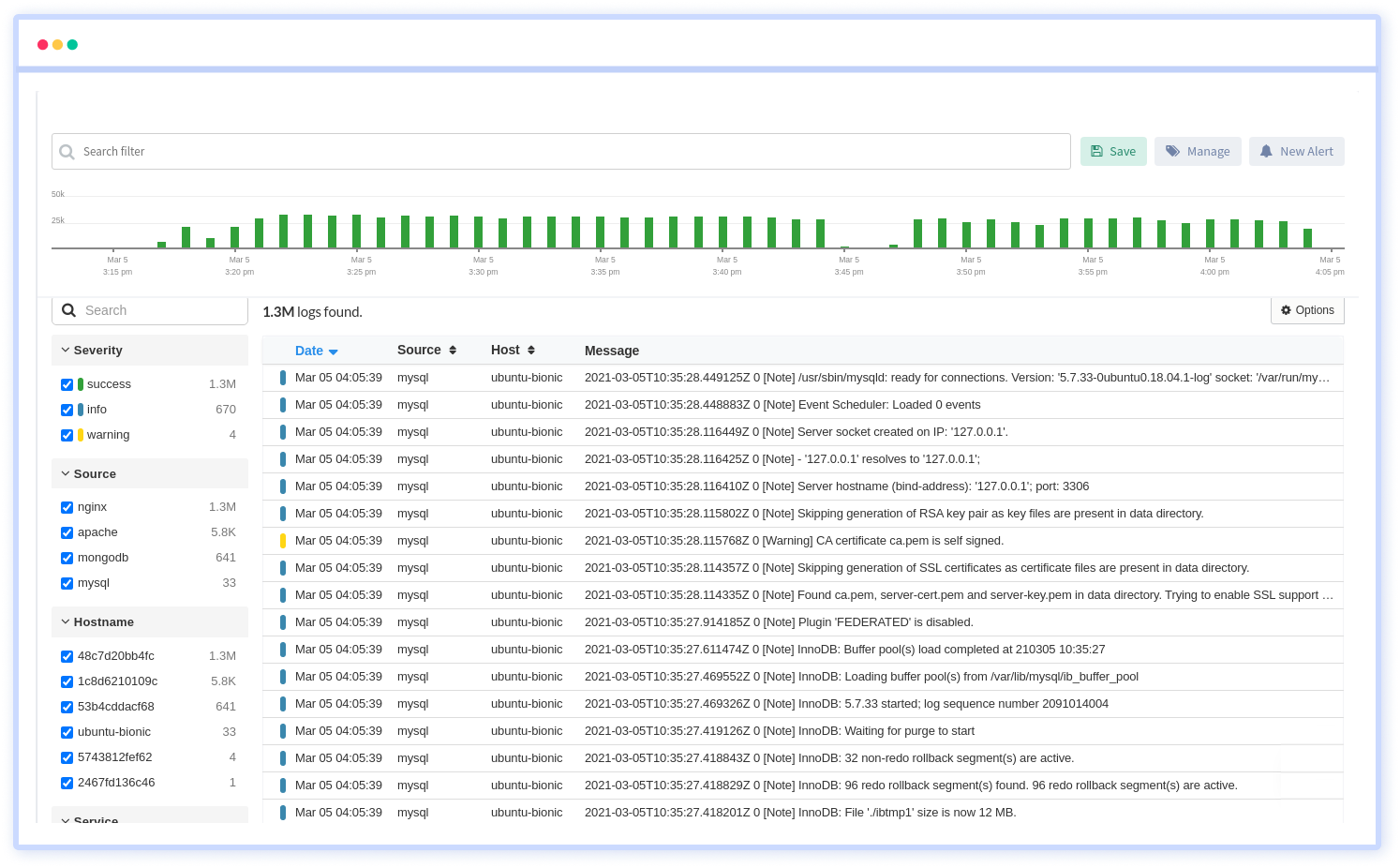

Atatus Log Monitoring and Management

Atatus is delivered as a fully managed cloud service that requires minimal setup at any scale and no maintenance. It collects logs from all of your systems and applications into a centralized, easy-to-navigate user interface, allowing you to troubleshoot faster.

We provide a cost-effective, scalable approach to centralized logging, so you can gain total insight across your complex architecture. To cut through the noise and focus on the events that matter, you can search logs by hostname, service, source, message, and more. When you can correlate log events with APM slow traces and errors, troubleshooting becomes much easier.