Apache vs NGINX: How to Choose The Right One?

Web servers are the backbone of the internet. Without web server software like Apache and NGINX, the internet might not be what it is today. However, the two are not interchangeable.

The Nginx versus Apache debate has been going on for a long time. Both servers are industry giants that together serve more than half of all websites on the internet. Although they fulfil the same basic purpose, delivering web pages, each goes about it in its own way.

We will cover the following:

- What is a Web Server?

- Introduction to Apache

- Introduction to NGINX

- Apache vs NGINX

- Advantages & Disadvantages of Apache and NGINX

- Working with Both Apache and NGINX

- Apache vs NGINX: How to Choose

What is a Web Server?

A web server is a combination of software and hardware that responds to client requests over the World Wide Web (WWW) using HTTP (Hypertext Transfer Protocol) and other protocols. Its primary responsibility is to deliver website content by storing, processing, and distributing web pages to users.

In addition to HTTP, machines that act as web servers often support FTP (File Transfer Protocol) for file transfer and storage, and SMTP (Simple Mail Transfer Protocol) for email.

Web server software manages how users access hosted content by connecting to the internet and allowing data to flow to other connected devices. It is a classic example of the client/server model. All machines that host websites must have web server software installed.

In short, a web server is a machine that runs server software such as Apache or NGINX.

A web server can store website files as well as serve requests for access to them. This last operation, which is crucial, is handled by the web server software. For popular websites, the software may have to handle a large number of requests in a short period of time, so it must be able to cope with that load.
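To make the store-and-serve idea concrete, here is a minimal sketch built on Python's standard library. It is only an illustration of the request/response cycle, not how Apache or NGINX are implemented, and the helper names `start_static_server` and `fetch` are made up for this example.

```python
import threading
import urllib.request
from functools import partial
from http.server import SimpleHTTPRequestHandler, ThreadingHTTPServer


def start_static_server(directory, port=0):
    """Serve the files in `directory` over HTTP on localhost.

    port=0 asks the operating system for any free port. Returns the
    running server; call server.shutdown() to stop it.
    """
    handler = partial(SimpleHTTPRequestHandler, directory=directory)
    server = ThreadingHTTPServer(("127.0.0.1", port), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch(server, path):
    """Play the client: request `path` and return (status, body)."""
    host, port = server.server_address
    with urllib.request.urlopen(f"http://{host}:{port}{path}") as response:
        return response.status, response.read()
```

Calling `start_static_server(".")` and then `fetch(server, "/")` shows both halves of the exchange: the server holds the files, and the client asks for them over HTTP.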

NGINX and Apache both have the ability to scale and handle enormous quantities of requests. On a fundamental level, though, both server alternatives work differently.

Introduction to Apache

The Apache Software Foundation is a non-profit located in the United States that serves a variety of open-source software projects. It was founded on March 25, 1999, by a group of Apache HTTP Server developers.

The Apache Software Foundation (ASF) is a decentralised open-source developer community. The Apache License governs the distribution of the software they create, which is free and open-source (FOSS).

Apache projects use an open, pragmatic software licence and a collaborative, consensus-based development model. Each project is overseen by a self-selected team of technical specialists who are also active participants.

Membership in the ASF is granted only to volunteers who have made significant contributions to Apache projects. The ASF is considered a second-generation open-source organisation, in that commercial support is available without the risk of platform lock-in.

The Apache Software Foundation's goals include giving legal protection to volunteers working on Apache projects and prohibiting other organisations from using the Apache brand name without permission.

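The foundation's best-known project is the Apache HTTP Server, usually just called Apache. When it comes to serving static website content, Apache is generally slower than Nginx, and its process- and thread-based architecture makes it harder to sustain very large numbers of concurrent connections.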

Introduction to NGINX

NGINX is a reverse proxy, load balancer, mail proxy, and HTTP cache all rolled into one web server. It is sometimes known as nginx or NginX. Igor Sysoev built the software, which was publicly released in 2004.

NGINX is a free and open-source web server that is distributed under the conditions of the 2-clause BSD licence. A large number of web servers employ NGINX, which is frequently used as a load balancer.

FastCGI, SCGI script handlers, WSGI application servers, or Phusion Passenger modules can all be used to deliver dynamic HTTP content across the network, and Nginx can also act as a software load balancer.

The software was built specifically to solve the C10K problem. Servers using a thread-based architecture couldn't manage a huge number of concurrent connections (say 10,000) without running out of resources and dropping connections.

Igor Sysoev first released Nginx as open-source software in 2004 to address this issue. Nginx Inc was founded in 2011 to continue the development of open-source software as well as to offer commercial products, after quickly acquiring popularity and a significant client base.

Nginx handles requests using an asynchronous event-driven mechanism rather than threads. The modular event-driven architecture of Nginx can deliver predictable performance even under heavy demand.

Apache vs. NGINX

#1 Architecture

Nginx and Apache each have their own core structures for handling internet traffic and serving web pages.

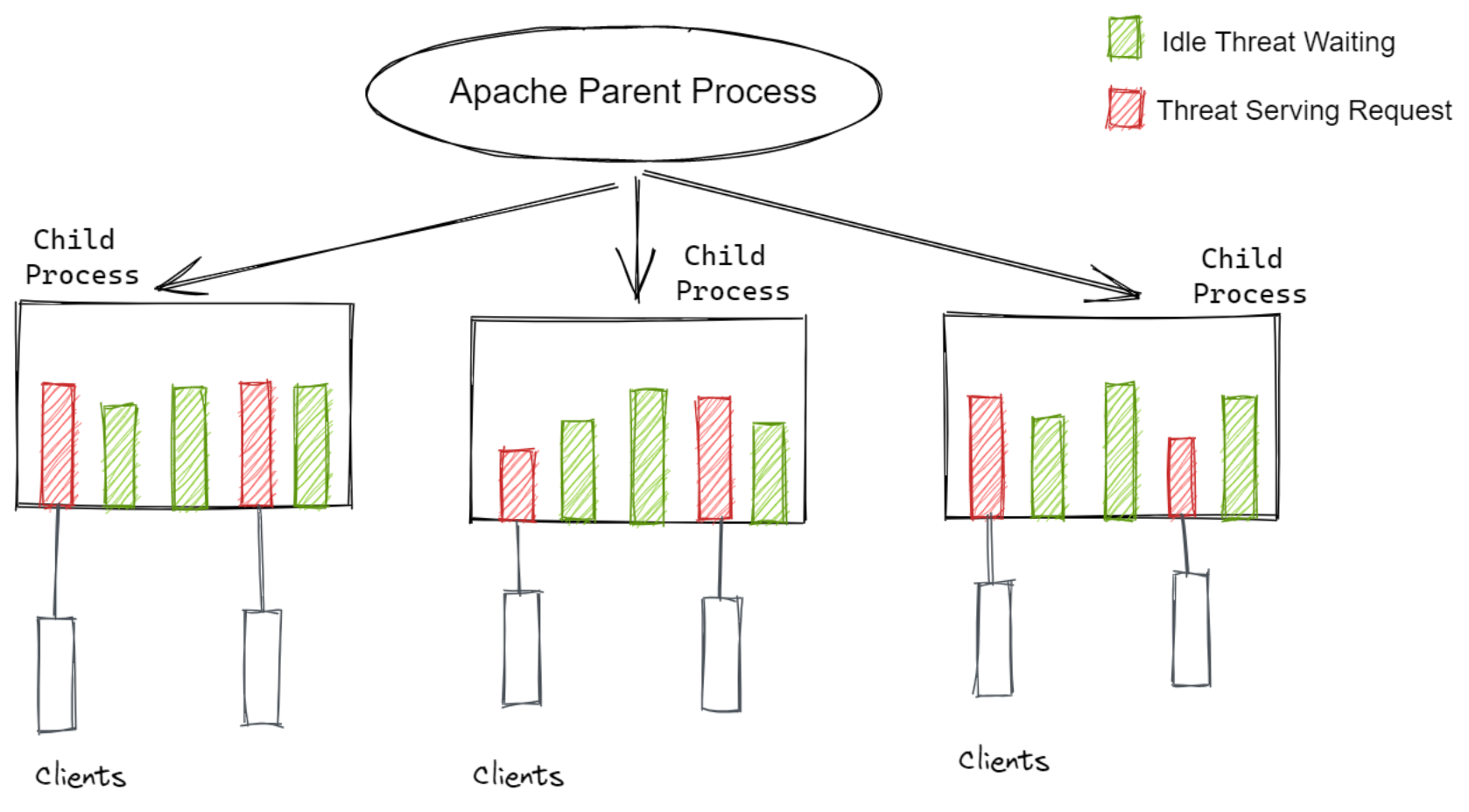

Apache

Apache was originally built around a process-based architecture. The parent process maintains a pool of child processes, and each incoming request is handed to one of them. Each child process has a single thread and can handle only one request at a time.

One significant disadvantage of this design is that each child process consumes resources such as RAM.

Consider a traffic surge. Since there are so many requests, Apache has to create new child processes to handle them. The RAM fills up with all of these new processes, and once a specific limit has been reached there is no more room to handle new requests.

In other words, Apache runs well when the number of requests received is less than the number of processes available. When the number of requests reaches this threshold, Apache's resources are depleted, and it begins to drop connections.
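A quick back-of-the-envelope calculation shows where that threshold comes from. The sketch below is a simplification, and the figures (4 GB of RAM set aside for Apache, 40 MB per child process, 500 simultaneous requests) are illustrative assumptions rather than measurements.

```python
def max_prefork_workers(available_ram_mb, ram_per_process_mb):
    """Upper bound on child processes that fit in memory (one request each)."""
    if ram_per_process_mb <= 0:
        raise ValueError("ram_per_process_mb must be positive")
    return int(available_ram_mb // ram_per_process_mb)


def requests_left_waiting(concurrent_requests, workers):
    """Requests that find no free process and must queue or be refused."""
    return max(0, concurrent_requests - workers)


# Illustrative figures only: 4 GB set aside for Apache, 40 MB per child.
workers = max_prefork_workers(4096, 40)
print(workers)                              # 102
print(requests_left_waiting(500, workers))  # 398
```

With these assumed numbers, roughly a hundred requests can be served at once, and everything beyond that has to wait for a process to become free.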

Perhaps this is why Apache was so popular at a time when there wasn't much internet traffic. To accommodate the ever-increasing load, Apache developers designed Multi-Processing modules (MPMs).

The prefork module, also known as MPM_prefork, is what we covered above. The following are the other two modules:

1. MPM_worker

In this module, the parent process still spawns child processes, but each child process runs multiple threads, and each thread handles one request. In other words, a single process can handle many requests at once. Because threads are more resource-efficient than processes, this module scales better than the prefork module.

New connections can be handled faster because each worker process has several threads. New requests do not need to wait for a whole process to become available; instead, they can be picked up by an idle thread right away.
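The thread-per-request idea can be sketched with a thread pool from Python's standard library. This is an analogy for the worker model, not Apache code; `handle_request` and `serve_with_thread_pool` are hypothetical names, and the short sleep merely stands in for real work.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id):
    """Pretend to do blocking work, then report which thread served it."""
    time.sleep(0.05)  # stands in for disk or network I/O
    return request_id, threading.current_thread().name


def serve_with_thread_pool(request_ids, threads_per_process=4):
    """Serve all requests inside one process using a fixed pool of threads."""
    with ThreadPoolExecutor(max_workers=threads_per_process) as pool:
        return list(pool.map(handle_request, request_ids))
```

For example, `serve_with_thread_pool(range(8))` serves eight requests with at most four threads, so each thread ends up handling more than one request without any new process being started.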

2. MPM_event

The key distinction between the Event and Worker modules is how they treat keep-alive connections: the Event module hands idle keep-alive connections to a dedicated listener thread, whereas the Worker module keeps a worker thread tied to each one.

A keep-alive connection is one that remains open after the first request has been processed. This allows several requests to be sent across the same connection without having to close and reopen it repeatedly.

Under the Worker module, a keep-alive connection holds on to a thread even when no request is in progress. The Event module allocates resources more efficiently by parking idle keep-alive connections with the dedicated thread and passing live requests to the worker threads.
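The keep-alive behaviour itself is easy to observe with a small experiment. The sketch below uses Python's built-in HTTP server and client purely as stand-ins; `KeepAliveHandler` and `ports_seen_over_one_connection` are names invented for this example. The server replies with the client's source port, which identifies the underlying TCP connection.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class KeepAliveHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # HTTP/1.1 keeps connections open by default

    def do_GET(self):
        # Reply with the client's source port, which identifies the TCP connection.
        body = str(self.client_address[1]).encode()
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo quiet


def ports_seen_over_one_connection(request_count=3):
    """Send several requests over one connection; return the ports the server saw."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), KeepAliveHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    connection = http.client.HTTPConnection(*server.server_address)
    ports = []
    try:
        for _ in range(request_count):
            connection.request("GET", "/")
            ports.append(connection.getresponse().read())
    finally:
        connection.close()
        server.shutdown()
        server.server_close()
    return ports
```

If keep-alive is working, every entry in the returned list is the same port, because all of the requests travelled over a single connection that stayed open between them.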

The core design of Apache follows a blocking model, which means that incoming requests must wait in a queue until a process or thread becomes available. As a result, it struggles to scale as quickly as today's internet traffic grows.

As a result, if you're looking for a high-performance server, Apache is generally not the ideal option.

Despite the concerns mentioned above, Apache remains popular among developers. Its modular design makes it very adaptable and customisable, and third-party developers can extend its functionality by writing their own modules.

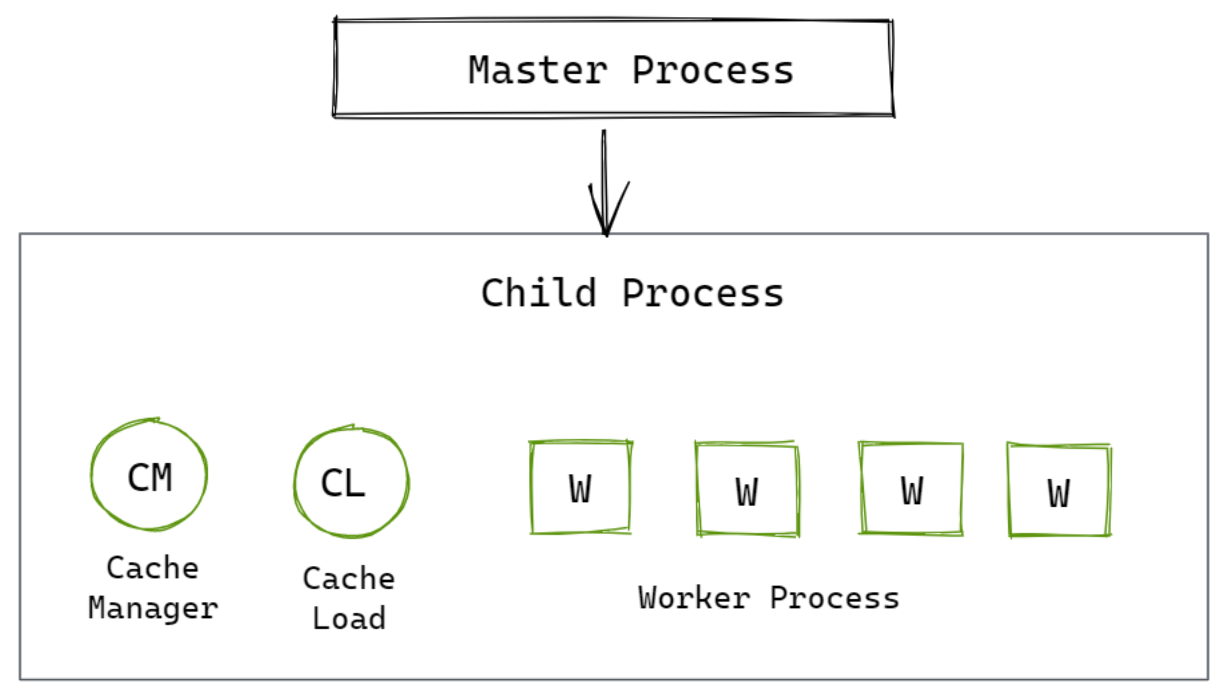

NGINX

Nginx was created particularly to address the resource-intensive and scalability issues that Apache's architecture caused. It has an event-driven, asynchronous, non-blocking architecture.

The following is how it works:

- It has a single master process that reads the configuration and spawns a number of child processes. The master does not serve client requests itself; it supervises the children, which do the actual work.

- The cache loader, cache manager, and worker process are three different sorts of child processes.

The cache loader and cache manager load and manage the cache so that static content can be served more quickly.

All of the heavy lifting is done by the worker processes. This is how a worker operates:

- It works asynchronously, which means it doesn't block while waiting on a client or on I/O for one request. Instead, it moves on to other open requests or waits for new ones to come in.

- It is single-threaded, in the sense that it does not create new processes or threads in response to new requests. A single worker has the capacity to handle thousands of connections at once.

- It is event-based: it reacts to notifications from the operating system (for example, that a socket is ready to be read or written) rather than dedicating a thread to each connection. This frees up resources, which can then be assigned as and when demands come in.

It's clear from the preceding description that Nginx's architecture takes a far more scalable approach. Rather than dedicating resources to particular requests, it keeps its workers free to pick up other requests whenever one of them is waiting.
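The event-driven model described above can be imitated with Python's asyncio, which also runs many tasks on a single thread. This is a rough sketch of the idea, not a model of Nginx internals; the function names are hypothetical, and `asyncio.sleep` stands in for time spent waiting on the network or disk.

```python
import asyncio
import threading


async def handle_request(request_id):
    """Simulate a request that spends its time waiting on I/O."""
    await asyncio.sleep(0.1)  # the worker is free to serve others meanwhile
    return request_id, threading.get_ident()


async def serve_all(request_count):
    """Run every request concurrently on a single event loop."""
    return await asyncio.gather(*(handle_request(i) for i in range(request_count)))


def run_worker(request_count=1000):
    """Return (requests handled, number of distinct threads used)."""
    results = asyncio.run(serve_all(request_count))
    thread_ids = {thread_id for _, thread_id in results}
    return len(results), len(thread_ids)
```

Running `run_worker(1000)` handles a thousand simulated requests on one thread, and it takes roughly as long as a single request because the waiting overlaps.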

#2 Dynamic/Static Content

Apache

Static content is served by Apache using the standard file-based mechanism. However, due to everything we've talked about thus far, I wouldn't exactly call it "top of the line" when it comes to serving static content.

Its core architecture makes it difficult for it to provide good performance during a traffic surge.

When it comes to dynamic content, Apache can handle it entirely within the server. It can embed language interpreters such as PHP directly in its worker processes through modules, removing the need for any external software or components to interpret and execute dynamic content requests.

NGINX

When it comes to serving static content, Nginx has the edge. Its event-based architecture uses resources efficiently to serve both static and cached content. In some benchmarks, Nginx has served around 2.1 times more requests per second than Apache, although results depend heavily on configuration and workload.

When it comes to dynamic content, Nginx does not process it itself. It can't interpret server-side languages like PHP on its own, so it hands the request to an external process (for example, over FastCGI) and waits for the result. Once it receives the rendered output, it sends it on to the client.

This does not imply, however, that Nginx is slower than Apache at serving dynamic content. In fact, it matches Apache's performance in this area. The only minor disadvantage is that the setup Nginx requires may be a little more complex from the administrator's perspective.
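To give a feel for the kind of external process Nginx hands dynamic requests to, here is a minimal WSGI application of the sort a Python application server would run behind it. It is a sketch only: the greeting logic and the `call_app` helper are invented for illustration, and a real deployment would run the app under a proper application server.

```python
from wsgiref.util import setup_testing_defaults


def application(environ, start_response):
    """A tiny WSGI app: builds the response body at request time."""
    name = environ.get("QUERY_STRING", "") or "world"
    body = f"Hello, {name}!".encode("utf-8")
    start_response("200 OK", [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ])
    return [body]


def call_app(query_string=""):
    """Invoke the app the way an application server would, without a network."""
    environ = {"QUERY_STRING": query_string}
    setup_testing_defaults(environ)  # fills in the remaining WSGI keys
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    body = b"".join(application(environ, start_response))
    return captured["status"], body
```

The web server in front never needs to understand Python; it only forwards the request and relays whatever bytes the application returns.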

#3 Configuration

This criterion will help us understand the performance of Nginx vs Apache and how they handle requests.

Apache

Decentralized configuration is possible with Apache.

Inside a document directory, you can place .htaccess files, which are Apache's distributed configuration files. These files contain directives that apply to the current directory and its subdirectories.

Since these files are read or interpreted with each request, any changes made to them are immediately applied without the need to restart the server.

As a result, administrators can provide non-privileged users with some influence over their website's content without giving them full access to the configuration file.

NGINX

The architecture of Nginx does not allow for decentralised configuration. There's no such thing as a .htaccess file. While this limits its versatility, it also allows Nginx to serve requests more quickly.

Each time a request is made, Apache checks for .htaccess files in every directory along the path to the requested file. Nginx, on the other hand, reads its central configuration when it starts or reloads and does not search directories for configuration on each request. This is why, from a performance standpoint, Nginx's configuration architecture is superior.
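The cost of those per-request checks is easier to see when you list them. The function below is a simplified sketch that only considers directories under the document root; the function name and the example paths are assumptions made for illustration.

```python
from pathlib import PurePosixPath


def htaccess_lookups(document_root, request_path):
    """List the .htaccess files checked for one request, from the root down."""
    root = PurePosixPath(document_root)
    # Directories between the document root and the requested file.
    parts = PurePosixPath(request_path.lstrip("/")).parent.parts
    lookups = [root / ".htaccess"]
    current = root
    for part in parts:
        current = current / part
        lookups.append(current / ".htaccess")
    return [str(path) for path in lookups]
```

A request for `/blog/2024/post.html` under `/var/www/html` yields three lookups, one per directory level, and the deeper the file sits, the longer the list becomes.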

If flexibility is more important to you, you should use Apache's configuration.

#4 Modules

Modules are add-ons that you can use in combination with your server software to increase its default capabilities.

Apache

Given Apache's age and popularity, it's no surprise that it has the upper hand when it comes to module selection versus NGINX.

Not only does Apache have more modules, but documentation and tutorials on how to use them are also easier to come by. Furthermore, Apache allows you to install, enable, and disable modules at your leisure, giving you a great deal of flexibility.

NGINX

NGINX has traditionally been less flexible here. Historically, modules had to be compiled into the NGINX binary, and a compiled-in module could not be disabled without rebuilding, which made swapping modules considerably more difficult.

It's worth noting that NGINX Plus has a dynamic module capability that lets you enable and disable modules whenever you choose, and the open-source version has supported dynamically loaded modules since early 2016. However, even a dynamic module must be compiled against NGINX before it can be used.

NGINX's documentation and module library are gradually growing, but they still fall short of Apache's in terms of volume. Given how quickly NGINX is gaining market share, this is likely to change in the near future.

#5 Request Interpretation

How each server interprets requests is an intriguing topic in the Apache vs. Nginx debate. The two handle and interpret requests in quite different ways, and these differences distinguish them from one another and make one better suited than the other to certain roles.

Let's have a look at how!

Apache

Apache can interpret a request either as a physical resource on the file system or as a URI location that calls for a more abstract evaluation. In general, it treats requests as file system locations.

URI locations are supported too, but they are usually reserved for more abstract resources. When creating or configuring a virtual host, Apache typically uses directory blocks that refer to paths under the document root.

The usage of .htaccess files to override certain directory configurations reflects this preference for file system locations.

NGINX

Nginx was designed to function as both a web server and a reverse proxy server. Because of the architecture these two roles require, Nginx works primarily with URIs and translates them to the file system only when necessary.

It has no mechanism for attaching configuration to a file system directory. Instead, it parses the URI itself. By handling requests as URIs rather than file system locations, Nginx can work equally well as a web server and as a proxy server. Its configuration simply lays out how to respond to different request patterns.

It does not check the file system until it is ready to serve the request, which explains why it has no equivalent of .htaccess files.

Because Nginx interprets requests as URI locations, it may easily serve as a proxy server, load balancer, and HTTP cache in addition to being a web server.
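A URI-first approach boils down to matching the request path against a table of patterns before touching the disk. The sketch below shows a simplified longest-prefix match; real Nginx location matching also supports exact and regular-expression matches, and the routing table here is entirely hypothetical.

```python
def match_location(uri, locations):
    """Return the action whose prefix is the longest match for `uri`."""
    best_prefix = None
    for prefix in locations:
        if uri.startswith(prefix):
            if best_prefix is None or len(prefix) > len(best_prefix):
                best_prefix = prefix
    return locations[best_prefix] if best_prefix is not None else None


# Hypothetical routing table: URI prefix -> what to do with the request.
LOCATIONS = {
    "/": "serve files from the site root",
    "/static/": "serve files from the static directory",
    "/api/": "proxy to the application backend",
}
```

Here `match_location("/api/users", LOCATIONS)` picks the proxy rule while `/about` falls through to the site root, and in neither case was the file system consulted to make the decision.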

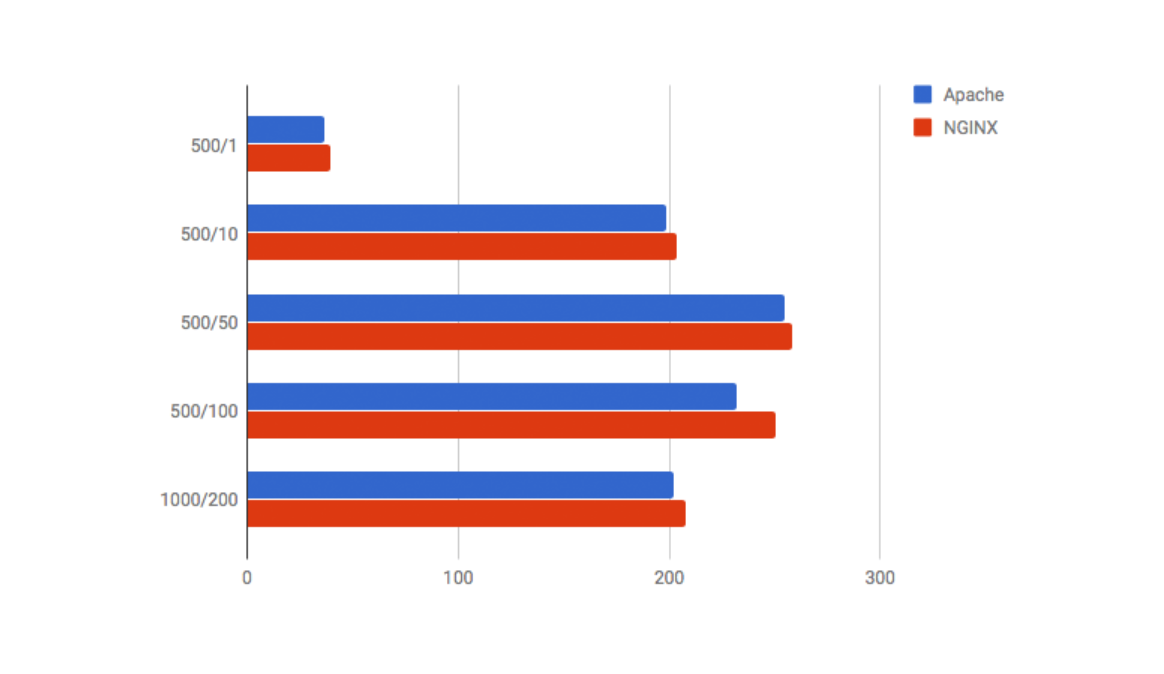

NGINX also comes out ahead of Apache on transfer rate (the speed at which data is sent from the server to the client). In the 500/100 test, Nginx wins by a significant margin in most cases.

#6 Support

Apache

Apache is extremely well documented, and answers to the majority of questions may be found there. Aside from that, it features a 'Users list' or 'Usenet groups' where you may ask industry experts questions and receive responses.

Commercial support for Apache httpd is also available from third parties, even though the Apache Software Foundation does not keep track of it.

NGINX

While locating support documentation for Nginx used to be difficult due to the majority of it being written in Russian, it is now pretty simple.

Nginx's documentation and community help are improving as the company's customer base grows. Stack Overflow and mailing lists provide community support.

Nginx Plus and prebuilt open-source packages also have commercial support.

#7 Security

The security of Apache vs. Nginx is once again a point of contention. Both of these web servers, however, have solid security track records for their C-based code bases.

Apache

Apache cannot by itself guarantee that the websites it hosts are free of malware or safe from attackers, but it provides the configuration options and modules needed to harden a server.

It offers configuration guidelines for dealing with DDoS attacks, and modules such as mod_evasive can be added to respond to HTTP DoS, DDoS, or brute force attacks.

NGINX

The NGINX code base, on the other hand, is considerably smaller, which is a real advantage from a security standpoint because there is less code to audit. NGINX also publishes a list of recent security advisories.

#8 Flexibility

When it comes to a web server, flexibility is one of the most critical considerations. The flexibility of Apache vs. Nginx offers several intriguing distinctions.

Apache

Modules can be written to customise the web server. Because dynamic module loading has been a feature of Apache for a long time, all Apache modules support it.

NGINX

However, this is not the case with NGINX. At the beginning of 2016, NGINX gained capability for dynamic module loading. Previously, the admin had to compile the modules into the NGINX binary.

Most modules do not yet allow dynamic loading, although they will most likely do so in the future.

Advantages & Disadvantages of Apache and NGINX

You should now be aware of the key differences between Apache and NGINX. Let's take a look at the advantages and disadvantages of each software.

Apache

Let's start with the most important advantages of adopting Apache:

- It might be less difficult to set up and configure

- .htaccess files provide you with more granular control over your server's configuration

- The module selection is larger, and you can enable and disable modules at your convenience

- Using several modules, you may choose how to handle requests

The main disadvantage of utilising Apache over NGINX is that NGINX scales better. If your website is still in its early stages of development, Apache should be able to handle the demand.

However, if your website gets extremely popular, you may need to consider changing your server stack at some point. Switching to NGINX or utilising it as a reverse proxy for your Apache web server is one option.

NGINX

When compared to Apache, NGINX has two distinct advantages:

- Performance

- Scalability

To be more explicit, NGINX is superior in the following areas:

- Managing a large number of requests at the same time

- Increasing performance while using fewer hardware resources

- Serving static content more quickly

This is why so many people choose NGINX as a reverse proxy. NGINX, despite all of its performance advantages, is not without problems.

While having a centralised configuration speeds up requests, it limits NGINX's flexibility when compared to Apache. This also applies to modules: modules that are compiled statically into NGINX cannot be turned off without rebuilding it.

In practice, this means that getting NGINX to work the way you want can be a lot more difficult than getting Apache to work.

If performance is your top priority, NGINX is the way to go. Popular websites will eventually need to bring in the big guns to handle tremendous traffic without downtime, slow loading times, and other issues.

NGINX can also be a more cost-effective solution because it allows you to get higher performance with fewer hardware resources.

Working with Both Apache and NGINX

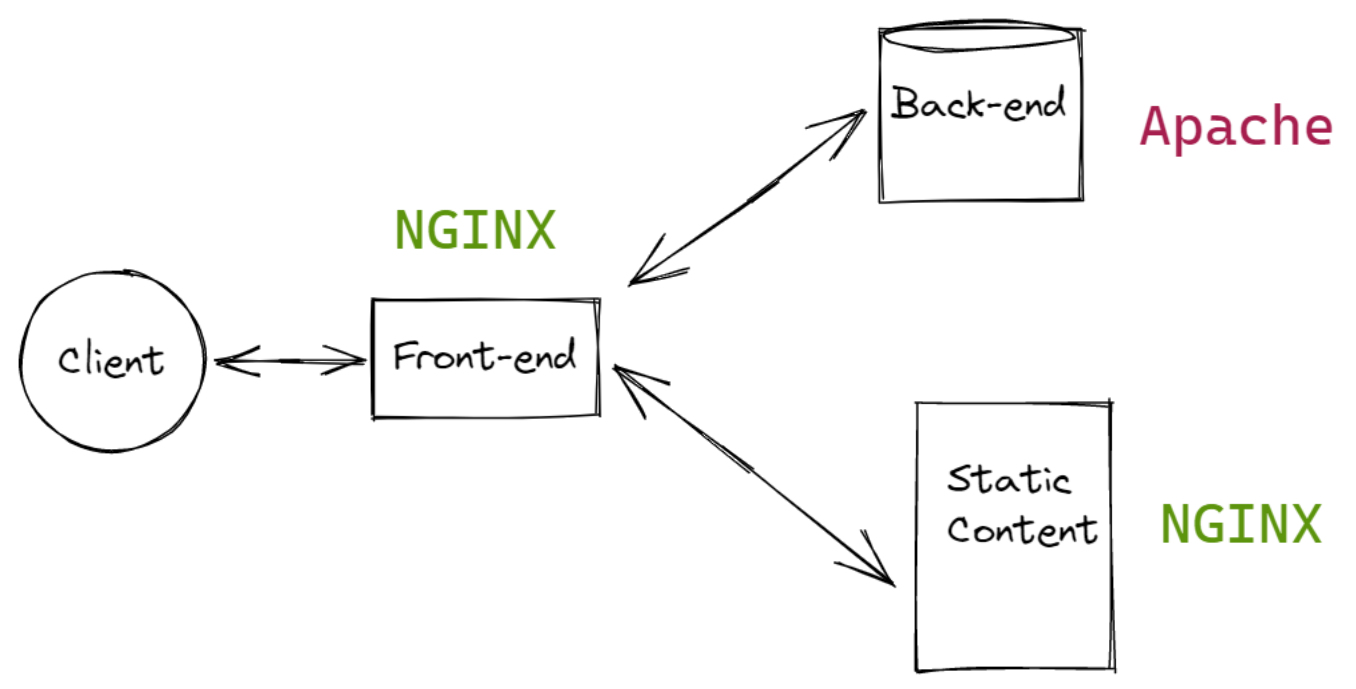

Now that we've looked at the benefits and drawbacks of NGINX and Apache, you should be able to decide whether Apache or NGINX is right for you. However, many users find that by combining the benefits of both servers, they can get the most out of them.

When utilising NGINX and Apache together, the traditional setup is to put NGINX in front of Apache. NGINX then acts as a reverse proxy, so every client request reaches it first.

Why does this matter?

It matters because the setup takes advantage of NGINX's fast processing and its ability to manage a large number of connections at once.

NGINX is a superb server for static content because files are sent directly and rapidly to the client. NGINX proxies requests for dynamic content to Apache for processing. The rendered pages will then be returned by Apache. Following that, NGINX can deliver content back to clients.
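The sorting decision described above can be sketched as a small routing function. This is an illustration of the idea rather than real proxy configuration; the extension list and the labels `nginx-static` and `apache-backend` are assumptions chosen for the example.

```python
import posixpath

# Assumed set of file types the front-end server answers by itself.
STATIC_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".gif", ".svg", ".ico", ".html"}


def choose_handler(path):
    """Decide who answers: the front-end proxy itself or the Apache backend."""
    extension = posixpath.splitext(path)[1].lower()
    return "nginx-static" if extension in STATIC_EXTENSIONS else "apache-backend"
```

A request for `/img/logo.png` would be answered straight away, while `/index.php` or `/cart` would be passed on to the backend for rendering.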

Many people think this is the best design since it allows NGINX to act as a sorting machine, handling all requests and forwarding on those that it can't handle natively. If you minimise Apache's request volume, you can reduce the amount of blocking that occurs while Apache threads or processes are busy.

Users can scale out with this arrangement by adding more backend servers as needed. NGINX can easily be configured to pass requests to a pool of servers, improving the configuration's performance and reducing the risk of failure.
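Spreading requests over several backends is, at its simplest, a matter of taking turns. The class below sketches round-robin selection, one common strategy; the class name and the backend addresses in the usage note are hypothetical.

```python
import itertools


class RoundRobinUpstream:
    """Hand out backend addresses in rotation."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._cycle = itertools.cycle(list(backends))

    def next_backend(self):
        """Return the backend that should receive the next request."""
        return next(self._cycle)
```

With `RoundRobinUpstream(["10.0.0.1:8080", "10.0.0.2:8080"])`, successive calls to `next_backend()` alternate between the two addresses, so adding a third server is just a matter of extending the list.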

Apache vs. NGINX: How to Choose

When Should You Use Apache Instead of NGINX?

- Apache .htaccess

NGINX does not support .htaccess files, whereas Apache does. With them, Apache lets you grant non-privileged users control over key aspects of their own websites.

- In The Event of a Functional Limitation

You might wish to use Apache instead of Nginx if you run into limitations or need extra modules that Nginx does not offer.

When Should You Use NGINX Instead of Apache?

- To Process Static Content Quickly

Nginx can serve static files from a given directory considerably faster than Apache. Because NGINX can handle many static content requests at once, the upstream server processes aren't slowed down by them. The overall performance of the backend servers improves greatly as a result.

- Great for High Traffic Websites

Nginx is extremely light and efficient when it comes to server resources. As a result, many web developers prefer NGINX to Apache. Teams that run high-traffic websites, such as busy Magento stores, often standardise on Nginx for this reason.

And It’s a Wrap!!!

Apache and NGINX are both capable, adaptable, and powerful web servers. Choosing which server is ideal for your needs is primarily determined by analysing your specific requirements and testing the patterns you expect to observe.
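If you want to test those patterns yourself, a small load script is a reasonable starting point. The sketch below is not a substitute for a dedicated benchmarking tool; the function names are invented for this example, and any URL you pass in is your own assumption about where the server under test is listening.

```python
import time
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def fetch_status(url):
    """Return the HTTP status code for one GET request."""
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code


def measure(send_request, total_requests=100, concurrency=10):
    """Call `send_request` repeatedly; return (ok_count, requests_per_second)."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        statuses = list(pool.map(lambda _: send_request(), range(total_requests)))
    elapsed = time.perf_counter() - started
    ok_count = sum(1 for status in statuses if status == 200)
    return ok_count, total_requests / elapsed
```

Something like `measure(lambda: fetch_status("http://localhost:8080/"))`, run once against each server with the same content and settings, gives a rough side-by-side figure for your own workload.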

A number of distinctions between these servers have a real impact on capabilities, performance, and the amount of time it takes to successfully deploy each solution.

After all is said and done, no web server can fulfil everyone's needs 100% of the time, so it's important to use the option that best suits your business goals.

Monitor Your Entire Application with Atatus

Atatus provides a set of performance measurement tools to monitor and improve the performance of your frontend, backends, logs and infrastructure applications in real-time. Our platform can capture millions of performance data points from your applications, allowing you to quickly resolve issues and ensure digital customer experiences.

Atatus can benefit your business by providing a comprehensive view of your application, including how it works, where performance bottlenecks exist, which users are most impacted, and which errors break your code across your frontend, backend, and infrastructure.
