A Guide to Kubernetes Core Components

In the ever-evolving landscape of modern software development and deployment, Kubernetes has emerged as a prominent solution to manage and orchestrate applications. This technology has redefined how applications are deployed and maintained, offering a flexible and efficient framework that abstracts the underlying infrastructure complexities.

In Kubernetes, you define how network traffic should be routed to different services and pods. This is similar to how a traffic management system routes vehicles along specific roads or lanes to reach their destinations.

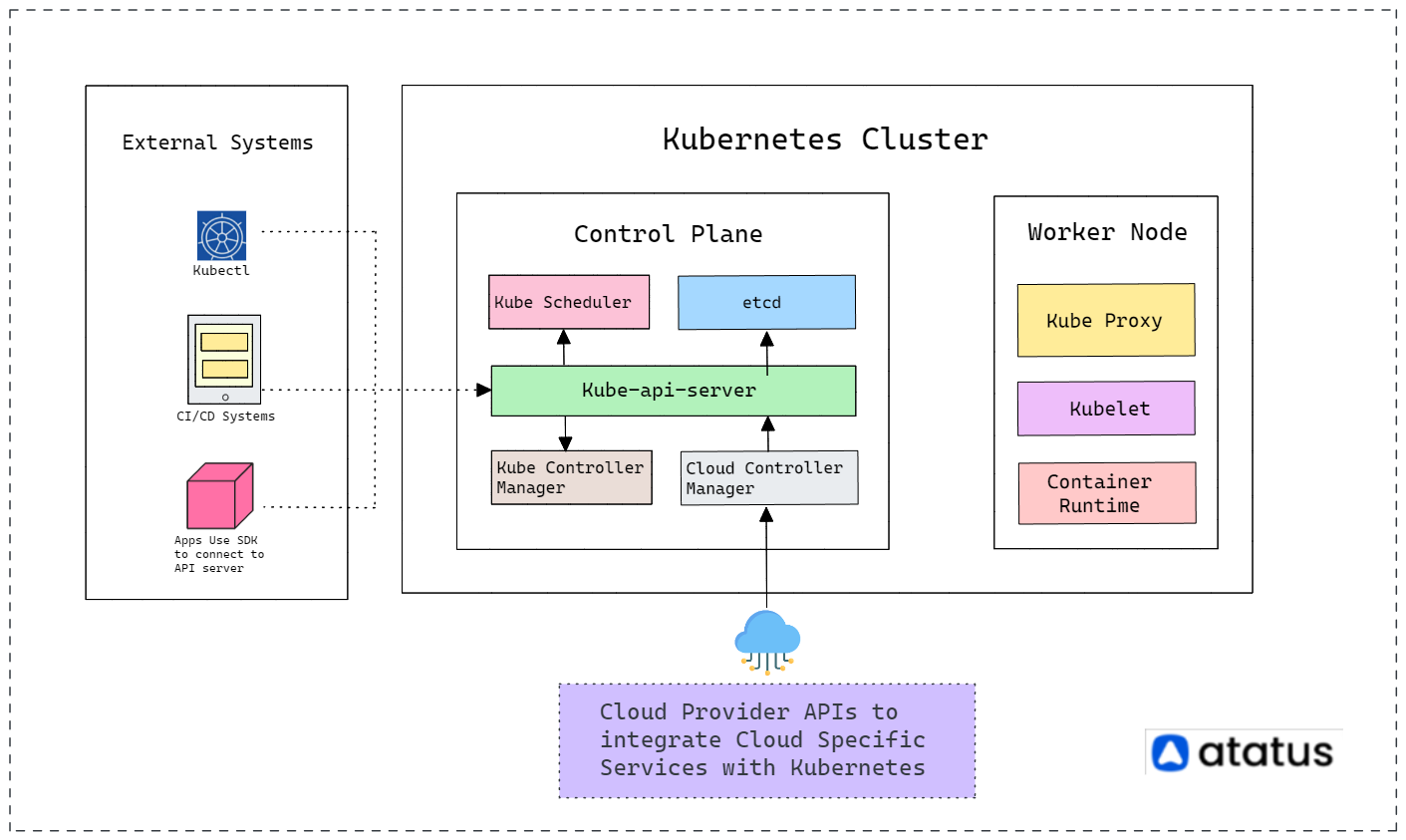

But what exactly are the key players that make Kubernetes work its magic? They are its core components: the control plane (historically called the Master Node), which coordinates and manages the cluster; the worker Nodes, where containers run; and Pods, which house containers.

Services, Networking, Volumes, and ConfigMaps/Secrets then provide communication, storage, and configuration management, working together to orchestrate application deployment and management.

Get ready to explore the inner workings of Kubernetes. We'll break down its key components to show how it manages containerized applications, all explained in a way that's easy to grasp. We'll also guide you through setting up your own Kubernetes environment step by step!

Let's get started!


Kubernetes Components

Kubernetes components are the fundamental building blocks for deploying, managing, and scaling containerized applications in a Kubernetes cluster.

These parts work together to keep both the cluster itself and the applications hosted within it running smoothly. The key components include:

- Control Plane

- Node Components

- Pods

- Service

- ConfigMap

1. Control Plane

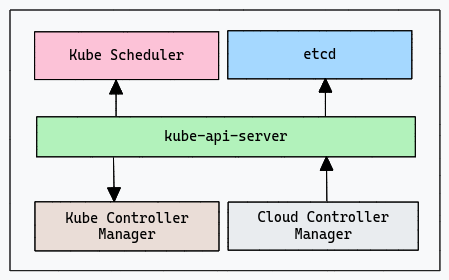

The control plane plays the central role, much like an orchestrator in a distributed system. It serves as the command center for the Kubernetes cluster, making global decisions (such as scheduling) and detecting and responding to cluster events.

It comprises several components that together maintain the system's overall health and enable effective management: the API Server, etcd, the Scheduler, the Controller Manager, and, optionally, the Cloud Controller Manager. Now let's delve into each of these components one by one.

i.) API Server

The API Server functions as a vital communication hub within the Kubernetes ecosystem. It serves as the entry point for a variety of interactions, processing commands issued by users, administrators, and automated procedures. Its significance lies in its role as the gateway to the entire system.

All interactions with the cluster, whether deploying a new application, scaling resources, or updating configurations, are channelled through the API Server. It is also the only component that communicates with etcd directly; all other components read and write cluster state through it. The API Server's robustness and efficiency are therefore fundamental to maintaining the stability and functionality of the entire Kubernetes environment.
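
To make the "single gateway" idea concrete, here is a minimal Python sketch of a hypothetical in-memory `ToyApiServer`. It is not the real kube-apiserver: it skips authentication, authorization, admission control, and etcd, and models only two checks every write goes through in this sketch, basic validation and optimistic concurrency via `metadata.resourceVersion` (the real API answers a stale update with HTTP 409 Conflict).

```python
import copy


class Conflict(Exception):
    """Stale or duplicate write (the real API Server responds with HTTP 409)."""


class ToyApiServer:
    """Hypothetical in-memory model of the API Server's write path."""

    def __init__(self):
        self._objects = {}  # (kind, namespace, name) -> stored object
        self._version = 0   # counter used to stamp metadata.resourceVersion

    @staticmethod
    def _key(obj):
        meta = obj["metadata"]
        return (obj["kind"], meta.get("namespace", "default"), meta["name"])

    @staticmethod
    def _validate(obj):
        # Every object needs these fields before it can be stored.
        for field in ("apiVersion", "kind", "metadata"):
            if field not in obj:
                raise ValueError(f"missing required field: {field}")
        if not obj["metadata"].get("name"):
            raise ValueError("metadata.name is required")

    def create(self, obj):
        self._validate(obj)
        key = self._key(obj)
        if key in self._objects:
            raise Conflict(f"{key} already exists")
        return self._store(key, obj)

    def update(self, obj):
        self._validate(obj)
        key = self._key(obj)
        current = self._objects.get(key)
        if current is None:
            raise KeyError(f"{key} not found")
        # Optimistic concurrency: the client must echo the version it last read.
        sent = obj["metadata"].get("resourceVersion")
        if sent != current["metadata"]["resourceVersion"]:
            raise Conflict("object was modified; re-read it and retry")
        return self._store(key, obj)

    def _store(self, key, obj):
        self._version += 1
        stored = copy.deepcopy(obj)
        stored["metadata"]["resourceVersion"] = str(self._version)
        self._objects[key] = stored
        return copy.deepcopy(stored)  # callers never hold a reference to stored state


api = ToyApiServer()
cm = api.create({"apiVersion": "v1", "kind": "ConfigMap",
                 "metadata": {"name": "app-config"}, "data": {}})
cm["data"]["LOG_LEVEL"] = "info"
api.update(cm)          # accepted: cm carries resourceVersion "1"
try:
    api.update(cm)      # rejected: the stored object is now at resourceVersion "2"
except Conflict as err:
    print("conflict:", err)
```

The version check is what lets many clients (users, controllers, the Scheduler) write through one gateway without silently overwriting each other's changes.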

ii.) etcd

etcd is a distributed key-value store that acts as a highly reliable and consistent database for storing configuration data and other crucial information in a Kubernetes cluster. It's used to maintain the state of the cluster and provide a source of truth for the control plane components.

Whenever something changes in the cluster, like when a new app is added or an existing one is scaled up, these changes are noted down in etcd. Just like you would write down important notes to remember, etcd remembers all the changes happening in the cluster.

etcd achieves high availability by replicating data across multiple members and using the Raft consensus algorithm to keep those copies consistent, so the cluster state survives the failure of a minority of members. Like an organized notebook of critical changes, it keeps every Kubernetes component working from the same, consistent view of the cluster.
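
etcd's data model is simple enough to sketch. The hypothetical `ToyKV` class below is a single-process Python model, not an etcd client, of two etcd concepts that Kubernetes relies on: a revision number that increases with every write, and watches on a key prefix that notify subscribers of changes. It deliberately leaves out replication and Raft. The `/registry/...` keys only roughly imitate the default prefix the API Server uses when storing objects in etcd.

```python
class ToyKV:
    """Hypothetical single-node model of etcd's revisioned key-value store."""

    def __init__(self):
        self.revision = 0     # store-wide revision, incremented on every change
        self._data = {}       # key -> (value, revision of last modification)
        self._watchers = []   # (key prefix, callback) pairs

    def put(self, key, value):
        self.revision += 1
        self._data[key] = (value, self.revision)
        self._notify("PUT", key, value)
        return self.revision

    def delete(self, key):
        if key in self._data:
            self.revision += 1
            del self._data[key]
            self._notify("DELETE", key, None)
        return self.revision

    def get(self, key):
        entry = self._data.get(key)
        return None if entry is None else entry[0]

    def get_prefix(self, prefix):
        return {k: v for k, (v, _) in sorted(self._data.items()) if k.startswith(prefix)}

    def watch(self, prefix, callback):
        # Components react to pushed change events instead of polling.
        self._watchers.append((prefix, callback))

    def _notify(self, event, key, value):
        for prefix, callback in self._watchers:
            if key.startswith(prefix):
                callback(event, key, value, self.revision)


kv = ToyKV()
events = []
kv.watch("/registry/pods/", lambda ev, key, value, rev: events.append((ev, key, rev)))
kv.put("/registry/pods/default/web-1", '{"phase": "Pending"}')
kv.put("/registry/configmaps/default/app-config", '{"LOG_LEVEL": "info"}')  # not watched
kv.put("/registry/pods/default/web-1", '{"phase": "Running"}')
print(events)
# [('PUT', '/registry/pods/default/web-1', 1), ('PUT', '/registry/pods/default/web-1', 3)]
```

The watcher sees revisions 1 and 3 but not 2, because the ConfigMap write falls outside its prefix; this is the kind of change feed that control plane components build on.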

iii.) Scheduler

The Scheduler in Kubernetes is an essential component responsible for making intelligent decisions about where to place newly created pods (groups of containers) within the cluster's nodes. It ensures efficient utilization of resources and maintains optimal performance.

Imagine you are managing a big warehouse (your Kubernetes cluster) where workers (pods) perform various tasks. The Scheduler is like a supervisor with a deep understanding of each worker's skills and the warehouse's layout.

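The Scheduler evaluates each node against a Pod's needs: it filters out nodes that cannot run the Pod, based on its resource requests (CPU, memory, storage, specialized hardware) and constraints such as node selectors, affinity rules, and taints, and then scores the remaining nodes to pick the best fit. The Scheduler does not move Pods that are already running: if a node becomes unavailable, its Pods are evicted, the owning controller (for example, a ReplicaSet) creates replacement Pods, and the Scheduler places those new Pods on healthy nodes.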

Kubernetes offers customization options, enabling you to tailor the Scheduler or adopt alternative scheduling methods to match your unique requirements. This ensures effective resource utilization and adaptable scheduling strategies in the cluster.
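
A rough Python sketch of a single scheduling decision follows. It models two phases: filtering out nodes that cannot run the Pod (not ready, missing a required label, or without enough unrequested CPU and memory) and scoring the remaining nodes, here by how much capacity would be left free, which loosely follows the idea behind the default "least allocated" scoring. The `Node` and `PodRequest` types and all numbers are hypothetical; the real scheduler runs many more plugins (affinity, taints and tolerations, topology spread) and breaks ties randomly, whereas this sketch takes the first best node.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_allocatable: int        # millicores
    mem_allocatable: int        # MiB
    cpu_requested: int = 0      # sum of requests of Pods already on the node
    mem_requested: int = 0
    ready: bool = True
    labels: dict = field(default_factory=dict)


@dataclass
class PodRequest:
    name: str
    cpu: int                    # millicores requested
    mem: int                    # MiB requested
    node_selector: dict = field(default_factory=dict)


def feasible(node, pod):
    """Filter phase: drop nodes that cannot run the Pod at all."""
    if not node.ready:
        return False
    if any(node.labels.get(k) != v for k, v in pod.node_selector.items()):
        return False
    return (node.cpu_requested + pod.cpu <= node.cpu_allocatable
            and node.mem_requested + pod.mem <= node.mem_allocatable)


def score(node, pod):
    """Score phase: 0-100, higher when more capacity stays free after placement."""
    cpu_free = (node.cpu_allocatable - node.cpu_requested - pod.cpu) / node.cpu_allocatable
    mem_free = (node.mem_allocatable - node.mem_requested - pod.mem) / node.mem_allocatable
    return round(100 * (cpu_free + mem_free) / 2)


def schedule(pod, nodes):
    candidates = [n for n in nodes if feasible(n, pod)]
    if not candidates:
        return None  # the real scheduler would leave the Pod Pending
    return max(candidates, key=lambda n: score(n, pod)).name


nodes = [
    Node("node-a", cpu_allocatable=4000, mem_allocatable=8192, cpu_requested=3500, mem_requested=2048),
    Node("node-b", cpu_allocatable=4000, mem_allocatable=8192, cpu_requested=1000, mem_requested=4096),
    Node("node-c", cpu_allocatable=8000, mem_allocatable=16384, ready=False),
]
print(schedule(PodRequest("web-1", cpu=500, mem=1024), nodes))  # node-b
```

Here node-c is filtered out because it is not ready, and node-b (score 50) beats node-a (score 31) because node-a's CPU would be fully requested after placement.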

iv.) Controller Manager

The Controller Manager runs the controllers that keep the cluster in its desired state. Each controller is a control loop that watches the cluster through the API Server and makes changes to move the current state toward the desired state, from keeping a set number of Pods running to managing updates and maintaining unique identities.

The ReplicaSet controller (the successor to the older ReplicationController) ensures that a specific number of Pod replicas are always running. The Deployment controller manages rolling updates and rollbacks. The StatefulSet controller gives stateful apps stable identities and persistent storage. The Namespace controller cleans up all resources in a namespace when that namespace is deleted, and the Job controller runs Pods to completion for batch tasks.

Together, these controllers form the backbone of Kubernetes, orchestrating various aspects of application management. Their collective efforts ensure that your applications run smoothly, scale seamlessly, and remain organized within the cluster, contributing to a robust and reliable computing environment.
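
All of these controllers share one pattern, a reconciliation loop: observe the current state, compare it with the desired state, and act on the difference. Below is a minimal Python sketch of one pass of a ReplicaSet-style loop. The function names, the Pod dictionaries, and the name generator are hypothetical; the real controller creates and deletes Pods through the API Server and prefers to delete Pods that are not yet running or ready, rather than simply the last ones by name as this sketch does.

```python
import itertools


def selector_matches(selector, labels):
    # Equality-based label selector: every selector key/value must be present.
    return all(labels.get(key) == value for key, value in selector.items())


def reconcile(desired_replicas, selector, pods, new_names):
    """One pass of a ReplicaSet-style control loop.

    Returns (to_create, to_delete) so that the number of active Pods matching
    `selector` converges on `desired_replicas`.
    """
    active = sorted(p["name"] for p in pods
                    if selector_matches(selector, p["labels"])
                    and p["phase"] in ("Pending", "Running"))
    diff = desired_replicas - len(active)
    if diff > 0:
        return [next(new_names) for _ in range(diff)], []
    if diff < 0:
        return [], active[diff:]  # simplification: remove the surplus by name
    return [], []


new_names = (f"web-{i}" for i in itertools.count(4))
pods = [
    {"name": "web-1", "labels": {"app": "web"}, "phase": "Running"},
    {"name": "web-2", "labels": {"app": "web"}, "phase": "Failed"},   # no longer counts
    {"name": "db-1", "labels": {"app": "db"}, "phase": "Running"},    # not selected
]
print(reconcile(3, {"app": "web"}, pods, new_names))  # (['web-4', 'web-5'], [])
```

Because each pass only looks at the difference between desired and observed state, running it again after the new Pods appear returns no actions, which is what makes control loops safe to repeat.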

v.) Cloud Controller Manager

The Cloud Controller Manager is an optional component of the Kubernetes control plane, designed for clusters running on cloud providers like AWS, Azure, or Google Cloud. It acts as a bridge between the generic Kubernetes control logic and the cloud provider's APIs, for example provisioning cloud load balancers for Services of type LoadBalancer, configuring network routes, and checking with the provider whether a Node's underlying virtual machine still exists.

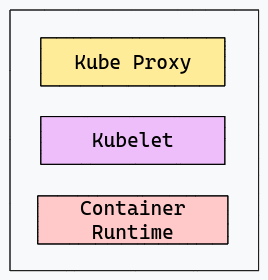

2. Node Components

In Kubernetes, a "Node" is a worker machine, virtual or physical, that runs your applications and containers. Each Node has its own CPU and memory resources. Nodes host and manage applications, ensure efficient resource utilization, and contribute to the overall stability and performance of the Kubernetes ecosystem.

Every Node runs three key components: the "Kubelet," which ensures that the containers described in Pods are running and healthy; "Kube Proxy," which maintains the network rules that route traffic to Services; and a "Container Runtime," which actually runs the containers. Let's explore each of them in detail below.

i.) Kubelet

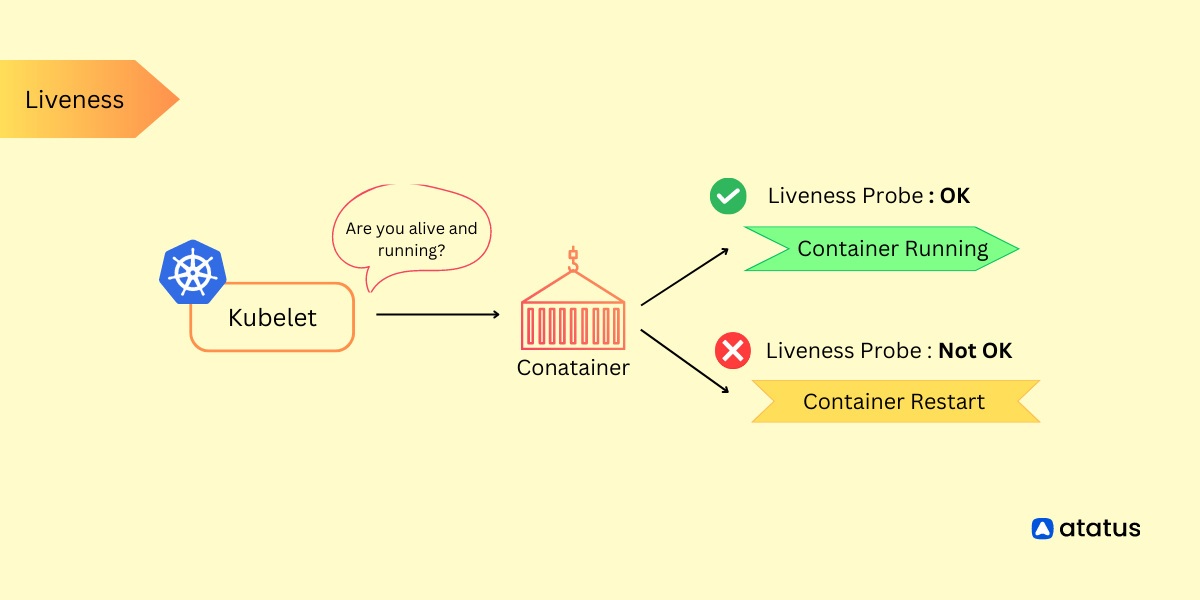

Kubelet is a core component in Kubernetes that operates within each Node of the cluster. Its primary responsibility is to ensure that containers are running and healthy within their designated Pods, which are the smallest deployable units in Kubernetes.

When you create a Pod with specific containers and the Scheduler assigns it to a Node, the Kubelet on that Node takes charge of making sure those containers are up and running. It watches the API Server for Pods scheduled to its Node, instructs the container runtime to start or restart containers until they match the desired state, and reports Node and Pod status back to the API Server.

Kubelet works closely with the Container Runtime (e.g., containerd or CRI-O, through the Container Runtime Interface) to manage the lifecycle of containers. Through these interactions, the Kubelet contributes significantly to the seamless operation of applications in Kubernetes, bridging the gap between the control plane's directives and the practical execution of containerized workloads within the cluster.
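
Part of the Kubelet's job is deciding what to do when a container exits. The Python sketch below models that decision using the Pod's `restartPolicy` (`Always`, `OnFailure`, or `Never`) and the documented default crash back-off, which starts at 10 seconds, doubles after each restart, and is capped at five minutes. `sync_pod` and the shape of `runtime_state` are hypothetical simplifications; the real Kubelet talks to the runtime over CRI, resets the back-off once a container has run without problems for a while, and newer releases may tune these defaults.

```python
def should_restart(restart_policy, exit_code):
    """Decide whether a terminated container is restarted under the Pod's restartPolicy."""
    if restart_policy == "Always":
        return True
    if restart_policy == "OnFailure":
        return exit_code != 0
    if restart_policy == "Never":
        return False
    raise ValueError(f"unknown restartPolicy: {restart_policy}")


def backoff_seconds(restart_count, initial=10, cap=300):
    """Delay before the next restart: 10s, 20s, 40s, ... capped at 300s."""
    return min(initial * 2 ** restart_count, cap)


def sync_pod(pod, runtime_state):
    """Compare a Pod's desired containers with what the runtime reports; return actions."""
    actions = []
    for name in pod["containers"]:
        status = runtime_state.get(name)
        if status is None:
            actions.append(("start", name))
        elif status["state"] == "exited" and should_restart(pod["restartPolicy"], status["exit_code"]):
            actions.append(("restart", name, backoff_seconds(status["restarts"])))
    return actions


pod = {"name": "web-1", "restartPolicy": "OnFailure", "containers": ["app", "sidecar"]}
runtime_state = {"app": {"state": "exited", "exit_code": 1, "restarts": 2}}
print(sync_pod(pod, runtime_state))  # [('restart', 'app', 40), ('start', 'sidecar')]
```

A container that keeps failing therefore waits longer and longer between attempts, which is what `kubectl get pods` reports as `CrashLoopBackOff`.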

ii.) Kube Proxy

Kube Proxy is a fundamental component in Kubernetes that runs on every Node and implements Services. Pods can reach each other directly by Pod IP, even across Nodes, but Pod IPs change whenever Pods are replaced, so clients usually talk to a Service instead of to individual Pods.

This is where Kube Proxy steps in. It watches the API Server for Services and their endpoints and programs rules on the Node that forward traffic sent to a Service's virtual IP to one of the backing Pods. Kube Proxy operates in different modes. In "iptables" mode, it creates netfilter rules in the Linux kernel to redirect traffic. In "IPVS" mode, it uses the kernel's IP Virtual Server, which scales better to large numbers of Services and supports several load-balancing algorithms.

Additionally, Kube Proxy works with Services in Kubernetes. Services provide a stable IP and DNS name for a set of Pods. Kube Proxy ensures that when you access a Service, your request gets routed to the appropriate Pod, even if the Pod's location changes.
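
The core of what Kube Proxy sets up is a translation from a Service's virtual IP and port to the addresses of its current backend Pods. The hypothetical `ToyServiceProxy` below models that table in Python with round-robin selection, which is the default algorithm in IPVS mode (iptables mode picks a backend at random instead). The IP addresses are made up, and real kube-proxy installs kernel rules rather than handling each request in user space.

```python
import itertools


class ToyServiceProxy:
    """Hypothetical model of the Service -> endpoints table that kube-proxy maintains."""

    def __init__(self):
        self._backends = {}  # (cluster_ip, port) -> round-robin iterator over endpoints

    def set_endpoints(self, cluster_ip, port, endpoints):
        # Re-run whenever the ready Pods behind the Service change.
        self._backends[(cluster_ip, port)] = itertools.cycle(list(endpoints)) if endpoints else None

    def route(self, cluster_ip, port):
        backends = self._backends.get((cluster_ip, port))
        if backends is None:
            raise ConnectionRefusedError(f"no endpoints for {cluster_ip}:{port}")
        return next(backends)


proxy = ToyServiceProxy()
proxy.set_endpoints("10.96.0.20", 80, ["10.244.1.5:8080", "10.244.2.7:8080"])
print([proxy.route("10.96.0.20", 80) for _ in range(3)])
# ['10.244.1.5:8080', '10.244.2.7:8080', '10.244.1.5:8080']

# A Pod was replaced: the Service IP stays the same, only the backends change.
proxy.set_endpoints("10.96.0.20", 80, ["10.244.3.9:8080"])
print(proxy.route("10.96.0.20", 80))  # 10.244.3.9:8080
```

Clients keep using `10.96.0.20:80` throughout; only the table behind it is rewritten, which is why a Service survives Pods moving between Nodes.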

iii.) Container Runtime

The Container Runtime is the engine that actually runs containers on each Node. It takes your container images, which package your application and its dependencies, and turns them into running containers. This component is crucial because it runs your applications in isolated environments, each with its own allocated resources like CPU and memory.

Containers share the same underlying operating system kernel, which makes them lightweight and efficient. The Container Runtime abstracts the complexities of managing these isolated environments, ensuring that applications don't interfere with each other.

In the Kubernetes architecture, this component is vital because it handles the deployment, management, and execution of containers on each Node. Kubernetes talks to runtimes through the Container Runtime Interface (CRI), so you can choose any CRI-compatible runtime, such as containerd or CRI-O, while Kubernetes takes care of orchestration.

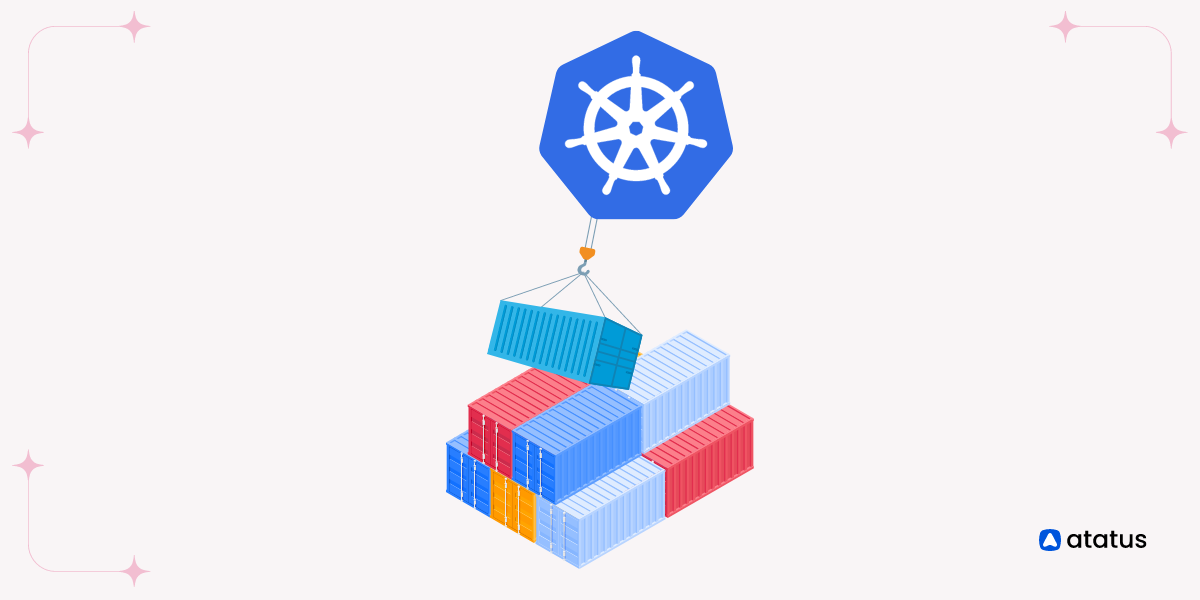

3. Pods

A Pod is the smallest deployable unit in Kubernetes and can hold one or more containers. Each Pod is assigned its own IP address, and its containers share a network namespace, so they can communicate with each other over localhost without exposing ports outside the Pod. Containers within a Pod can also share storage volumes, simplifying data exchange.

Kubernetes manages the deployment and scaling of Pods. For instance, if you want three replicas of your application for load balancing, Kubernetes creates three identical Pods, each containing the same set of containers. This enhances application availability and resilience.

Pods are ephemeral, however, and their life cycles are typically managed by controllers such as a Deployment. If a Pod fails, the controller ensures a new one is created to replace it, maintaining the desired state. Overall, Pods are a vital component of the Kubernetes architecture that facilitates container interaction, streamlined data exchange, and robust application reliability.
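
The three-replica example can be written as a Deployment manifest. The Python sketch below builds it as a plain dictionary following the `apps/v1` Deployment schema and prints it as JSON, which `kubectl apply -f` accepts as well as YAML. The names, labels, image tags, and the second "content-writer" container are illustrative assumptions; the two containers share an `emptyDir` volume, so the file one container writes is the page the other (nginx) serves.

```python
import json


def web_deployment(replicas=3):
    """Build an apps/v1 Deployment manifest as a plain dict (illustrative values)."""
    labels = {"app": "web"}  # must match between selector and Pod template
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "web"},
        "spec": {
            "replicas": replicas,  # the Deployment keeps this many identical Pods running
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # A Pod-level volume that both containers mount.
                    "volumes": [{"name": "shared-data", "emptyDir": {}}],
                    "containers": [
                        {
                            "name": "app",
                            "image": "nginx:1.27",
                            "ports": [{"containerPort": 80}],
                            "volumeMounts": [{"name": "shared-data",
                                              "mountPath": "/usr/share/nginx/html"}],
                        },
                        {
                            "name": "content-writer",
                            "image": "busybox:1.36",
                            # Rewrites the page nginx serves from the shared volume.
                            "command": ["sh", "-c",
                                        "while true; do date > /data/index.html; sleep 5; done"],
                            "volumeMounts": [{"name": "shared-data", "mountPath": "/data"}],
                        },
                    ],
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(web_deployment(), indent=2))
```

Saved to a file such as `web.json`, the output could be applied with `kubectl apply -f web.json`; if one of the three Pods is deleted, the Deployment's controllers create a replacement.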

4. Service

In Kubernetes, a Service is a critical component that exposes a set of Pods, selected by their labels, behind a stable virtual IP address and DNS name, and load-balances traffic across them. That endpoint stays the same even as individual Pods are created, destroyed, or rescheduled, so applications within the cluster can reliably reach each other without tracking Pod IPs.
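
A Service finds its Pods through a label selector. The Python sketch below pairs a minimal `ClusterIP` Service manifest (a dictionary in the `v1` Service schema) with a hypothetical `service_endpoints` helper that mimics how the endpoint list is derived: keep the Pods whose labels match the selector and that are ready. The Pod records and IP addresses are made up, and the helper only looks at the Service's first port.

```python
def selector_matches(selector, labels):
    """Equality-based selector: every key/value in the selector must be on the Pod."""
    return all(labels.get(key) == value for key, value in selector.items())


def service_endpoints(service, pods):
    """Return 'ip:targetPort' for ready Pods that the Service selects."""
    selector = service["spec"]["selector"]
    target_port = service["spec"]["ports"][0]["targetPort"]
    return [f'{p["ip"]}:{target_port}' for p in pods
            if p["ready"] and selector_matches(selector, p["labels"])]


web_service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {
        "type": "ClusterIP",
        "selector": {"app": "web"},
        "ports": [{"port": 80, "targetPort": 8080}],
    },
}

pods = [
    {"ip": "10.244.1.5", "labels": {"app": "web", "tier": "frontend"}, "ready": True},
    {"ip": "10.244.2.7", "labels": {"app": "web"}, "ready": False},   # failing readiness probe
    {"ip": "10.244.3.9", "labels": {"app": "db"}, "ready": True},     # different app
]

print(service_endpoints(web_service, pods))  # ['10.244.1.5:8080']
```

Extra labels such as `tier` do not prevent a match; a Pod only needs every key/value the selector asks for.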

5. ConfigMap

A ConfigMap is a Kubernetes API object used to store non-confidential configuration data as key-value pairs, separately from application code. Pods can consume it as environment variables, command-line arguments, or configuration files mounted from a volume, which lets you manage configuration settings in a centralized manner. ConfigMaps decouple configuration details from the application itself, so you can update settings without changing the application's code.
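
The environment-variable route can be sketched in Python. Below, a ConfigMap manifest is a dictionary in the `v1` ConfigMap schema, and a container spec loads it with `envFrom` while also setting one variable directly with `env`. The hypothetical `resolve_env` function models the documented precedence: for duplicate keys, later `envFrom` sources win, and explicit `env` entries override anything from `envFrom`. It handles only `configMapRef` sources and literal `value` entries; the keys and image name are illustrative.

```python
def resolve_env(container, config_maps):
    """Simplified model of how a container's environment is assembled."""
    env = {}
    for source in container.get("envFrom", []):
        # Sources are applied in order, so later ConfigMaps override earlier ones.
        ref = source["configMapRef"]["name"]
        env.update(config_maps[ref]["data"])
    for var in container.get("env", []):
        # Explicit env entries take precedence over envFrom.
        env[var["name"]] = var["value"]
    return env


app_config = {
    "apiVersion": "v1",
    "kind": "ConfigMap",
    "metadata": {"name": "app-config"},
    "data": {"LOG_LEVEL": "info", "FEATURE_X": "off"},
}

container = {
    "name": "app",
    "image": "example/app:1.0",  # hypothetical image
    "envFrom": [{"configMapRef": {"name": "app-config"}}],
    "env": [{"name": "LOG_LEVEL", "value": "debug"}],
}

print(resolve_env(container, {"app-config": app_config}))
# {'LOG_LEVEL': 'debug', 'FEATURE_X': 'off'}
```

Changing `FEATURE_X` in the ConfigMap changes the application's behavior without rebuilding its image, although environment variables are only read when the container starts.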

Kubernetes Components Best Practices

- Cluster Size Optimization: Maintain manageable cluster sizes to prevent wasted resources and operational complexities.

- Resource Allocation Precision: Set accurate resource requests and limits so the Scheduler can place Pods correctly and workloads share Node resources fairly.

- Proactive Health Monitoring: Configure liveness probes so the Kubelet restarts unhealthy containers, and readiness probes so Services send traffic only to Pods that are ready.

- Logical Resource Separation: Utilize namespaces to logically group applications, enhancing organization and resource isolation.

- Sensitive Data Protection: Store sensitive data such as passwords and API keys in Kubernetes Secrets instead of hardcoding them, and enable encryption at rest, since Secrets are only base64-encoded by default.

- Enhanced Deployment Control: Opt for Deployments for finer control over scaling, updates, and rollbacks compared to direct Pod definitions.

- Service Types: Choose the appropriate Service type (ClusterIP, NodePort, LoadBalancer) based on application requirements.

- Automated Scaling Agility: Enable Horizontal Pod Autoscaling to dynamically adapt pod counts according to CPU or custom metrics.

- Access Management: Enforce Role-Based Access Control (RBAC) to manage permissions and minimize security vulnerabilities.

- Data Safety Net: Regularly back up the cluster's control plane database (etcd) to streamline recovery during failures.
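
The Horizontal Pod Autoscaling practice rests on a simple documented rule: `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance of 1.0 (0.1 by default). The Python function below is a minimal sketch of that rule with hypothetical parameter names; the real controller also handles missing metrics, Pods that are not ready, and a stabilization window before scaling down.

```python
import math


def hpa_desired_replicas(current_replicas, current_metric, target_metric,
                         min_replicas=1, max_replicas=10, tolerance=0.1):
    """Core Horizontal Pod Autoscaler rule, clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: do nothing
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 3 Pods averaging 90% CPU against a 60% target -> ceil(3 * 1.5) = 5 Pods.
print(hpa_desired_replicas(3, current_metric=90, target_metric=60))  # 5
```

Rounding up errs on the side of capacity, and the tolerance band keeps small metric fluctuations from causing constant scaling.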

Conclusion

Understanding Kubernetes components and how to set them up on Linux opens the door to powerful application orchestration. The control plane acts as central control and assigns work to worker Nodes, much like a lead assigning tasks to specialized members of a team.

Pods group containers into integrated units that work closely together. Services and Networking handle communication, much like efficient channels for data transfer, while ConfigMaps and Secrets manage configuration and sensitive information, comparable to secure communication protocols.

Setting up Kubernetes on Linux involves bringing these components together. Linux serves as the foundation, a container runtime such as containerd (or Docker Engine through the cri-dockerd adapter) handles application isolation, and kubectl, the command-line tool, directs these actions.

As we conclude, understanding Kubernetes components and their Linux setup empowers us to orchestrate applications effectively. Whether you are a beginner or an expert, Kubernetes offers a solid framework for running applications efficiently and reliably.

Monitor Kubernetes Nodes and Pods with Atatus

Ensure your nodes and pods are operating at their best by leveraging our advanced monitoring features. Stay ahead of issues, optimize performance, and deliver a seamless experience to your users. Don't compromise on the health of your Kubernetes environment.

- Effortless Node Insights: Gain real-time visibility into CPU, memory, network, and disk usage on nodes, preventing bottlenecks and failures.

- Precise Pod Monitoring: Monitor container resource usage, latency, and errors in pods, ensuring seamless application health.

- Seamless Integration: Atatus smoothly integrates with your Kubernetes setup, simplifying node and pod monitoring setup.

- Smart Alerts: Receive timely anomaly alerts, empowering you to swiftly respond and maintain a stable infrastructure.

- Enhanced Application Performance: Ensure consistently reliable application delivery, improving user experience and satisfaction.

Start monitoring your Kubernetes Nodes and Pods with Atatus. Try your 14-day free trial of Atatus.
