Load Balancing 101: Understanding the Basics for Improved Web Performance

In today's digital landscape, where websites and applications are expected to handle increasing traffic loads, ensuring optimal performance and reliability is of paramount importance.

Load balancing, a technique used to distribute incoming network traffic across multiple servers, plays a critical role in achieving these goals.

Load balancers, the key components in this process, help optimize resource utilization, improve scalability, and raise overall system efficiency.

In this article, we will explore the concept of load balancing and see how load balancers help businesses maintain high availability and performance round the clock.

Table of Contents

- Need for Load Balancing

- Understanding Load Balancers

- Types of Load Balancers

- Load Balancing Algorithms

- How load balancing helps businesses

- How can you improve the capabilities of load balancers?

- How to choose the right load-balancing solution for your business?

Need for Load Balancing

With the exponential growth of internet usage and the increasing demands placed on web services, load balancing has become essential for maintaining high performance and availability. By distributing traffic evenly, load balancing helps to:

- Enhance scalability

- Optimize resource utilization

- Improve reliability

Load balancing allows organizations to easily add or remove servers as traffic demands fluctuate. This enables seamless scalability and accommodates growing user bases without causing service disruptions.

By efficiently distributing workloads, load balancing ensures that server resources are utilized to their full potential. This helps to prevent server overload, thereby maximizing the efficiency and cost-effectiveness of infrastructure investments.

Load balancers monitor the health and availability of servers in real-time. In the event of a server failure or outage, load balancers redirect traffic to other healthy servers, thereby ensuring uninterrupted service and minimizing downtime.
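
The failover behaviour described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the `Server` class, the `healthy` flag, and the `route` function are hypothetical names, and a real load balancer would set the flag from periodic health probes rather than by hand.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    healthy: bool = True  # assumed to be updated by a separate health checker


def healthy_servers(pool):
    """Return only the servers currently marked healthy."""
    return [s for s in pool if s.healthy]


def route(pool, request_id):
    """Pick a healthy server for a request, skipping failed ones."""
    candidates = healthy_servers(pool)
    if not candidates:
        raise RuntimeError("no healthy servers available")
    # Simple modulo choice; any balancing algorithm could be plugged in here.
    return candidates[request_id % len(candidates)]


pool = [Server("app-1"), Server("app-2"), Server("app-3")]
pool[1].healthy = False  # simulate a failed health check on app-2
chosen = [route(pool, i).name for i in range(4)]
print(chosen)  # app-2 never appears while it is marked unhealthy
```

Because unhealthy servers are filtered out before a choice is made, traffic keeps flowing to the remaining servers without any change on the client side.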

Understanding Load Balancers

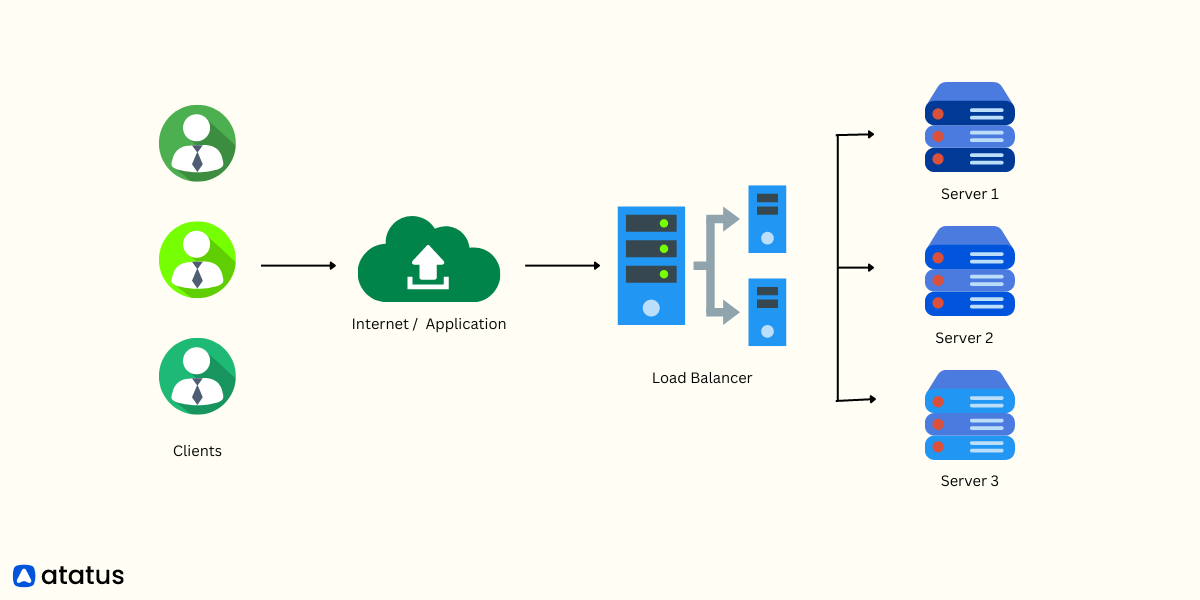

Load balancing is a technique used to evenly distribute incoming network traffic across multiple servers, ensuring that no single server is overwhelmed with excessive requests. By distributing the workload across multiple servers, load balancing improves the responsiveness, scalability, and availability of web applications.

Load balancers act as intermediaries between clients (such as users or devices) and servers, intelligently routing incoming requests to the most appropriate server based on predefined algorithms, rules, or metrics.

A load balancer prevents any single server from becoming a bottleneck and provides fault tolerance by redirecting traffic to functioning servers in the event of a failure.

Load balancers can be implemented at different layers of the network stack, including the application layer, transport layer, or network layer, depending on the specific requirements and architecture of the system. They can also provide additional functionality such as SSL termination, session persistence, caching, and security features like firewalls and intrusion prevention systems. Several of these features come up again in the sections that follow.

Types of Load Balancers

Load balancers can be implemented at different levels of the network stack, depending on the specific requirements of the system.

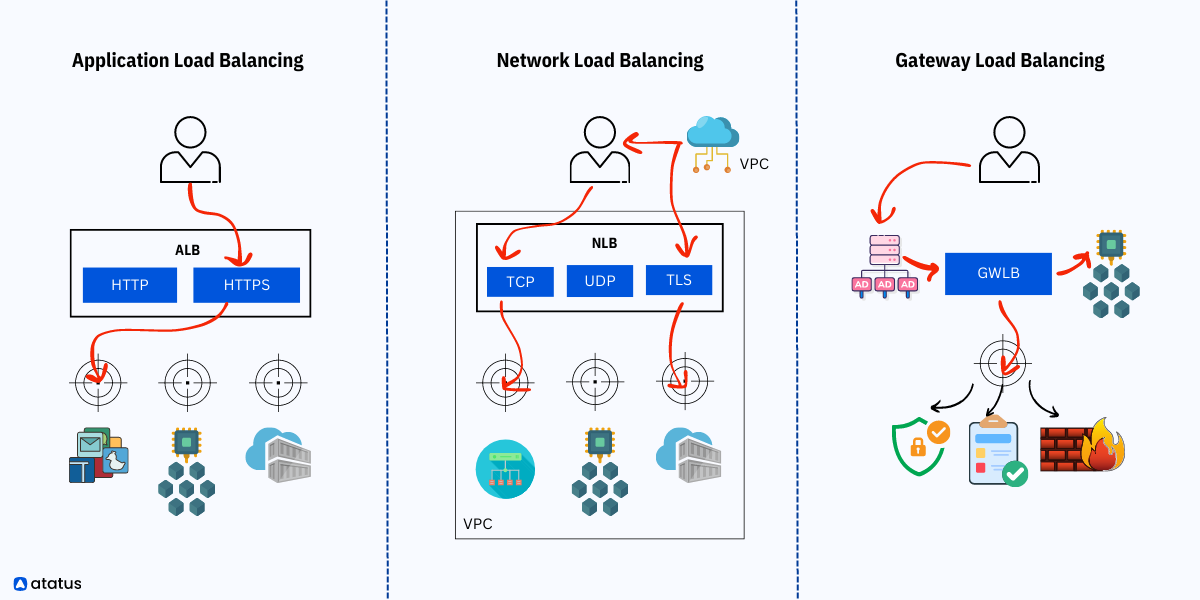

- Application Layer Load Balancers: These load balancers operate at the highest layer of the network stack (Layer 7) and are capable of inspecting the content of the incoming traffic. They can make intelligent decisions based on application-specific data, such as HTTP headers or cookies. Application layer load balancers are commonly used for HTTP/HTTPS-based applications and offer advanced features like SSL termination and content caching.

- Transport Layer Load Balancers: Operating at the transport layer (Layer 4) of the network stack, these load balancers make routing decisions based on information in the network transport layer protocols (e.g., TCP or UDP). Transport layer load balancers are less application-aware compared to application layer load balancers but are still effective in distributing traffic across multiple servers.

- Network Layer Load Balancers: These load balancers operate at the network layer (Layer 3) and use network-level information, such as IP addresses, to distribute traffic. Network layer load balancers are typically used for routing traffic across multiple data centers or geographical locations.

- Multi-cloud load balancers: These load balancers operate across different cloud platforms, allowing businesses to take advantage of the strengths and resources offered by each provider. With multi-cloud load balancing, organizations can achieve enhanced performance by effectively managing and balancing traffic across multiple cloud environments. It provides flexibility, resilience, and the ability to leverage diverse cloud services, ensuring optimal resource utilization and delivering a seamless experience to users regardless of the cloud platform they are accessing.

Multi-cloud load balancers play a crucial role in enabling businesses to maximize the benefits of a multi-cloud strategy, reducing dependence on a single cloud provider, and promoting a highly available and scalable infrastructure.
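
To make the difference between the transport layer and application layer balancers described above more concrete, here is a rough Python sketch of what each one can base its decision on. The backend names, the `ROUTES` table, and both functions are made up for illustration; real products expose this through configuration rather than code like this.

```python
import zlib

BACKENDS = ["srv-a", "srv-b", "srv-c"]  # hypothetical transport-layer pool


def l4_pick(src_ip, src_port, backends=BACKENDS):
    """Layer 4: decide using only connection-level fields (address and port)."""
    key = f"{src_ip}:{src_port}".encode()
    return backends[zlib.crc32(key) % len(backends)]


# Hypothetical path-based routing table for an application-layer balancer.
ROUTES = {"/api/": ["api-1", "api-2"], "/static/": ["cdn-1"]}
DEFAULT_POOL = ["web-1"]


def l7_pick_pool(path):
    """Layer 7: decide by inspecting the HTTP request itself (here, the path)."""
    for prefix, pool in ROUTES.items():
        if path.startswith(prefix):
            return pool
    return DEFAULT_POOL


print(l4_pick("198.51.100.4", 51234))
print(l7_pick_pool("/api/users"))
```

The transport-layer function never sees the URL, which is why it is cheaper but less application-aware; the application-layer function needs the parsed request, which is what makes content-based routing possible.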

Load Balancing Algorithms

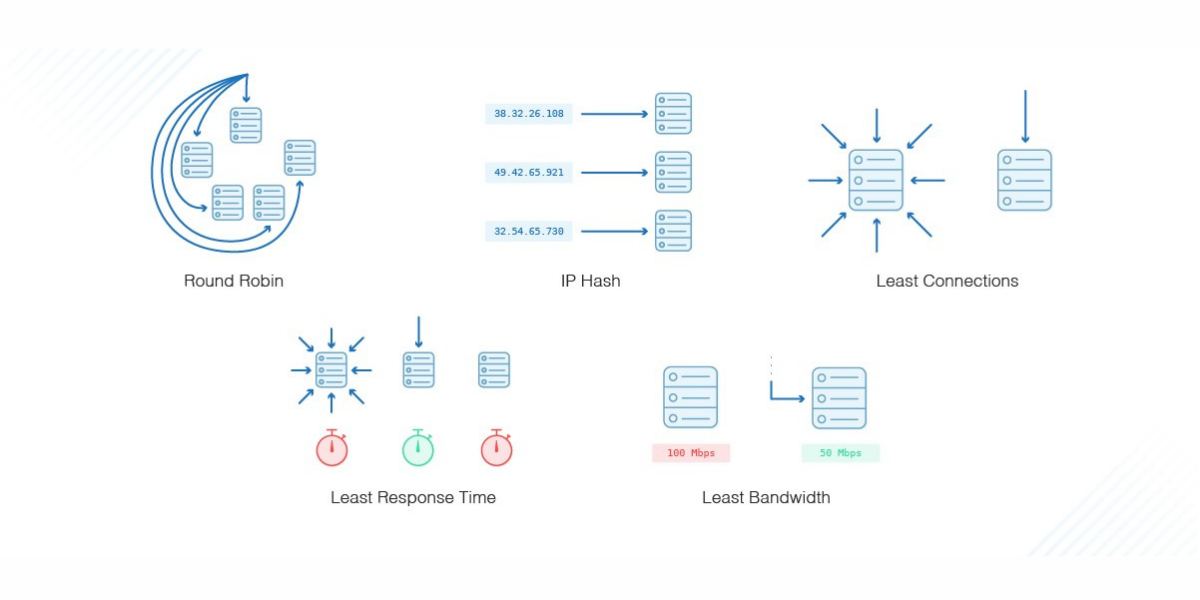

Load balancers employ various algorithms to distribute traffic across servers effectively. Here are some commonly used load-balancing algorithms:

- Round Robin: Traffic is distributed evenly across servers in a cyclic manner. This algorithm is simple and ensures an equal share of traffic for each server. However, it does not consider server load or capacity.

- Least Connection: Traffic is assigned to the server with the fewest active connections. This algorithm ensures that servers with lower loads receive more traffic, leading to a more balanced distribution.

- Weighted Round Robin: Servers are assigned different weights, and traffic is distributed proportionally based on these weights. Servers with higher weights handle more significant traffic loads.

- IP Hash: Traffic is distributed based on the source IP address of the client, ensuring that requests from the same IP address are always routed to the same server. This is useful for maintaining session persistence.

How Round Robin works

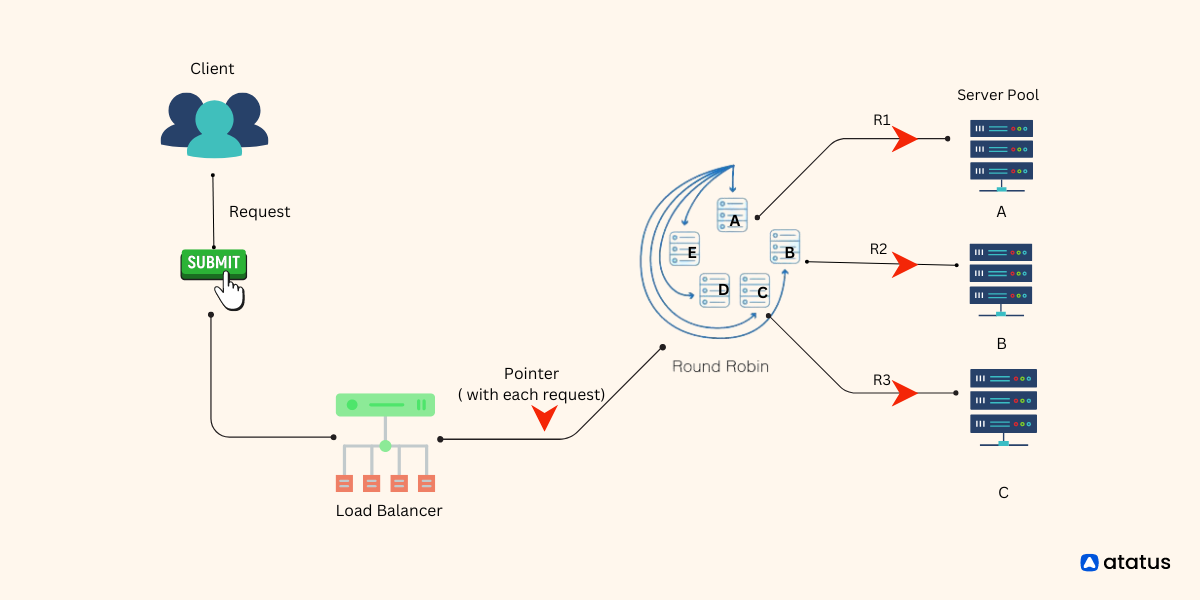

Round Robin is a simple and commonly used load-balancing algorithm that distributes incoming traffic across a group of servers in a cyclic manner. The algorithm ensures an equal share of the workload for each server in the pool. Here's how Round Robin works:

- Initially, a pool of servers is set up to handle incoming traffic. These servers are typically identical and capable of processing requests.

- When a client sends a request, the load balancer receives it and applies the Round Robin algorithm. The load balancer maintains a pointer that keeps track of the last server to which a request was sent.

- Using the pointer, the load balancer selects the next server in line based on the Round Robin sequence. For example, if there are three servers labeled A, B, and C, the load balancer may start by directing the first request to server A, then the second request to server B, and the third request to server C.

- The load balancer forwards the client's request to the selected server. The server processes the request and sends the response back to the client through the load balancer.

- After each request is processed, the load balancer increments the pointer, moving it to the next server in the sequence. If the pointer reaches the last server, it wraps around to the beginning of the sequence, ensuring a cyclic distribution pattern.

- The load balancer continues this process for subsequent requests, evenly distributing the traffic across all servers in the pool.
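
The steps above can be sketched as a small Python class. This is only an illustration of the pointer-and-wrap-around idea; the class name `RoundRobinBalancer` and its `pick` method are assumptions made for this example, not part of any specific load balancer.

```python
class RoundRobinBalancer:
    """Hand out servers in a fixed cyclic order."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("server pool must not be empty")
        self.servers = list(servers)
        self._next = 0  # index of the server that receives the next request

    def pick(self):
        server = self.servers[self._next]
        # Advance the pointer and wrap around at the end of the pool.
        self._next = (self._next + 1) % len(self.servers)
        return server


rr = RoundRobinBalancer(["A", "B", "C"])
order = [rr.pick() for _ in range(5)]
print(order)  # ['A', 'B', 'C', 'A', 'B']
```

Note that the choice depends only on the pointer, never on how busy a server is, which is exactly the lack of server awareness listed under the considerations.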

Benefits:

- Simplicity

- Equal Distribution

- Suitable for Stateless Applications

Considerations:

- Lack of Server Awareness

- No Session Persistence - consecutive requests from the same client may land on different servers

- Limited Adaptability

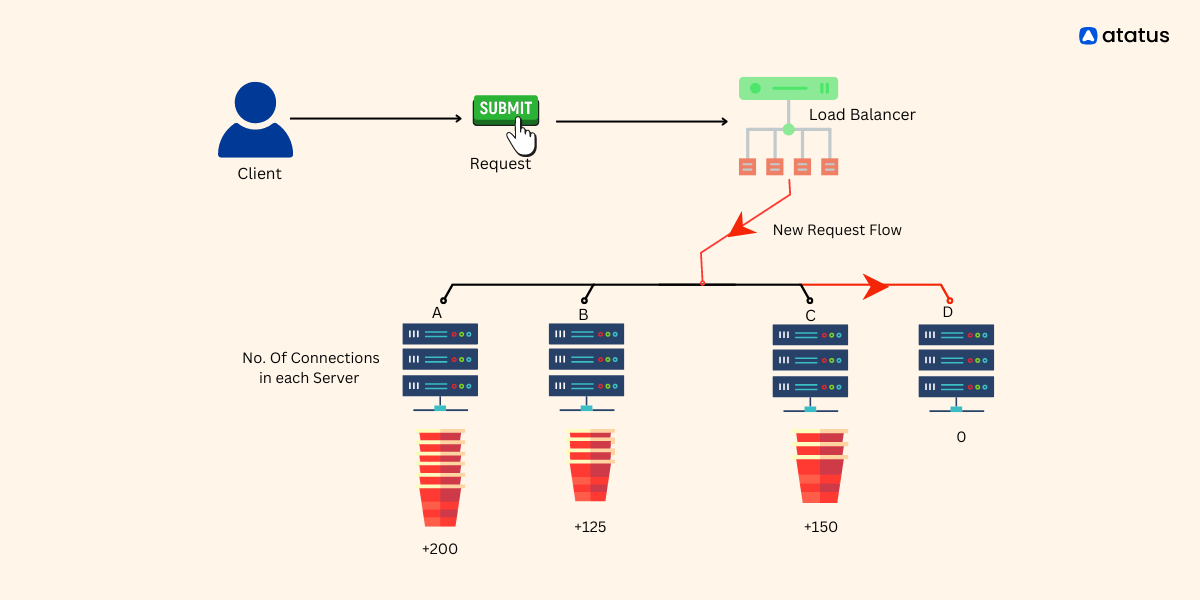

How Least Connection works

The Least Connection load balancing algorithm is designed to distribute incoming traffic across servers based on the number of active connections each server is currently handling. The algorithm aims to direct traffic to the server with the fewest active connections, spreading the workload evenly across the server pool.

- When a client sends a request, the load balancer receives it and applies the Least Connection algorithm.

- The load balancer compares the number of active connections on each server and selects the server with the fewest connections. This ensures that the incoming request is directed to the server that currently has the least workload.

- The load balancer forwards the request to the selected server and increments that server's connection count, reflecting the new active connection.

- The server processes the request and sends the response back to the client via the load balancer.

- When the connection closes, the load balancer decrements the server's connection count, so subsequent requests are distributed based on up-to-date counts.
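
A minimal Python sketch of this selection logic is shown below. It assumes a connection counts as active from the moment a request is forwarded until its response completes, and the names `LeastConnectionBalancer`, `acquire`, and `release` are hypothetical, chosen only for this example.

```python
class LeastConnectionBalancer:
    """Send each new request to the server with the fewest active connections."""

    def __init__(self, servers):
        self.active = {s: 0 for s in servers}  # server -> active connection count

    def acquire(self):
        # min() returns the first server with the lowest count, so ties are
        # broken by the order in which the servers were registered.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1  # the forwarded request is now an active connection
        return server

    def release(self, server):
        # Call this when the response has been sent and the connection closes.
        self.active[server] -= 1


lc = LeastConnectionBalancer(["A", "B", "C"])
first = lc.acquire()   # all counts are 0, so "A" is chosen
second = lc.acquire()  # "B" (A now has 1 connection)
lc.release(first)      # A's request finishes: A=0, B=1, C=0
third = lc.acquire()   # "A" again, tied with C at 0 and registered first
print(first, second, third)
```

The counts say nothing about how heavy each connection is, so a server holding a few expensive requests can still look idle to this algorithm.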

Benefits:

- Equal distribution, no overloading of servers

- Scaling based on request traffic

- Well suited to long-lived connections of uneven duration; it does not by itself keep a client on one server, so session state needs a separate persistence mechanism

Considerations:

- Does not consider the duration or complexity of existing connections.

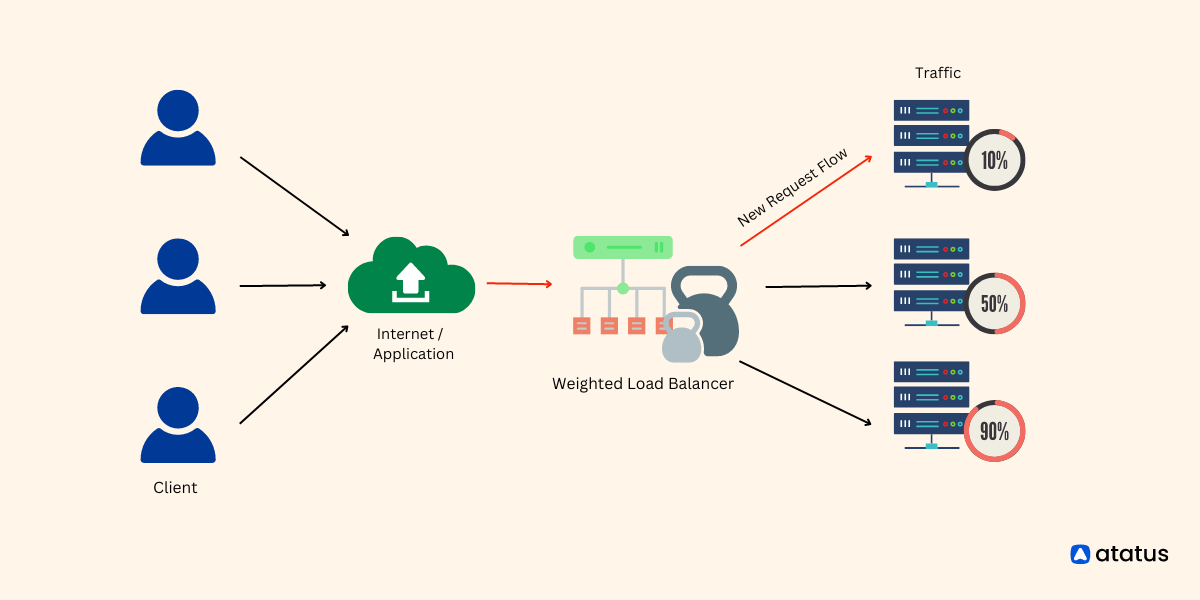

How Weighted Round Robin works

Each server is assigned a weight value, indicating its capacity or performance capabilities. The algorithm uses these weights to determine the proportion of traffic that each server should receive.

- Initially, a pool of servers is set up to handle incoming traffic. Each server is assigned a weight value that represents its capacity or performance.

- The weight can be any positive integer, and servers with higher weights are assigned a larger proportion of traffic.

- The total weight of all servers in the pool is calculated by summing up the individual weights. This total weight is used as the basis for determining the proportion of traffic allocated to each server.

- For each incoming request, the load balancer selects a server according to the weighted distribution ratio and forwards the client's request to it.

- The server processes the request and sends the response back to the client via the load balancer.

- The load balancer continues this process for each subsequent request, recalculating the weighted distribution ratio and selecting the server accordingly.
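
One simple way to realise the weighted ratio is to repeat each server in the rotation as many times as its weight. The Python sketch below takes that approach; the function name `weighted_round_robin` and the example weights are assumptions for illustration, and production balancers often interleave the picks more smoothly so a heavily weighted server does not receive bursts.

```python
import itertools


def weighted_round_robin(weights):
    """Return an endless iterator where each server appears `weight` times per cycle."""
    if not weights or any(w <= 0 for w in weights.values()):
        raise ValueError("weights must be positive integers")
    # Expand the pool so each server occupies as many slots as its weight.
    sequence = [name for name, w in weights.items() for _ in range(w)]
    return itertools.cycle(sequence)


# Total weight is 6, so A gets 3/6 of the traffic, B 2/6, and C 1/6.
picker = weighted_round_robin({"A": 3, "B": 2, "C": 1})
cycle = [next(picker) for _ in range(6)]
print(cycle)  # ['A', 'A', 'A', 'B', 'B', 'C']
```

Over any full cycle the share of requests per server equals its weight divided by the total weight, which matches the proportion described in the steps above.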

Benefits:

- Weight-based traffic assignment to servers

- Choosing servers based on their performance

- Weights can be adjusted based on response time, server performance, and similar metrics

Considerations:

- As the number of servers increases, assigning and maintaining weights across them becomes tedious.

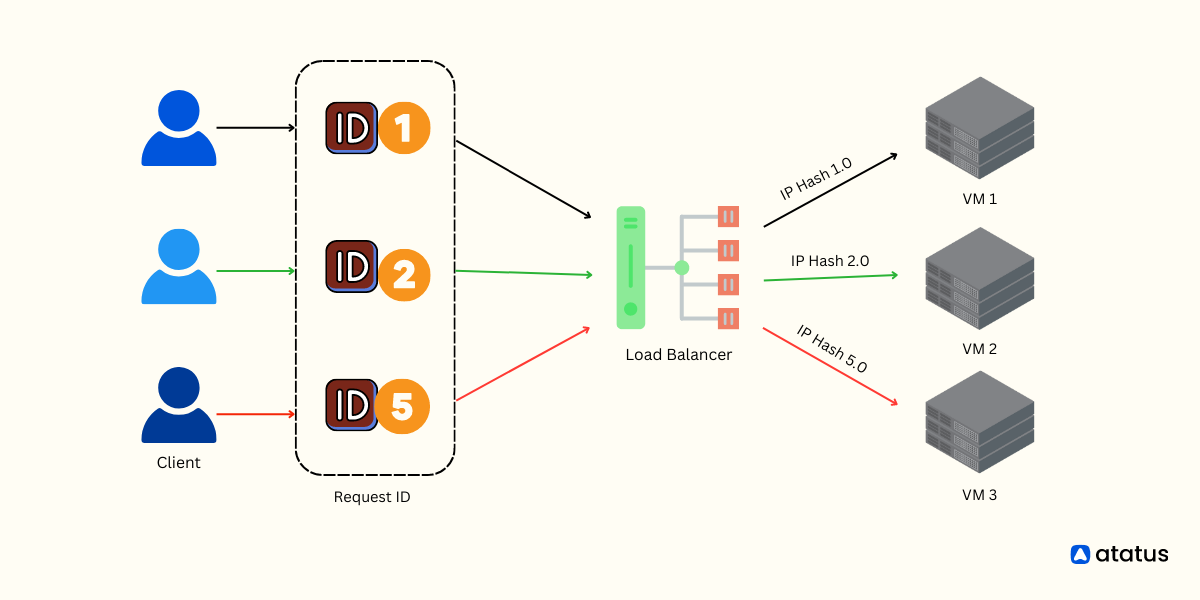

How IP Hash works

IP Hash is a load balancing algorithm that distributes incoming traffic across multiple servers based on the source IP address of the client. It ensures that requests from the same IP address are consistently routed to the same server.

- The load balancer applies a hashing function to the client's IP address.

- The hashing function generates a deterministic hash value from the IP address. The load balancer uses the hash value to select a server from the server pool.

- The algorithm typically maps the hash value to one of the available servers.

- As long as the client's IP address remains the same, subsequent requests from that client will be consistently routed to the same server. This is achieved because the hash value generated from the client's IP address remains constant, and therefore, the load balancer consistently selects the same server.
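
The mapping from IP address to server can be sketched in Python as follows. The function name `pick_by_ip` and the modulo mapping are assumptions for this example; it uses `hashlib` rather than Python's built-in `hash()`, because the built-in string hash is randomised per process and would not give stable results across restarts.

```python
import hashlib


def pick_by_ip(client_ip, servers):
    """Map a client IP address to one server, deterministically."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer and map it onto the pool.
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["A", "B", "C"]
first_visit = pick_by_ip("203.0.113.7", servers)
second_visit = pick_by_ip("203.0.113.7", servers)
print(first_visit == second_visit)  # True: same IP, same server
```

With a plain modulo mapping like this one, adding or removing a server changes the result for many clients at once; consistent hashing is a common way to limit that reshuffling.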

Benefits:

- Session persistence

- Consistent connection for long-lived request flows

Considerations:

- Limited failover: if a server fails, its clients have to be remapped to another server and lose any session state held on the failed one.

- Clients behind network address translation (NAT) share one source IP address and are all routed to the same server, which can skew the distribution. Conversely, a client whose IP address changes may be routed to a different server.

How load balancing helps businesses

Load balancing plays a crucial role in helping businesses optimize their operations and achieve various benefits. Here are some ways load balancing helps businesses:

- Load balancing distributes incoming traffic across multiple servers, ensuring that no single server becomes overwhelmed with excessive requests. This leads to improved response times and faster processing of client requests, resulting in enhanced performance and a better user experience.

- As businesses grow and experience increased traffic demands, load balancing allows for seamless scalability. Additional servers can be added to the load balancing pool, and the load balancer automatically distributes traffic across these servers.

- In the event of a server failure or outage, load balancers can detect the issue and automatically redirect traffic to healthy servers. This process ensures uninterrupted service, minimizes downtime, and prevents potential revenue losses and negative customer experiences.

- Load balancing contributes to business continuity by ensuring that applications remain operational even during peak loads or server failures. This resilience is essential for e-commerce platforms, online services, and any business that heavily relies on its digital infrastructure.

- By using load balancers, businesses can easily add, remove, or replace servers without affecting the end-users. Load balancers provide a centralized point of control, allowing businesses to make changes to their infrastructure and applications while maintaining continuous service.

- Load balancers can provide an additional layer of security by acting as a reverse proxy and terminating SSL connections. This enables businesses to offload the SSL encryption/decryption process from the application servers, reducing the computational burden on the servers and improving their performance. Load balancers can also perform other security functions such as filtering and blocking malicious traffic and helping to mitigate distributed denial-of-service (DDoS) attacks, giving applications improved protection.

How can you improve the capabilities of load balancers?

- Application-Aware Load Balancing - Consider using application-aware load balancers that can understand the content and characteristics of the traffic they handle. This allows for more intelligent decision-making based on application-specific requirements, such as HTTP headers, cookies, or URL patterns. Application-aware load balancers can optimize performance by routing requests to servers that are best suited to handle specific application features or workloads.

- Load Testing and Monitoring - Regularly conduct load testing to assess the performance and capacity limits of your system.

- Dynamic Configuration and Auto-Scaling - Leverage dynamic configuration capabilities in your load balancer to adapt to changing traffic patterns. Implement auto-scaling mechanisms that can automatically adjust the number of servers based on demand. This ensures that you have the appropriate capacity to handle fluctuating traffic loads without manual intervention.

- Load Balancer Redundancy - Ensure high availability and fault tolerance by implementing redundant load balancers. Having multiple load balancers in an active-passive or active-active configuration helps distribute the load balancer's own workload and provides failover capabilities. Redundancy ensures that your load balancing infrastructure remains operational even if one load balancer fails.

- Consider Multiple Load Balancing Layers - For larger and more complex deployments, consider implementing multiple layers of load balancing. This can involve a combination of global load balancers that distribute traffic across different data centers or regions and local load balancers that balance traffic within each data center.

- Distributed Caching - Implementing distributed caching systems, such as Content Delivery Networks (CDNs) or in-memory caches, can significantly offload traffic from your backend servers. These systems store frequently accessed data closer to the end users, reducing the load on your servers and improving overall performance.

- Security Considerations - Load balancing can also contribute to enhancing security. Implement SSL termination on load balancers to offload SSL/TLS encryption and decryption processes from your servers, improving their performance. Utilize load balancers with built-in security features such as Web Application Firewalls (WAFs) or DDoS protection to safeguard against malicious attacks.

- Regular Review and Optimization.
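
The auto-scaling item above largely comes down to a sizing calculation. The following Python sketch shows one possible rule, purely as an assumption for illustration: the function name, the 70% target utilization, and the minimum and maximum pool sizes are all hypothetical values, not recommendations.

```python
import math


def desired_server_count(current_rps, rps_per_server,
                         target_utilization=0.7,
                         min_servers=2, max_servers=20):
    """Estimate how many servers are needed to keep each one below the target load."""
    if rps_per_server <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("capacity must be positive and utilization in (0, 1]")
    # Each server is only planned to run at a fraction of its full capacity.
    usable_rps = rps_per_server * target_utilization
    needed = math.ceil(current_rps / usable_rps)
    # Clamp to the configured pool limits.
    return max(min_servers, min(max_servers, needed))


print(desired_server_count(5000, 1000))    # 8
print(desired_server_count(100, 1000))     # 2 (never below the minimum)
print(desired_server_count(100000, 1000))  # 20 (capped at the maximum)
```

Keeping a minimum of two servers in this sketch reflects the redundancy point made earlier: a pool of one leaves nothing to fail over to.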

How to choose the right load-balancing solution for your business?

Choosing the right load balancer involves considering various factors such as performance requirements, scalability needs, deployment environment, available features, and budget. Here are some key considerations and examples of popular open-source load balancers in the market:

- Evaluate the load balancer's performance capabilities and its ability to handle the expected traffic volume. Consider features like connection handling, throughput, and requests per second (RPS) capacity. Examples of high-performance open-source load balancers include HAProxy and NGINX.

- Determine whether you require an on-premises load balancer or a cloud-native solution. For on-premises deployments, software load balancers like HAProxy and NGINX can be installed on dedicated hardware or virtual machines. For cloud environments, consider cloud provider-specific load balancing services such as Google Cloud Load Balancing or AWS Elastic Load Balancing.

- Ensure that the load balancer supports the protocols used by your applications, such as HTTP, HTTPS, TCP, or UDP. Also, consider the load balancing algorithms available, such as round-robin, least connection, or weighted distribution, and choose a load balancer that offers the appropriate balancing methods for your specific requirements.

- Consider the ease of configuration, management, and monitoring capabilities offered by the load balancer. Look for features like a user-friendly interface, centralized management, and integration with monitoring tools. For example, Traefik ships with a built-in dashboard, and HAProxy Enterprise, the commercial edition of HAProxy, adds management tooling on top of the open-source project.

- Evaluate if the load balancer provides additional features that align with your needs, such as SSL termination, session persistence, content caching, rate limiting, or web application firewall (WAF) capabilities. Open-source load balancers like Envoy and Traefik offer a wide range of advanced features and extensibility options.

- Consider the size and activity of the load balancer's open-source community, as well as the availability of documentation, forums, and community support. A thriving community ensures ongoing development, frequent updates, and access to valuable resources and knowledge-sharing. HAProxy, NGINX, and Envoy are examples of open-source load balancers with strong community support.

Some examples of popular open-source load balancers in the market include:

- HAProxy: A widely-used and highly performant TCP and HTTP load balancer with a rich set of features, including load balancing algorithms, health checks, SSL termination, and more.

- NGINX: Initially designed as a web server, NGINX also functions as a reverse proxy and load balancer. It offers advanced load balancing capabilities, caching, SSL termination, and extensive configuration options.

- Envoy: A modern, high-performance edge and service proxy that provides advanced load balancing, observability, and dynamic configuration capabilities. It is widely used in microservices and cloud-native architectures.

- Traefik: A cloud-native reverse proxy and load balancer built for modern containerized environments. It offers automatic service discovery, dynamic configuration, and integrates seamlessly with popular container orchestration platforms like Kubernetes.

Remember to thoroughly evaluate and test load balancers based on your specific requirements and conduct a proof-of-concept to ensure compatibility and performance before deploying them in production environments.

Conclusion

Load balancing and load balancers are critical components in optimizing the performance of web applications. By efficiently distributing incoming traffic across multiple servers, load balancers ensure that workloads are balanced, resources are optimized, and downtime is minimized.

With the increasing demands on web services, load balancing has become an indispensable tool for organizations seeking to provide a seamless and reliable user experience.

By implementing load-balancing solutions, businesses can strengthen their operations and maintain a competitive edge in the digital landscape.

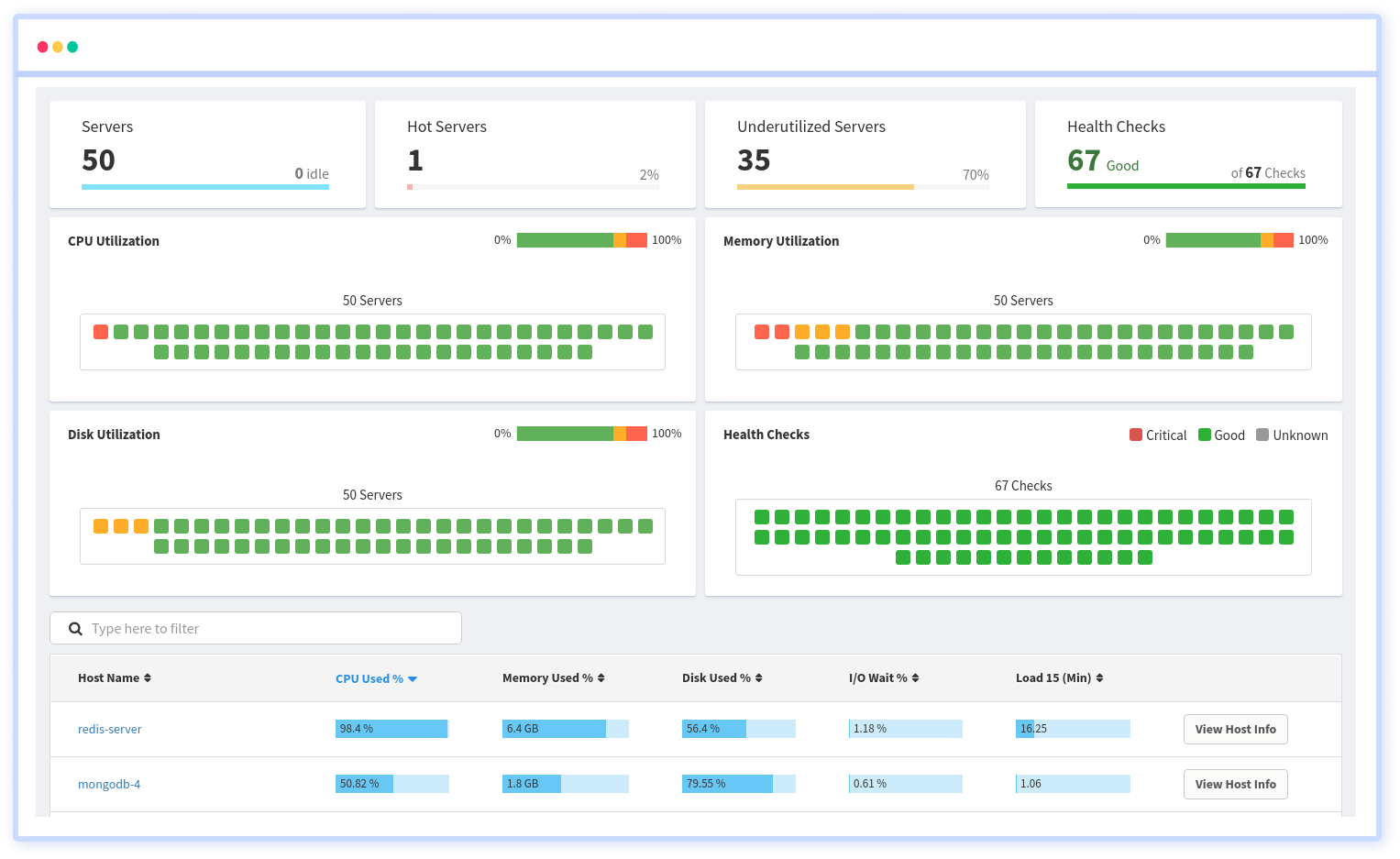

Infrastructure Monitoring with Atatus

Track the availability of the servers, hosts, virtual machines and containers with the help of Atatus Infrastructure Monitoring. It allows you to monitor, quickly pinpoint and fix the issues of your entire infrastructure.

In order to ensure that your infrastructure is running smoothly and efficiently, it is important to monitor it regularly. By doing so, you can identify and resolve issues before they cause downtime or impact your business.

It is possible to determine the host, container, or other backend component that failed or experienced latency during an incident by using an infrastructure monitoring tool. In the event of an outage, engineers can identify which hosts or containers caused the problem. As a result, support tickets can be resolved more quickly and problems can be addressed more efficiently.
