Since the Docker containerization project launched in 2013, containers have grown in popularity, yet large, distributed containerized applications can be challenging to manage. Kubernetes made containerized systems easier to manage at scale, which helped it become a key component of the container revolution.

We will go over the following:

- What is Kubernetes?

- Why is Kubernetes Important?

- Features of Kubernetes

- How Does Kubernetes Work?

- Benefits of Kubernetes

What is Kubernetes?

Kubernetes, often abbreviated to K8s, is an open-source platform that automates the deployment and dynamic scaling of containerized applications.

Kubernetes builds on containers, a Linux technology that packages applications into isolated logical units that can be managed centrally and securely. Containers are designed to be transient: because user data is stored outside the container, a container can crash or be terminated without losing that data.

Because containerized applications are treated as disposable, a cluster of servers can start new instances of an application when many users need it and run only a few instances when demand is low.

A sysadmin could do this manually, or write a set of scripts that monitor traffic and take appropriate action. Kubernetes makes it automatic.
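To make "automatic" more concrete, the Kubernetes documentation describes the Horizontal Pod Autoscaler as computing desired replicas = ceil(current replicas × current metric / target metric). The Python sketch below only illustrates that formula. The function name, the replica bounds, and the sample numbers are assumptions made for this example, and the real autoscaler considers further factors such as pod readiness.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Illustrative version of the documented autoscaling formula.

    Parameter names and default bounds are assumptions for this sketch.
    """
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 4 instances at 90% average CPU with a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # 4 instances at 30% average CPU with a 60% target: scale in.
    print(desired_replicas(4, 30, 60))
```

A hand-written traffic script would have to implement this kind of rule itself and keep it running; in Kubernetes it is configured rather than coded.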

Kubernetes is aimed at large-scale deployments. In truth, you generally don't need Kubernetes unless your web applications receive so many visitors that performance suffers. That said, as Kubernetes grows more popular it also becomes easier to run at home, and there are many solid reasons to do so.

Although Kubernetes simplifies container management, it is primarily a DIY toolset. You can download and use upstream Kubernetes exactly as it is developed (and many people do), but doing so often means eventually building custom tools to support your cluster.

Why is Kubernetes Important?

You can deploy and manage containerized, traditional, and cloud-native applications with Kubernetes, as well as microservices.

Development teams must ship new products and services quickly to keep up with changing business demands. A cloud-native architecture, starting with containerized microservices, enables faster development and makes it simpler to refactor and improve existing applications.

- Given the growth in internet usage, applications should not be expected to go offline for maintenance or updates.

- Every business wants its deployments to scale with user demand. For example, if more requests come in, the deployment should automatically receive more CPU and memory; otherwise, the server may crash.

- Likewise, no one wants to pay for extra CPU and memory on cloud services when that capacity is not always needed. An intelligent system should therefore manage CPU and memory utilization efficiently according to demand.

This is where Kubernetes comes in. It handles all of the needs above and takes a significant load off developers.

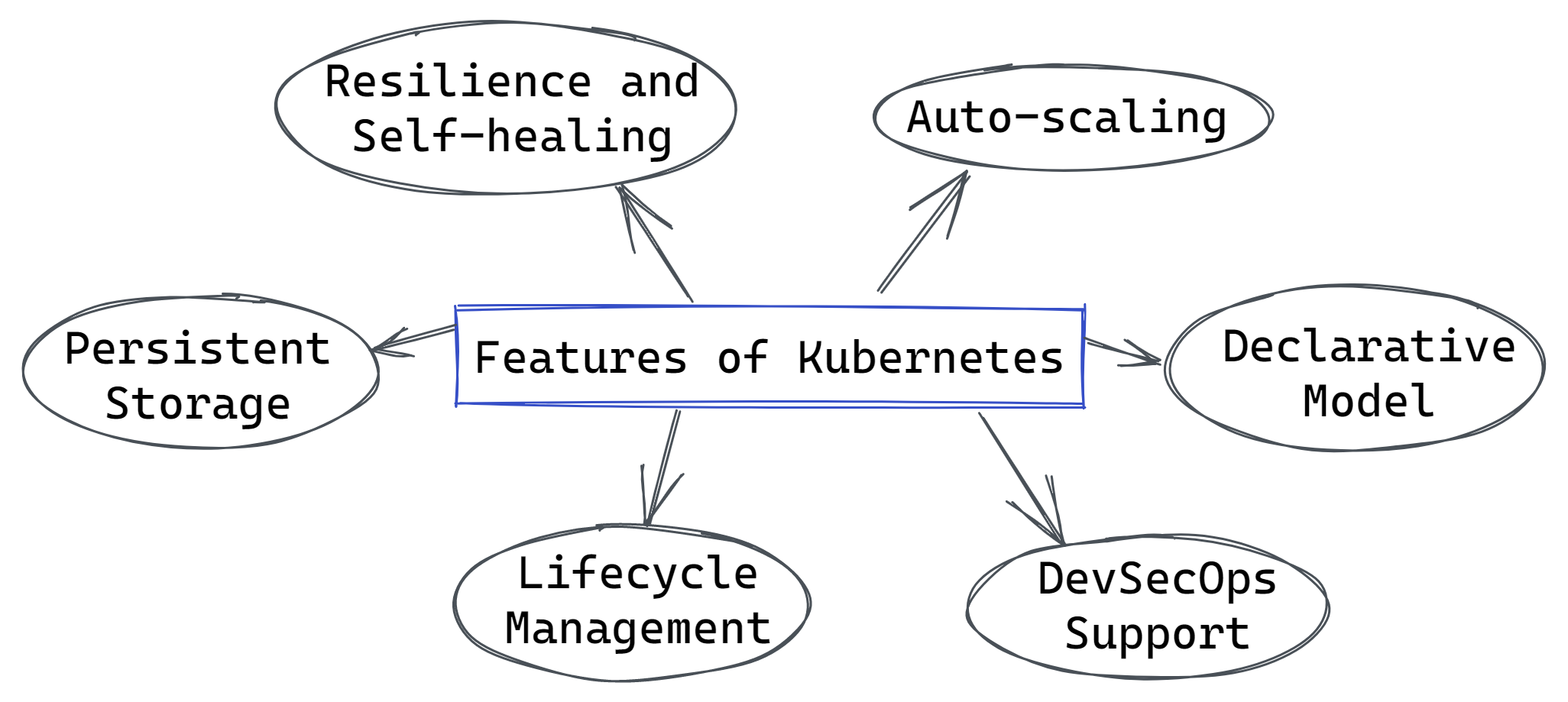

Features of Kubernetes

Kubernetes offers numerous features that make it possible to manage clusters automatically, orchestrate containers across multiple hosts, and optimize resource usage by making better use of infrastructure.

Some main features are:

- Auto-scaling

Automatically adjust the resources and utilization of containerized apps.

- Declarative Model

Declare the desired state, and K8s will work in the background to maintain it and recover from any errors.

- DevSecOps Support

DevSecOps is an advanced security approach that integrates security across the container lifecycle, automates container operations across clouds, and helps teams deliver secure, high-quality software more quickly.

- Lifecycle Management

Automate updates and deployments, with the ability to roll back to previous versions and to pause and resume a deployment.

- Persistent Storage

The ability to mount and add storage dynamically.

- Resilience and Self-healing

Applications self-heal through auto-placement, auto-restart, auto-replication, and auto-scaling.
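As an illustration of the declarative model listed above, the following Python sketch builds a Deployment manifest as a plain dictionary and prints it as JSON. The helper name `build_deployment`, the image reference, and the resource requests are placeholders chosen for this example; only the field names (`apiVersion`, `kind`, `spec.replicas`, and so on) come from the Kubernetes `apps/v1` Deployment schema.

```python
import json


def build_deployment(name, image, replicas):
    """Return a Deployment manifest that declares a desired state."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            # Desired state: how many copies of the pod should exist.
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            # Placeholder resource requests for the sketch.
                            "resources": {
                                "requests": {"cpu": "100m", "memory": "128Mi"}
                            },
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = build_deployment("web", "registry.example.com/web:1.0", 3)
    print(json.dumps(manifest, indent=2))
```

The manifest says what should exist (three replicas of one container), not how to get there. Changing `replicas` and submitting the manifest again is, in principle, all that is needed to scale.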

How Does Kubernetes Work?

Organizations need management and automation because running so many containers across services and environments is complex. Kubernetes controls where and how containers run through an open API. Automating resource provisioning makes scaling simpler.

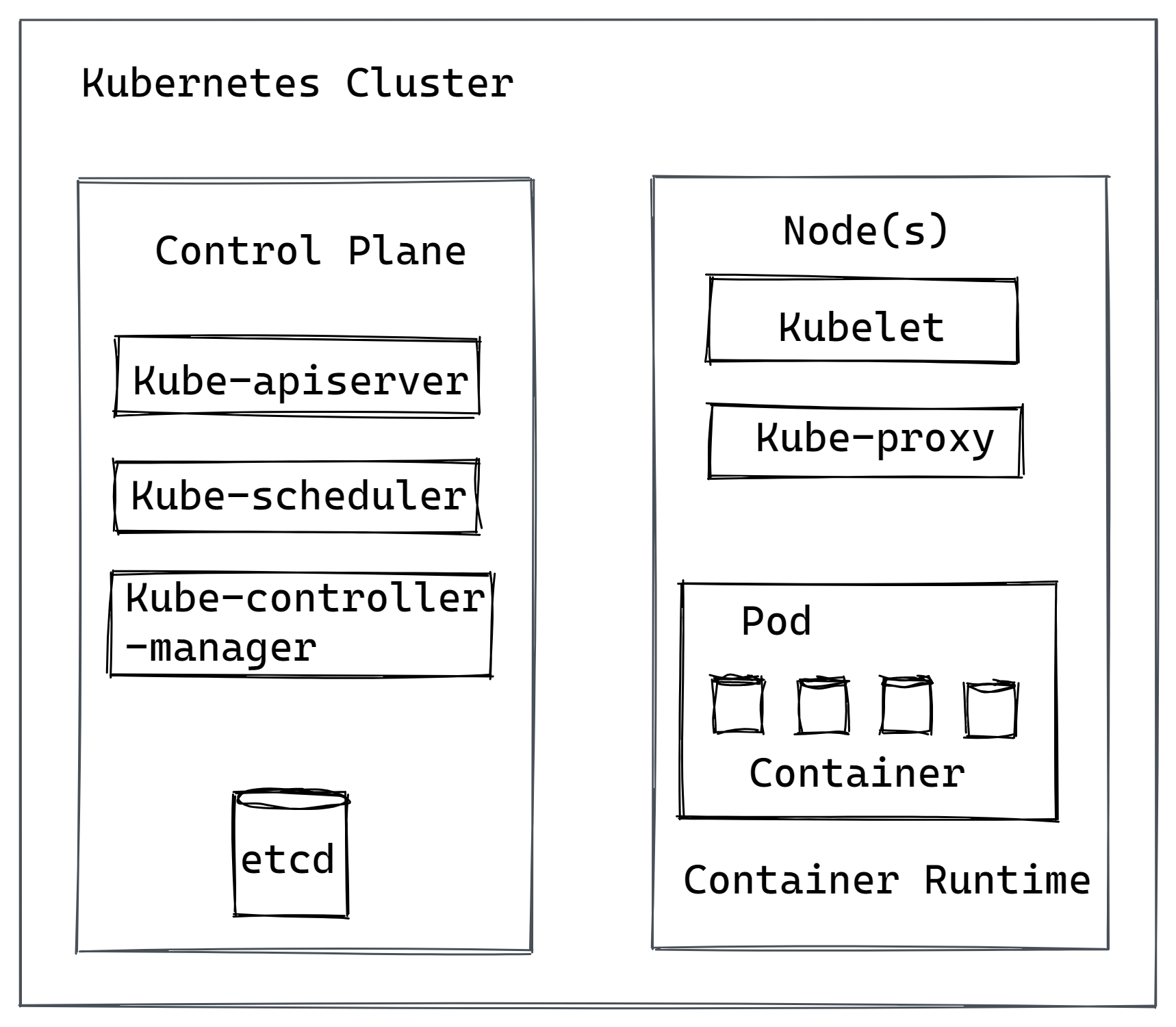

Kubernetes runs on top of an operating system such as Linux or Windows. When Kubernetes is installed, it establishes a cluster, which at its most basic consists of a manager called the control plane and a set of worker machines called nodes.

The machines in this cluster may be virtual, physical, or a mix of both, and they communicate with one another over a shared network. Every Kubernetes component and capability lives within the cluster.

Based on the requirements of each container and the available computing resources, Kubernetes manages the cluster and schedules containers to run as efficiently as possible. Orchestration is the process of managing these clusters and the storage they require.

The following key terms will help you understand Kubernetes' layers:

- Control Plane

The control plane, or orchestration layer, is a set of control processes that coordinates communication across a Kubernetes cluster. At its center is the Kubernetes API server, which lets developers automate resource provisioning and management. All task assignments, including workload allocation, scheduling, and the starting of applications, come from the control plane. In high-availability clusters, the control plane can run across multiple main nodes rather than just one.

- Pods

A pod is the smallest deployable unit in Kubernetes. It consists of one or more containers, which share a single IP address that belongs to the pod. Pods run on nodes.

- Nodes

Nodes are worker machines that carry out tasks as directed by the control plane. Each node needs a kubelet, a communication process that lets the control plane manage the node, and a container runtime, such as containerd or CRI-O, that pulls container images and runs the application. A node can host one or more pods.

- Cluster

A Kubernetes cluster is made up of a control plane and a collection of nodes that run containerized applications.

- Services

Applications must be made accessible before anyone can use them. When an application needs a capability that another application provides, developers expose that capability as a service and attach metadata in the form of key-value pairs known as labels and annotations. A service's selector uses the labels to connect the service to a set of pods. The result is a loosely coupled form of service discovery in which finding a required service is automated.
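The way labels tie a service to its pods can be sketched in a few lines of Python. This toy models only equality-based selectors, where every key-value pair in the selector must appear in the pod's labels. The function names, pod names, and label values are invented for the example.

```python
def matches(selector, labels):
    """True if every key-value pair in the selector is present in labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select_pods(selector, pods):
    """Return the names of the pods that a service selector would pick."""
    return [pod["name"] for pod in pods if matches(selector, pod["labels"])]


if __name__ == "__main__":
    # Hypothetical pods with their labels.
    pods = [
        {"name": "web-1", "labels": {"app": "web", "tier": "frontend"}},
        {"name": "web-2", "labels": {"app": "web", "tier": "frontend"}},
        {"name": "db-1", "labels": {"app": "db", "tier": "backend"}},
    ]
    print(select_pods({"app": "web"}, pods))
```

Because the service looks pods up by label rather than by name or address, pods can be replaced freely without the service definition changing.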

A Kubernetes cluster's control plane consists of four main parts that regulate communications, manage nodes, and monitor the cluster state.

- Kube-apiserver

As its name suggests, the kube-apiserver exposes the Kubernetes API.

- etcd

A key-value store that houses all information about the Kubernetes cluster.

- Kube-scheduler

Watches for newly created Kubernetes Pods that have no assigned node and, based on resources, policies, and "affinity" specifications, assigns each one to a node for execution.

- Kube-controller-manager

A single binary, kube-controller-manager, that contains every controller function of the control plane.
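The kube-scheduler's job can be pictured as a filtering step followed by a scoring step. The Python sketch below is a toy under stated assumptions, not the real scheduler: the dictionary fields, the node names, and the "most CPU left over" scoring rule are all invented for illustration, and policies and affinity are ignored.

```python
def schedule(pod, nodes):
    """Pick a node name for the pod, or return None if nothing fits."""
    # Filtering: keep only nodes with enough free CPU and memory.
    feasible = [
        node for node in nodes
        if node["free_cpu_m"] >= pod["cpu_m"]
        and node["free_mem_mi"] >= pod["mem_mi"]
    ]
    if not feasible:
        # An unschedulable pod stays pending in this toy model.
        return None
    # Scoring (assumed rule): prefer the node with the most CPU left over.
    best = max(feasible, key=lambda node: node["free_cpu_m"] - pod["cpu_m"])
    return best["name"]


if __name__ == "__main__":
    # Hypothetical nodes; CPU in millicores, memory in MiB.
    nodes = [
        {"name": "node-a", "free_cpu_m": 500, "free_mem_mi": 1024},
        {"name": "node-b", "free_cpu_m": 2000, "free_mem_mi": 512},
        {"name": "node-c", "free_cpu_m": 1500, "free_mem_mi": 4096},
    ]
    print(schedule({"cpu_m": 250, "mem_mi": 1024}, nodes))
```

Here `node-b` has the most free CPU but is filtered out for lack of memory, so the choice falls between `node-a` and `node-c`.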

There are three main parts to a K8s node:

- Kubelet

An agent that runs on each node and checks that the containers required by its pods are running.

- Kube-proxy

A network proxy that runs on each node in the cluster, maintains network rules, and enables communication to pods.

- Container Runtime

The software responsible for running containers. Kubernetes supports any runtime that complies with the Kubernetes CRI (Container Runtime Interface).

In addition, you should be familiar with the following terms:

- Kubernetes Service

A Kubernetes service is a group of pods that all perform the same function. Each service is given a unique address that stays the same even as pod instances come and go.

- Controller

Controllers make sure that the cluster's actual running state matches the desired state as closely as possible.

- Operator

Kubernetes Operators let you capture domain-specific knowledge for an application, much like a runbook. By automating application-specific tasks, Operators make it simpler to deploy and manage applications on K8s.
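What a controller does can be reduced to one comparison: look at the desired state, look at the actual state, and work out what has to change. The Python sketch below shows a single pass of such a reconciliation for a replica count. The function name, the action tuples, and the pod names are assumptions made for this example; real controllers watch the API server and act continuously.

```python
def reconcile(desired_replicas, running_pods):
    """Return the actions that move the actual state toward the desired one."""
    difference = desired_replicas - len(running_pods)
    if difference > 0:
        # Too few pods: ask for the missing ones to be created.
        return [("create", None)] * difference
    if difference < 0:
        # Too many pods: remove the surplus beyond the desired count.
        return [("delete", name) for name in running_pods[desired_replicas:]]
    # Actual state already matches the desired state.
    return []


if __name__ == "__main__":
    # One of three pods has crashed, so one replacement is requested.
    print(reconcile(3, ["web-1", "web-2"]))
    # The desired count was lowered to one, so two pods are removed.
    print(reconcile(1, ["web-1", "web-2", "web-3"]))
```

Run repeatedly, the same comparison covers self-healing (a crashed pod is replaced) and scaling (a changed desired count is applied). An Operator applies this pattern to application-specific steps.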

Benefits of Kubernetes

The Kubernetes platform has gained popularity because it offers a variety of significant benefits, including:

- API-based

A REST API is the backbone of Kubernetes. Every aspect of the Kubernetes system can be managed programmatically.

- Cost Efficiency

Kubernetes' built-in resource optimization, automated scaling, and flexibility to run workloads where they add the most value help keep your IT spending under control.

- Integration and Extensibility

Kubernetes can integrate with the tools you already use, such as logging, monitoring, and alerting systems. The Kubernetes community is building a rich, fast-growing ecosystem of open-source solutions that complement Kubernetes.

- Portability

Containers can be moved between many environments, including virtual environments and bare metal. Because all of the major public clouds support Kubernetes, you can use K8s to run containerized apps in a variety of settings.

- Scalability

Cloud-native applications are built to scale horizontally. Kubernetes uses "auto-scaling" to create more container instances automatically in response to demand.

- Simplified CI/CD

CI/CD is a DevOps practice that automates the building, testing, and deployment of applications to production environments. Businesses are integrating Kubernetes with CI/CD to build scalable, load-responsive pipelines.
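To illustrate the "API-based" point from the list above: every namespaced Kubernetes resource is reachable at a predictable REST path. The helper below is a hypothetical function written for this article, not part of any client library. It assembles such paths following the documented convention that the core group lives under `/api` and named groups live under `/apis`.

```python
def resource_path(group, version, namespace, resource, name=None):
    """Build the REST path for a namespaced Kubernetes resource."""
    # The core group is written as an empty string and uses the /api prefix.
    if group == "":
        prefix = f"/api/{version}"
    else:
        prefix = f"/apis/{group}/{version}"
    path = f"{prefix}/namespaces/{namespace}/{resource}"
    # Append the object name to address a single object instead of a list.
    return f"{path}/{name}" if name else path


if __name__ == "__main__":
    # List pods in the "default" namespace (core group).
    print(resource_path("", "v1", "default", "pods"))
    # Address one Deployment named "web" (apps group).
    print(resource_path("apps", "v1", "default", "deployments", "web"))
```

Tools such as `kubectl`, dashboards, and CI/CD pipelines ultimately talk to the cluster through paths like these, which is why so much of Kubernetes can be scripted.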

Conclusion

Learning Kubernetes has several advantages because it relieves developers of many deployment-related burdens. It enables intelligent resource use, auto-scaling, and minimal maintenance downtime.

Without K8s, these activities would consume a great deal of time and money; with K8s, much of that work can be automated.
