A Comprehensive Guide to MeiliSearch

MeiliSearch is a RESTful search API. It aspires to be a ready-to-use solution for everyone who wants to provide their end-users with a quick and relevant search experience.

Building an effective search engine can require a large investment of time and money, so custom search solutions tailored to specific requirements are often available only to businesses with the financial resources to build them.

Small-to-medium-sized businesses frequently settle for inadequate search engines, which incur hidden costs in user experience and retention because searches fail to surface what users are looking for.

Why MeiliSearch? It is an open-source solution that anybody can use, designed to suit a wide range of requirements. Installation requires very little configuration, yet it is highly customizable, and it provides an instant search experience with features such as typo tolerance, filters, custom ranking rules, and more.

We will go over the following:

- MeiliSearch’s Source Code

- MeiliSearch Core Concepts

- Features of MeiliSearch

- MeiliSearch Installation

- MeiliSearch Project Setup

- Data Set for Testing Purposes

- Creating the Blogs Index

- Uploading a Dataset in MeiliSearch

- Create an Index and Upload Some Documents

- Search for Documents

- How to Modify MeiliSearch Ranking Rules

- Frontend Integration

- Elasticsearch vs. MeiliSearch

- Benefits of MeiliSearch

- Limitations of MeiliSearch

#1 MeiliSearch’s Source Code

The first thing that caught my eye was the size of the MeiliSearch codebase: 7,600 lines of Rust, excluding unit tests.

Competing products are far larger, although they often have more features. Elasticsearch has almost 2 million lines of Java; Apache Solr, which builds on Apache Lucene, has about 1.3 million; Groonga has 600K+ lines of C; Manticore Search has 150K lines of C++; Sphinx has 100K lines of C++; Typesense has 50K lines of C++ including headers; and Tantivy, which is also written in Rust, has 40K lines of code.

Bleve is a text-indexing library for Go with 83K+ lines of code and no client or server interfaces. However, SRCHX, a separate search engine that can be used alongside it, is only a few hundred lines of Go.

One reason the codebase seems so small is that separate concerns live in distinct repositories: the milli indexer has 17K lines of Rust, the tokenizer has 1,200 lines, and the WebUI dashboard has 3.5K lines of React.

The developers have also gone out of their way to avoid reinventing the wheel by relying on third-party libraries: heed to wrap LMDB, Tokio for networking, Actix as the web framework, futures for streaming, and parking_lot for locking and synchronization.

Before settling on LMDB, the team explored Sled and RocksDB as alternatives for the embedded database backend. For this use case, they cited LMDB as having the best combination of performance and stability.

Given Rust's vast ecosystem of libraries, concise syntax, and ability to produce performant binaries, the decision to use it appears to have paid off.

#2 MeiliSearch Core Concepts

These are the core concepts of MeiliSearch:

#1 Documents

A document is a collection of fields that make up an object. Each field is made up of an attribute and its value.

Documents are the core building blocks of a MeiliSearch database, serving as containers for organizing data. A document must first be added to an index before it can be searched.

#2 Indexes

An index is a collection of documents that has its own set of parameters. In SQL, it's analogous to a table, and in MongoDB, it's comparable to a collection.

An index is defined by a unique identifier (uid) and contains the following data:

- One primary key

- Settings such as ranking rules, synonyms, stop words, and field properties, which start with default values that can be changed as needed
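To make the relationship between an index, its primary key, and its documents concrete, here is a toy in-memory model in JavaScript. It is a sketch for illustration only, not how MeiliSearch actually stores data: the class name ToyIndex is hypothetical, and the primary-key inference (first attribute whose name contains "id") is a simplified assumption about MeiliSearch's behavior.

```javascript
// Toy, in-memory model of an index (hypothetical; NOT MeiliSearch's storage
// format): a uid, a primary key, default settings, and the documents.
class ToyIndex {
  constructor(uid, primaryKey = null) {
    this.uid = uid;
    this.primaryKey = primaryKey;
    // Default settings that could later be overridden.
    this.settings = {
      rankingRules: ['typo', 'words', 'proximity', 'attribute', 'wordsPosition', 'exactness'],
      synonyms: {},
      stopWords: [],
    };
    this.documents = new Map(); // primary-key value -> document
  }

  addDocuments(docs) {
    for (const doc of docs) {
      if (this.primaryKey === null) {
        // Simplified inference (assumption): first attribute whose name contains "id".
        const guess = Object.keys(doc).find((key) => key.toLowerCase().includes('id'));
        if (guess === undefined) throw new Error('cannot infer a primary key');
        this.primaryKey = guess;
      }
      const value = doc[this.primaryKey];
      if (value === undefined) throw new Error(`document is missing "${this.primaryKey}"`);
      // A document whose primary-key value already exists replaces the old one.
      this.documents.set(String(value), doc);
    }
  }
}
```

The Map keyed by primary-key value is what makes the key "unique across the index": adding a document with an existing key overwrites rather than duplicates.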

#3 Relevancy

Relevancy refers to how accurate and effective search results are: results are relevant when they consistently match what the user is looking for.

MeiliSearch provides several features for fine-tuning the relevancy of your search results, and ranking rules are the most important of them.

#3 Features of MeiliSearch

MeiliSearch comes with all of its features built in, and they can easily be customized. Here are a few you might want to try:

- Search as You Type: also known as "quick search". Results appear while you're still typing your query and update in real time as you type more text into the search box.

- Filters: MeiliSearch lets you create filters that narrow down search results based on user-defined criteria.

- Faceting: faceted search helps you categorize search results and build user-friendly navigation interfaces.

- Sorting: users can choose which results they want to see first by sorting search results at query time.

- Ultra Relevant: the default relevancy rules are meant to provide a good search experience with minimal setup, and they can be tweaked to produce the best possible results for your dataset.

- Typo Tolerant: instead of letting typos ruin a search, MeiliSearch still finds the results you're looking for.

- Synonyms: synonyms allow you to create a more personalized and intuitive search experience.

- Highlighting: query terms are highlighted in the results so matches stand out, and users don't have to read an entire paragraph to locate a match.

- Placeholder Search: if you search without any query words, MeiliSearch returns all the documents in the index, sorted by its custom ranking rules.

- Phrase Search: if you put double quotes (") around your search terms, MeiliSearch only returns documents that contain those terms together, in the order you specified, so users can make more precise searches.
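Typo tolerance is usually built on edit distance. The sketch below computes the Levenshtein distance between a query word and an indexed word and accepts the match if the distance fits a length-based budget. The thresholds (no typos below 5 characters, one from 5, two from 9) are assumed defaults for illustration, and MeiliSearch's real implementation is considerably more elaborate than this.

```javascript
// Classic dynamic-programming Levenshtein distance: insertions, deletions,
// and substitutions each cost 1. Keeps only one previous row in memory.
function levenshtein(a, b) {
  let prev = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    const curr = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      curr[j] = Math.min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost);
    }
    prev = curr;
  }
  return prev[b.length];
}

// Assumed typo budget: short words must match exactly.
function allowedTypos(word) {
  if (word.length >= 9) return 2;
  if (word.length >= 5) return 1;
  return 0;
}

// True if the indexed word is within the typo budget of the query word.
function typoMatch(queryWord, indexedWord) {
  const distance = levenshtein(queryWord.toLowerCase(), indexedWord.toLowerCase());
  return distance <= allowedTypos(queryWord);
}
```

With these thresholds, "batmn" still finds "batman" (one missing letter), while a four-letter word such as "drak" must be spelled exactly.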

#4 MeiliSearch Installation

Make sure Node.js is installed and working. A tool like cURL for sending API requests is also useful.

Learn more about cURL commands.

After that, we'll need to interact with a MeiliSearch instance. There are various options for running a MeiliSearch instance:

- Create a temporary instance that lasts 72 hours with the MeiliSearch sandbox

- Create an instance on a DigitalOcean droplet

- Run MeiliSearch with Docker

- Install it directly: the MeiliSearch documentation lists installation options for Debian, Ubuntu, other Linux distributions, and macOS

To secure our instance, we need a master key that protects the API endpoints of the MeiliSearch instance. The MeiliSearch sandbox provides one by default; for the DigitalOcean, Docker, and direct installation options, you must specify a master key yourself.

To test your installation, send the following request to list all indexes. A new installation has no indexes, so the response is an empty array ([]).

Replace 127.0.0.1 with your own server's address. For DigitalOcean and MeiliSearch sandbox installations, you don't need to add the :7700 port number.

curl http://127.0.0.1:7700/indexes --header 'X-Meili-API-Key: your-master-key'
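If you prefer to run the same check from Node.js, the sketch below builds the request and sends it with the fetch API built into Node.js 18+. The helper names buildListIndexesRequest and fetchIndexes are hypothetical; the X-Meili-API-Key header matches the MeiliSearch version used in this guide, whereas newer releases expect an "Authorization: Bearer <key>" header instead.

```javascript
// Builds the same "list all indexes" request as the curl command above.
// Header name matches the older MeiliSearch API used throughout this guide.
function buildListIndexesRequest(host, masterKey) {
  const url = new URL('/indexes', host).toString();
  const headers = {};
  if (masterKey) headers['X-Meili-API-Key'] = masterKey;
  return { url, options: { method: 'GET', headers } };
}

// Sends the request with Node's built-in fetch (Node.js 18+).
async function fetchIndexes(host, masterKey) {
  const { url, options } = buildListIndexesRequest(host, masterKey);
  const response = await fetch(url, options);
  if (!response.ok) throw new Error(`MeiliSearch responded with HTTP ${response.status}`);
  return response.json(); // an empty array on a fresh installation
}
```

Usage: `fetchIndexes('http://127.0.0.1:7700', 'your-master-key').then(console.log)`. Keeping the request-building step separate makes it easy to check the URL and headers without a running server.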

Let's go on to setting up the project.

#5 MeiliSearch Project Setup

To begin, use npm to create a new project:

npm init -y

Then add the meilisearch-js dependency:

npm install meilisearch

Finally, create a file called index.js that will contain all of our code. Make sure this file is located in the root directory of your newly created project.

touch index.js

#6 Data Set for Testing Purposes

Testing a search engine with a small dataset is pointless, given that today's hardware can easily keep datasets of a few hundred megabytes in memory. So we took a different route and looked for a dataset that is primarily text and can be used in real-world scenarios.

My first choice was the Cornell University arXiv dataset on Kaggle. According to Wikipedia, arXiv is an open-access repository of electronic preprints (also known as e-prints) that are moderated but not fully peer-reviewed before being posted.

It contains publications in astronomy, mathematics, physics, electrical engineering, computer science, statistics, mathematical finance, quantitative biology, and economics, all accessible online.

The Kaggle dataset mirrors the entire arXiv: it is an index of publications with metadata such as author, title, category, and a short excerpt. The dataset is in JSON format and weighs around 2.7 GB; all you have to do is download and unzip it.

The dataset can be downloaded from GitHub.
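A 2.7 GB file is too large to upload in a single request, and MeiliSearch limits the size of a request payload (the limit is configurable), so a common approach is to stream the file and upload it in batches. The sketch below assumes the file is in JSON Lines format (one JSON object per line), which is worth checking after unzipping. The function names and batch size are hypothetical, and the upload of each batch (a POST to the index's documents route) is left to you.

```javascript
const fs = require('fs');
const readline = require('readline');

// Groups JSON Lines input (one JSON object per line) into arrays of at most
// `batchSize` documents. `lines` may be any sync or async iterable of strings.
async function* jsonLinesToBatches(lines, batchSize) {
  let batch = [];
  for await (const line of lines) {
    if (line.trim() === '') continue; // skip blank lines
    batch.push(JSON.parse(line));
    if (batch.length === batchSize) {
      yield batch;
      batch = [];
    }
  }
  if (batch.length > 0) yield batch; // flush the remainder
}

// Streams a large file line by line instead of loading it all into memory.
function readJsonLines(path) {
  return readline.createInterface({
    input: fs.createReadStream(path, { encoding: 'utf8' }),
    crlfDelay: Infinity, // treat \r\n as a single line break
  });
}
```

Inside an async function, `for await (const batch of jsonLinesToBatches(readJsonLines('arxiv.json'), 10000)) { /* upload batch */ }` keeps memory use bounded by the batch size rather than the file size; the file name arxiv.json is a placeholder.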

#7 Creating the Blogs Index

Next, we'll create a blogs index and add our blogs.json data to it so that we can search and update it later.

To interact with a MeiliSearch instance, we must require the meilisearch package at the top of our index.js file:

const MeiliSearch = require('meilisearch')

We'll wrap our code in an async main function so we can use the async/await syntax, and we'll update this function throughout the tutorial.

We must first establish a connection with the MeiliSearch instance before we can interact with it.

const main = async () => {
  // Connect to the MeiliSearch instance; apiKey is the master key you configured
  const client = new MeiliSearch({
    host: 'https://sandbox-pool-123.ovh-fr-2.platformsh.site',
    apiKey: 'your-master-key'
  })
}

main().catch(console.error)

Let's start by making an index. The client object exposes all the methods for communicating with our MeiliSearch instance's API.

const main = async () => {
  const client = new MeiliSearch({
    host: 'https://sandbox-pool-123.ovh-fr-2.platformsh.site',
    apiKey: 'your-master-key'
  })

  // Create an index named 'blogs'
  await client.createIndex('blogs')
}

main().catch(console.error)

Run the index.js file to create the index:

node index.js

For brevity, we won't repeat all of the code from here on; the snippets that follow go inside the main function.

Let's verify that the blogs index was created by listing all indexes:

const indexes = await client.listIndexes()
console.log(indexes)

The output looks like this:

[{
  name: 'blogs',
  uid: 'blogs',
  createdAt: '2020-12-04T17:27:43.446411126Z',
  updatedAt: '2020-12-04T17:51:52.758550969Z',
  primaryKey: null
}]

The blogs index has not been assigned a primary key yet. Because our dataset contains an id field, MeiliSearch will select it as the primary key automatically when we add data in the next step.

#8 Uploading a Dataset in MeiliSearch

The easiest way to upload a large dataset to your MeiliSearch server is with a tool like cURL. Run the following command in the same directory as the blogs.json dataset, and make sure the data is uploaded to the correct index: /indexes/blogs/. If you've configured a master key, include it again.

curl -i -X POST 'https://meilisearch-sandbox.site/indexes/blogs/documents' \
  --header 'content-type: application/json' \
  --header 'X-Meili-API-Key: your-master-key' \
  --data-binary @blogs.json

Let's list our indexes again to check that the data was uploaded correctly. This time, the id attribute should appear in the primaryKey field.

node index.js

#9 Create an Index and Upload Some Documents

Now let's create another searchable index. If you need a sample dataset, you can use this movie database:

curl -L 'https://bit.ly/2PAcw9l' -o movies.json

MeiliSearch can handle several indexes containing different types of documents. Before sending documents, you must create an index:

curl -i -X POST 'http://127.0.0.1:7700/indexes' \
  --header 'content-type: application/json' \
  --data '{ "name": "Movies", "uid": "movies" }'

Now that the server knows about your new index, you're ready to send it data:

curl -i -X POST 'http://127.0.0.1:7700/indexes/movies/documents' \
  --header 'content-type: application/json' \
  --data-binary @movies.json

If you set a master key, add --header 'X-Meili-API-Key: your-master-key' to these commands as well.

#10 Search for Documents

In the Command Line

The search engine now knows about your documents and can serve them over HTTP.

The jq command-line tool makes the server's JSON responses much easier to read:

curl 'http://127.0.0.1:7700/indexes/movies/search?q=dark+knight&limit=3' | jq

The results look like this:

{
  "hits": [
    {
      "id": "165",
      "title": "Batman Begins",
      "poster": "https://m.media-amazon.com/images/M/MV5BOTY4YjI2N2MtYmFlMC00ZjcyLTg3YjEtMDQyM2ZjYzQ5YWFkXkEyXkFqcGdeQXVyMTQxNzMzNDI@._V1_.jpg",
      "overview": "After witnessing his parents' death, Bruce learns the art of fighting to confront injustice. When he returns to Gotham as Batman...",
      "release_date": "2005-06-17"
    },
    {
      "id": "232",
      "title": "The Dark Knight",
      "poster": "https://m.media-amazon.com/images/M/MV5BMTMxNTMwODM0NF5BMl5BanBnXkFtZTcwODAyMTk2Mw@@._V1_.jpg",
      "overview": "After Gordon, Dent and Batman begin an assault on Gotham's organized crime, the mobs hire the Joker, a psychopathic criminal mastermind...",
      "release_date": "2008-07-18"
    },
    {
      "id": "24445",
      "title": "The Dark Knight Rises",
      "poster": "https://m.media-amazon.com/images/M/MV5BMTk4ODQzNDY3Ml5BMl5BanBnXkFtZTcwODA0NTM4Nw@@._V1_FMjpg_UX1000_.jpg",
      "overview": "Bane, an imposing terrorist, attacks Gotham City and disrupts its eight-year-long period of peace. This forces Bruce Wayne...",
      "release_date": "2012-07-20"
    }
  ],
  "offset": 0,
  "limit": 3,
  "processingTimeMs": 1,
  "query": "dark knight"
}

Use the Web Interface

MeiliSearch also provides an out-of-the-box web interface for testing searches interactively.

To open it, go to the root of the server in your web browser; the default URL is http://127.0.0.1:7700. This takes you to a page with a search bar where you can search the index you've chosen.

The returned result has the following properties:

- hits – the items that are relevant to the search query

- nbHits – the total number of matching items

- offset – the number of results skipped

- limit – the maximum number of results returned

- processingTimeMs – the time it took to process the search, in milliseconds

- query – the search query we sent to MeiliSearch
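The same search can be issued from Node.js. The sketch below builds the search URL used in the curl example (q, limit, and offset as query parameters) and picks out a few of the response properties listed above. The helper names buildSearchUrl, summarizeSearch, and searchIndex are hypothetical, and the X-Meili-API-Key header assumes the older MeiliSearch API used in this guide.

```javascript
// Builds a GET search URL such as /indexes/movies/search?q=dark+knight&limit=3
function buildSearchUrl(host, indexUid, query, { limit, offset } = {}) {
  const url = new URL(`/indexes/${encodeURIComponent(indexUid)}/search`, host);
  url.searchParams.set('q', query); // URLSearchParams encodes spaces as "+"
  if (limit !== undefined) url.searchParams.set('limit', String(limit));
  if (offset !== undefined) url.searchParams.set('offset', String(offset));
  return url.toString();
}

// Extracts a few of the response properties described above.
function summarizeSearch(response) {
  return {
    titles: response.hits.map((hit) => hit.title),
    hitCount: response.hits.length,
    processingTimeMs: response.processingTimeMs,
    query: response.query,
  };
}

// Sends the search with Node's built-in fetch (Node.js 18+).
async function searchIndex(host, indexUid, query, options, apiKey) {
  const headers = apiKey ? { 'X-Meili-API-Key': apiKey } : {};
  const response = await fetch(buildSearchUrl(host, indexUid, query, options), { headers });
  if (!response.ok) throw new Error(`search failed with HTTP ${response.status}`);
  return summarizeSearch(await response.json());
}
```

For example, `buildSearchUrl('http://127.0.0.1:7700', 'movies', 'dark knight', { limit: 3 })` yields the same URL as the curl command.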

#11 How to Modify MeiliSearch Ranking Rules

By default, MeiliSearch applies the ranking rules in the following order:

- typo prioritizes documents that match your query terms with the fewest typos

- words prioritizes documents that match all of your query terms over documents that match only some of them

- proximity prioritizes documents in which your query terms are closer to one another

- attribute prioritizes documents based on which field your query matched. If the attribute order is title, description, and author, matches in the title field carry more weight than matches in the description or author fields

- wordsPosition prioritizes documents in which your search terms appear towards the start of the field

- exactness prioritizes documents that match your query most exactly
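MeiliSearch applies ranking rules one after another: each rule only reorders documents that the previous rules left tied, which is why changing the order of the rules changes the results. The sketch below imitates that behavior with a comparator. The per-document scores are hypothetical, precomputed numbers where lower is better for every rule (for example, number of typos or number of missing query words); MeiliSearch computes the real criteria internally.

```javascript
// Sorts documents by a list of rules; a later rule is consulted only when
// all earlier rules produced a tie (bucket-sort behavior).
function rankDocuments(docs, rules) {
  return [...docs].sort((a, b) => {
    for (const rule of rules) {
      const diff = a.scores[rule] - b.scores[rule]; // lower score ranks first
      if (diff !== 0) return diff;
    }
    return 0; // full tie: Array.prototype.sort is stable, so input order is kept
  });
}

// Hypothetical scores: typo = typos needed, words = query words missing,
// proximity = distance between matched query words.
const docs = [
  { id: 'a', scores: { typo: 1, words: 0, proximity: 1 } },
  { id: 'b', scores: { typo: 0, words: 1, proximity: 3 } },
  { id: 'c', scores: { typo: 0, words: 1, proximity: 1 } },
];

const defaultOrder = rankDocuments(docs, ['typo', 'words', 'proximity']).map((d) => d.id);
// ['c', 'b', 'a']
const wordsFirst = rankDocuments(docs, ['words', 'typo', 'proximity']).map((d) => d.id);
// ['a', 'c', 'b']
```

Moving words ahead of typo promotes document a, which contains every query word but needs one typo to match.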

The getSettings method returns the index settings, including the ranking rules:

const index = client.getIndex('blogs')
const settings = await index.getSettings()
console.log(settings)

The output looks like this:

{
  rankingRules: [
    'typo',
    'words',
    'proximity',
    'attribute',
    'wordsPosition',
    'exactness'
  ],
  distinctAttribute: null,
  searchableAttributes: ['*'],
  displayedAttributes: ['*'],
  stopWords: [],
  synonyms: {},
  attributesForFaceting: []
}

#12 Frontend Integration

To integrate MeiliSearch into a frontend, we can use the Instant MeiliSearch repository, which lets developers add search without reinventing the wheel. It builds on Algolia's open-source InstantSearch library, which includes a lot of predefined behavior that we can tweak to fit our needs. It can save a lot of time, especially when building personalized search experiences.

Alternatively, we can build a fully tailored integration ourselves and keep complete control over it. Because exposing MeiliSearch publicly also exposes it to malicious users, we recommend putting a proxy in front of it, or communicating with it only from the backend, rather than exposing it to the client directly.
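The proxy approach can be sketched as a request filter: the browser talks to your server, and your server forwards only search requests to MeiliSearch, attaching the API key itself so it never reaches the client. The function name, the allow-listed index names, and the choice to forward only GET searches are assumptions for illustration; a real proxy would also send the upstream request (for instance with Node's http module) and relay the response. Ideally the key passed in is a search-only key rather than the master key.

```javascript
// Hypothetical allow-list of indexes the public may search.
const ALLOWED_INDEXES = new Set(['blogs', 'movies']);

// Decides whether an incoming request may be forwarded to MeiliSearch.
// Returns a description of the upstream request, or null to reject it.
function toUpstreamRequest(method, path, apiKey) {
  // The base URL is only needed so that URL can parse a relative path.
  const url = new URL(path, 'http://proxy.invalid');
  const match = /^\/indexes\/([^/]+)\/search$/.exec(url.pathname);
  if (method !== 'GET' || match === null) return null; // no writes, settings, etc.
  if (!ALLOWED_INDEXES.has(decodeURIComponent(match[1]))) return null;
  return {
    method: 'GET',
    path: url.pathname + url.search, // keep the query string (q, limit, ...)
    // The key is added server-side, so the browser never sees it.
    headers: { 'X-Meili-API-Key': apiKey },
  };
}
```

Rejecting by default and allowing only a narrow set of routes keeps document uploads, settings changes, and other indexes out of reach of the public.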

#13 Elasticsearch vs. MeiliSearch

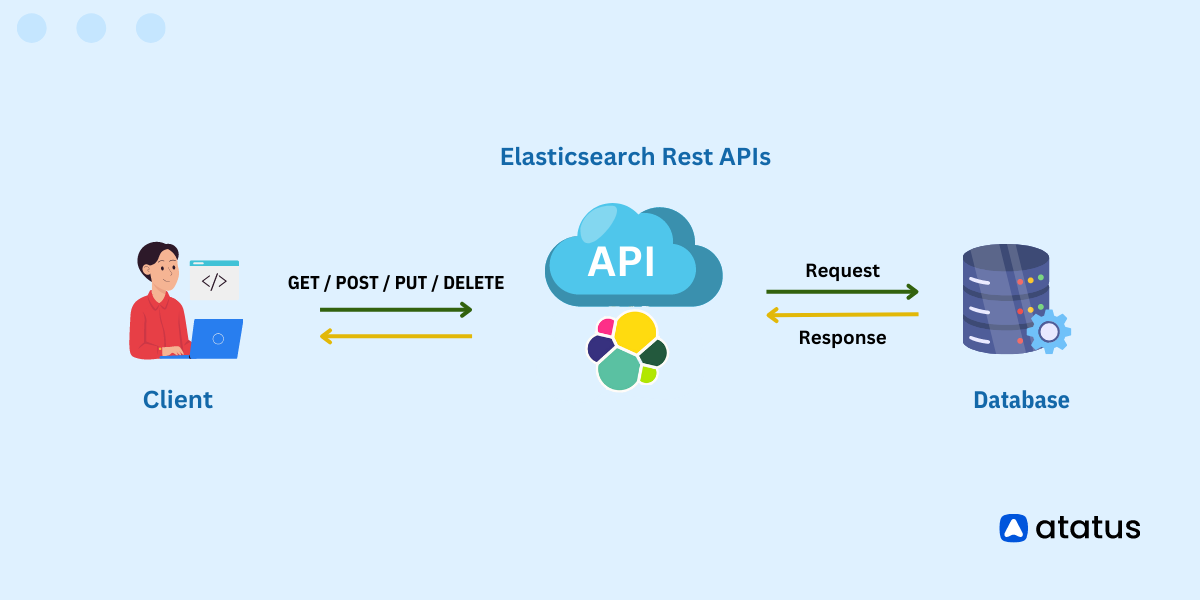

Elasticsearch is a backend search engine that is often used to build search bars for end users, even though it was not originally designed for that purpose. MeiliSearch, by contrast, is built specifically for end-user search and focuses on a narrower set of features.

Elasticsearch can handle large volumes of data and perform text analysis, but to make it useful for end-user search you have to spend time learning how it works internally so you can adapt and tune it to your needs. MeiliSearch is designed to give end users fast, reliable search results; it is not designed to process complicated queries or analyze very large datasets.

If you want to deliver a fully instant search experience, Elasticsearch can sometimes be too slow, and it can be substantially slower than MeiliSearch at returning search results. MeiliSearch is an excellent choice if you need a quick and easy way to set up a typo-tolerant search bar that supports prefix search, is straightforward for users, and delivers fast, highly relevant results.

#14 Benefits of MeiliSearch

Ease of use was a fundamental priority from the outset, and it continues to drive MeiliSearch development today.

Simple and Intuitive

MeiliSearch requires relatively little configuration for developers to get up and running. A RESTful API is used to communicate with the server.

For end users, the search experience should be straightforward so they can concentrate on the results. With response times under 50 milliseconds, MeiliSearch aims to provide an intuitive search-as-you-type experience.

Highly Customizable

MeiliSearch comes with default settings that meet the needs of most projects out of the box, yet search remains highly configurable.

Results are sorted using ranking rules, a sequence of rules applied in order. You can delete existing rules, add new ones, or change the order in which they are applied.

You can also tweak search parameters to narrow down your results further; filters and faceting are both supported.
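To show what filtering and faceting compute, here is a rough in-memory sketch: a filter narrows the hits, and a facet distribution counts how many of the remaining hits carry each value of an attribute (the kind of counts a faceted navigation sidebar displays). The function name, the use of a plain JavaScript predicate instead of MeiliSearch's filter syntax, and the sample movie fields are all assumptions for illustration.

```javascript
// Narrows hits with a filter predicate, then counts, for each requested
// facet attribute, how many of the remaining hits carry each value.
function filterAndFacet(hits, filter, facetNames) {
  const filtered = hits.filter(filter);
  const distribution = {};
  for (const name of facetNames) {
    const counts = {};
    for (const hit of filtered) {
      const raw = hit[name];
      if (raw === undefined || raw === null) continue; // attribute absent
      // Array-valued attributes (several genres, say) count once per value.
      for (const value of Array.isArray(raw) ? raw : [raw]) {
        counts[value] = (counts[value] || 0) + 1;
      }
    }
    distribution[name] = counts;
  }
  return { hits: filtered, distribution };
}
```

For example, filtering hypothetical movies with `(m) => m.year >= 2000` and faceting on genres returns only the recent movies together with a count per genre.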

Front-facing Search

When it comes to creating a wonderful search experience for your end-users, MeiliSearch wants to be your go-to search backend. It's not intended for massive data sets (more than 10 million documents) or industrial applications.

Anti-pattern

MeiliSearch should not be used as your primary data store. Only the data you want your users to search through should be in MeiliSearch, and relevancy tends to degrade as the amount of data grows. Where it is safe to do so, queries should be sent straight from the front end: the more proxies between MeiliSearch and the end user, the slower the queries and, as a result, the search experience will be.
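Keeping only searchable data in MeiliSearch usually means projecting records from your main database before uploading them. A minimal sketch, assuming hypothetical blog fields (id, title, excerpt, tags): copy the fields users should search plus the primary key, and drop everything else.

```javascript
// Hypothetical list of fields worth searching, plus the primary key.
const SEARCH_FIELDS = ['id', 'title', 'excerpt', 'tags'];

// Copies only the searchable fields of a record from your main database,
// leaving out everything users should not search (or see).
function toSearchDocument(record, fields = SEARCH_FIELDS) {
  const doc = {};
  for (const field of fields) {
    if (record[field] !== undefined) doc[field] = record[field];
  }
  return doc;
}
```

An allow-list of fields, rather than a list of fields to remove, means a column added to the database later is not indexed by accident.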

#15 Limitations of MeiliSearch

- The largest dataset MeiliSearch has been formally tested against contains 120 million documents. The software may support more, but this is the largest instance we could find.

- Keep an eye on the database size: it is limited to 100 GB by default, although you can raise this limit with a setting passed at launch.

- There is also a hard-coded limit of 200 indexes, and only the first 1,000 words of each attribute are indexed.

Summary

Every MeiliSearch index needs a primary key: an attribute whose value uniquely identifies each document across the whole index. You can set it explicitly, or let MeiliSearch infer it when documents are first added, as it did for the blogs index. The primary key value can be an integer or a string made up of alphanumeric characters, hyphens (-), and underscores (_).
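Because a document with an invalid primary-key value is rejected, it can be worth checking values before uploading. A minimal sketch of the rule stated above (the function name is hypothetical):

```javascript
// Checks a primary-key value against the rule stated above: an integer, or a
// non-empty string of alphanumeric characters, hyphens, and underscores.
function isValidPrimaryKeyValue(value) {
  if (typeof value === 'number') return Number.isInteger(value);
  if (typeof value === 'string') return /^[A-Za-z0-9_-]+$/.test(value);
  return false; // null, objects, booleans, etc.
}
```

Running every document through this check before a bulk upload surfaces bad identifiers (for example, slugs containing spaces) on your side rather than partway through indexing.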

Another thing to keep in mind is that indexing is currently single-threaded. Storing 88,000 documents takes roughly 800 megabytes of RAM, while the index on disk needs about 2.2 gigabytes. In other words, the data grows about 8x when kept in memory and about 20x when stored on disk.

However, setup time is relatively short, and the engine is responsive immediately, so you can start querying right away.
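The memory and disk figures quoted above can be checked against each other with a little arithmetic. Working backwards from the stated growth factors (taking 2.2 GB as 2,200 MB) suggests a raw dataset of roughly 100 to 110 MB; this is an inference from the quoted numbers, not a size reported in the original measurements:

```latex
\text{raw size} \approx \frac{800\ \text{MB}}{8} = 100\ \text{MB},
\qquad
\text{raw size} \approx \frac{2{,}200\ \text{MB}}{20} = 110\ \text{MB}
```

The two estimates agree to within about 10%, so the 8x and 20x factors are broadly consistent with each other.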

MeiliSearch appears to be quite promising, and we are confident that it has a bright future ahead of it. Developers at MeiliSearch concentrated on blazing fast performance; now they need to focus on features that will attract more users.

Monitor Your Entire Application with Atatus

Atatus provides a set of performance measurement tools to monitor and improve the performance of your frontend, backends, logs, and infrastructure applications in real-time. Our platform can capture millions of performance data points from your applications, allowing you to quickly resolve issues and ensure digital customer experiences.

Atatus can benefit your business by providing a comprehensive view of your application: how it works, where performance bottlenecks exist, which users are most affected, and which errors break your frontend, backend, and infrastructure code.
