Jitter vs Latency – What Are the Differences and Why They Matter

Jitter and latency are characteristics of a traffic flow at the application layer, and both are metrics used to assess a network's performance. The major distinction between them is that latency is the delay a packet experiences crossing the network, whereas jitter is the variation in that delay from one packet to the next.

Increases in jitter and latency have a negative impact on network performance, so it's critical to monitor them regularly. Latency and jitter tend to rise when two communicating devices operate at different speeds, when congestion causes buffers to overflow, and when traffic arrives in bursts.

In this article, we'll go through the differences between jitter and latency in further detail.

- What is Jitter?

- What is Latency?

- Effects of Jitter and Latency on Network

- Causes of Jitter

- How to Reduce Jitter

- Causes of Latency

- Ways to Reduce Latency

- Tools to Monitor Jitter and Latency

What is Jitter?

Jitter is the variation in the delay of data packets as they travel across a network, usually caused by network congestion or, in rare cases, route changes. It is frequently the source of buffering delays in video streaming, and extreme levels of jitter can even result in dropped VoIP calls.

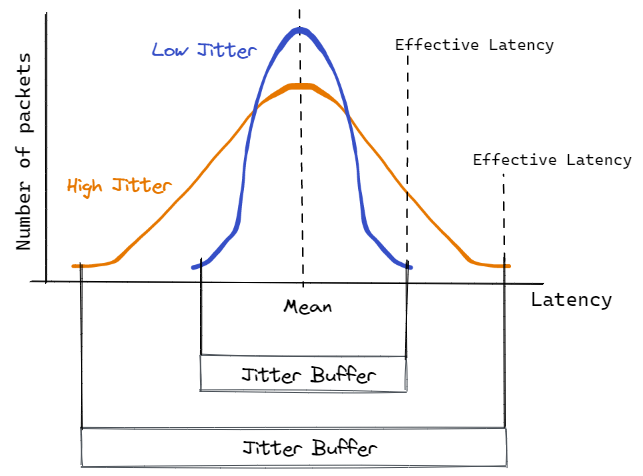

In the first case, all incoming packets have the same delay, so applications like audio and video are not affected. In the second case, however, the delay varies from packet to packet, which is unacceptable for such applications and can also cause packets to arrive out of order. "High jitter" denotes a large variation in delays, whereas "low jitter" denotes a small one.

Some jitter is considered acceptable because it does not noticeably interfere with browsing or viewing. A jitter of 30 milliseconds or less is typically regarded as acceptable because it is barely noticeable, but anything above that starts to degrade both browsing and calls. When downloading files, however, even higher levels of jitter are barely noticeable.
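To make the idea concrete, here is a minimal Python sketch that estimates jitter from a series of latency samples by averaging the absolute change between consecutive samples. This is only one simple way to quantify jitter (RFC 3550, used by RTP, defines a smoothed variant), the sample values are made up, and the 30 ms threshold is the rule of thumb mentioned above.

```python
def jitter_ms(latencies_ms):
    """Mean absolute difference between consecutive latency samples, in ms."""
    if len(latencies_ms) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return sum(diffs) / len(diffs)


def is_acceptable(latencies_ms, threshold_ms=30.0):
    """Apply the ~30 ms rule of thumb for real-time traffic such as calls."""
    return jitter_ms(latencies_ms) <= threshold_ms


# Hypothetical ping results (ms) from two connections
steady = [40, 42, 41, 43, 40]
bursty = [40, 95, 38, 120, 45]
print(jitter_ms(steady), is_acceptable(steady))  # 2.0 True
print(jitter_ms(bursty), is_acceptable(bursty))  # 67.25 False
```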

What is Latency?

The time delay between an action and the response to that action is known as latency. In networking, it usually refers to the round-trip time: the time from when a packet is sent from the source to the destination until the acknowledgment from the destination arrives back at the source.

To give you an example, latency is a large part of the wait between clicking a link and the website beginning to load.

In theory, data traveling at close to the speed of light should arrive almost instantly. In practice, signals in optical fiber travel at roughly two-thirds the speed of light, and infrastructure, equipment, and the distance between the source and destination computers all add delay. The good news is that there are a variety of methods for lowering latency, which, like jitter, can affect your internet experience.

Let's take a look at two related concepts, bandwidth and throughput, which are sometimes confused with latency.

The maximum amount of data that can move through a network at any given time is called bandwidth, whereas the amount of traffic that actually passes through the network is called throughput. Latency, along with other factors such as packet loss and protocol overhead, keeps throughput below bandwidth. Since zero latency is nearly unattainable, throughput in practice is always lower than bandwidth.
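One concrete way latency caps throughput: a single TCP connection can send at most one window of unacknowledged data per round trip, so its throughput cannot exceed window size divided by round-trip time, however much bandwidth the link has. The sketch below assumes a fixed 64 KiB window and ignores window scaling, packet loss, and congestion control, so treat the numbers as rough upper bounds.

```python
def max_tcp_throughput_mbps(window_bytes, rtt_ms):
    """Upper bound for one TCP flow: at most one window of data per round trip."""
    rtt_s = rtt_ms / 1000
    return window_bytes * 8 / rtt_s / 1_000_000


# Same 64 KiB window, two different round-trip times
for rtt_ms in (10, 100):
    print(f"RTT {rtt_ms} ms -> at most {max_tcp_throughput_mbps(65_536, rtt_ms):.2f} Mbps")
```

Raising the round-trip time tenfold cuts the ceiling tenfold, even on a link with plenty of spare bandwidth.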

Effects of Jitter and Latency on Network

Jitter and latency have a variety of effects on a network and, as a result, on the functioning of a business. Some instances are as follows:

- Bad Communication

Latency and jitter in a network can be a huge barrier to a company's communications performance. This is especially true when the data packets being transported must arrive intact for the communicated data to make sense.

VoIP is a case in point. Nothing is more irritating than stuttering voice calls and overlapping conversations. In the worst case, the conversation becomes completely incomprehensible, and the call may even be dropped.

Jitter, which causes packets to arrive late or out of order, is typically to blame.

- Timeouts

Some applications wait a set amount of time for a response from a connection or destination host before closing the connection and displaying a "timeout" message.

If these timeouts occur when mission-critical applications try to connect to a server, for example, they can spell financial disaster for companies that rely on online transactions from their customers.
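The sketch below shows, in Python, what such a timeout looks like from the application's side. It assumes a simple request/response exchange; to keep the example self-contained, it starts a local server that accepts the connection but never replies. The 0.2-second timeout and the `wait_for_reply` helper are illustrative choices, not taken from any particular application.

```python
import socket


def wait_for_reply(sock, timeout_s):
    """Return the server's reply, or None if nothing arrives within timeout_s."""
    sock.settimeout(timeout_s)
    try:
        return sock.recv(1024)
    except socket.timeout:  # an alias of TimeoutError on Python 3.10+
        return None


# Local stand-in for an unresponsive server: it accepts the connection but never replies.
server = socket.create_server(("127.0.0.1", 0))
client = socket.create_connection(server.getsockname())
conn, _ = server.accept()

reply = wait_for_reply(client, timeout_s=0.2)
print("timeout" if reply is None else reply)

for s in (client, conn, server):
    s.close()
```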

- Network Bottlenecks

Jitter can create latency and vice versa.

When packets arrive at irregular intervals, buffers in the connecting hardware fill up while waiting for the rest of the data to arrive. This slows traffic down overall, even for packets that don't require buffering, and so adds latency.

Let's look at the common causes of jitter and latency and various strategies for bringing them down to acceptable levels.

Causes of Jitter

Understanding what causes jitter on a network is the first step toward resolving it. Jitter can be caused by several factors:

- Congestion

Congestion occurs when a network receives an excessive amount of data. This is especially true when the available bandwidth is limited and multiple devices are attempting to send and receive data through it at the same time.

- Hardware Issues

High jitter can be caused by older network equipment, such as Wi-Fi access points, routers, and cables, that was not built to handle large volumes of data.

- Wireless Connections

Jitter can be caused by poorly designed wireless systems, routers with low signal strength, or being too far from the wireless router. In general, wired connections are the best option for key applications like video conferencing and VoIP.

- Lack of Packet Prioritization

Some applications, like VoIP, can be given priority so that their packets are not impacted by network congestion. Without this prioritization, calls are always at risk of being dropped.
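Prioritization is often implemented with priority queuing: when packets are waiting to be sent, higher-priority traffic goes first. The Python sketch below is a simplified model of strict priority queuing, assuming all packets are already queued and using hypothetical traffic classes (`VOICE`, `BULK`) and packet names; real routers and switches configure this through their own QoS settings.

```python
import heapq
from itertools import count

VOICE, BULK = 0, 1  # hypothetical traffic classes; lower number = higher priority


def transmit_order(arrivals):
    """arrivals: (packet_name, priority) pairs in arrival order, all waiting at once."""
    queue, tiebreak = [], count()  # tiebreak keeps FIFO order within a class
    for name, priority in arrivals:
        heapq.heappush(queue, (priority, next(tiebreak), name))
    return [heapq.heappop(queue)[2] for _ in range(len(queue))]


arrivals = [("backup-1", BULK), ("voice-1", VOICE), ("backup-2", BULK), ("voice-2", VOICE)]
print(transmit_order(arrivals))  # ['voice-1', 'voice-2', 'backup-1', 'backup-2']
```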

Now that you know the various causes, let's look at some approaches for fixing network jitter and keeping it within acceptable bounds.

How to Reduce Jitter

The right solution will, in most cases, depend on the problem's root cause. The following are several broad solutions for reducing network jitter.

- Improve Your Internet

One of the most direct ways to combat network jitter is to improve your internet connection. As a general guideline, check that your upload and download speeds are sufficient to handle high-quality VoIP calls. The connection speed you need will depend on how many VoIP calls you make. For example, an office with ten concurrent calls will require far fewer internet resources than an office with 200 concurrent calls.

A good rule of thumb is that for every 1 Mbps of bandwidth, you can sustain 12 calls.

This is just the tip of the iceberg in terms of what to consider for optimal call performance, but it gives you an indication of how much of your time and resources to devote to your internet connection.
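Applying the 12-calls-per-Mbps rule of thumb is simple arithmetic. The sketch below assumes that ratio holds for your codec and leaves no headroom for other traffic, so treat the result as a rough lower bound rather than a sizing guarantee.

```python
CALLS_PER_MBPS = 12  # rule of thumb from this article, not a codec specification


def required_mbps(concurrent_calls):
    """Approximate bandwidth needed for a number of simultaneous VoIP calls."""
    return concurrent_calls / CALLS_PER_MBPS


for calls in (10, 200):
    print(f"{calls} concurrent calls -> about {required_mbps(calls):.2f} Mbps")
```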

- Jitter Buffers

Using a jitter buffer is an effective way to smooth out jitter. Many VoIP providers use this approach today to prevent dropped calls and audio delays.

A jitter buffer purposely delays incoming audio packets so the receiving device has enough time to collect the data packets and put them in order, giving the user a smooth call experience.

Jitter buffers, however, are difficult to size. If the buffer is too small, packets that arrive late are likely to be discarded, lowering overall call quality. On the other hand, a large buffer adds conversational delay and hampers the experience.
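The trade-off can be illustrated with a minimal model of a fixed (static) jitter buffer. It assumes each packet is sent every 20 ms and is scheduled to play a fixed `buffer_ms` after it was sent; anything arriving later than that is discarded. The packet timings are invented for illustration, and production VoIP stacks typically use adaptive buffers that resize themselves.

```python
def playout(packets, buffer_ms, interval_ms=20):
    """Simulate a fixed jitter buffer.

    packets: (seq, arrival_ms) pairs; packet `seq` was sent at seq * interval_ms.
    Returns (played, dropped) sequence numbers, played in sequence order.
    """
    played, dropped = [], []
    for seq, arrival_ms in packets:
        deadline = seq * interval_ms + buffer_ms  # this packet's playout slot
        (played if arrival_ms <= deadline else dropped).append(seq)
    return sorted(played), sorted(dropped)


# Arrival order is 0, 2, 1, 3, 4: packet 1 is delayed and arrives out of order.
packets = [(0, 30), (2, 65), (1, 75), (3, 95), (4, 110)]
print(playout(packets, buffer_ms=40))  # ([0, 2, 3, 4], [1])
print(playout(packets, buffer_ms=60))  # ([0, 1, 2, 3, 4], [])
```

With a 40 ms buffer, the delayed packet 1 misses its playout slot and is dropped; raising the buffer to 60 ms saves it at the cost of 20 ms of extra delay on every packet.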

- Bandwidth Testing

A bandwidth test, in which files are transmitted over the network to a destination and the time the destination computer takes to download them is measured, is a useful technique for figuring out what's causing jitter. It shows the speed at which data is transferred from the source to the destination.
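The calculation behind a bandwidth test is transferred data divided by elapsed time. A minimal sketch, assuming decimal units (1 MB = 1,000,000 bytes, 1 Mbps = 1,000,000 bits per second), a hypothetical test result, and a hypothetical 100 Mbps plan to compare against:

```python
def throughput_mbps(bytes_transferred, seconds):
    """Average transfer rate in megabits per second (decimal units)."""
    return bytes_transferred * 8 / seconds / 1_000_000


# Hypothetical result: a 25 MB test file took 4 seconds to download
measured = throughput_mbps(25_000_000, 4.0)
plan_mbps = 100  # hypothetical subscribed bandwidth
print(f"{measured:.1f} Mbps, {measured / plan_mbps:.0%} of the plan")
```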

You can then pinpoint the issue based on the results. Often it's a combination of excessive bandwidth utilization and outdated cables, so limiting bandwidth usage and replacing cables can help you address the problem. Similarly, scheduling updates outside busy business hours and prioritizing critical tasks during them can help you handle this challenge.

It's worth noting that some VoIP equipment operates at a significantly higher frequency than other equipment, such as 5.8 GHz rather than 2.4 GHz, which can cause jitter on your network.

- Upgrade Network Hardware

Finally, upgrading your network hardware can help you avoid network jitter. The infrastructure that supports your network has a significant impact on its performance, especially if your network contains a lot of old hardware. Though this isn't a cure-all, it can make a significant difference in older networks.

It's a good idea to pay particular attention to components like routers when upgrading your hardware, because they form the backbone of your network's performance. Spending a little more on a high-quality router with extensive QoS (Quality of Service) features can dramatically improve your network performance.

Causes of Latency

Latency is typically described by the ping time and measured in milliseconds. In an ideal world we would have zero latency, but in the real world we have to settle for keeping it to a minimum. Let's look at what causes these delays so we can respond appropriately.
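ICMP ping usually needs elevated privileges to send from a program, so a common stand-in is to time a TCP handshake, which takes roughly one round trip. The Python sketch below does that; the `tcp_connect_ms` helper is hypothetical, and the demo connects to a local listener so it runs offline. Point it at a real host and port to measure actual network latency.

```python
import socket
import time


def tcp_connect_ms(host, port, timeout_s=2.0):
    """Time to complete a TCP handshake, roughly one network round trip, in ms."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout_s):
        pass
    return (time.perf_counter() - start) * 1000


# Offline demo: time connections to a local listener (real use: a remote host and port)
server = socket.create_server(("127.0.0.1", 0))
host, port = server.getsockname()
samples = [tcp_connect_ms(host, port) for _ in range(3)]
print([f"{s:.3f} ms" for s in samples])
server.close()
```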

- Distance Between Source and Destination

The distance between the source and destination computers is one of the most significant causes of latency. If you live in Los Angeles and request information from a server in New York City, for example, the request and response have to cross the entire continent, so the data takes longer to arrive than it would from a nearby server.

Often these delays are so minor that they are barely noticeable. Still, the longer the distance, the more networks the data packets must travel through and the more routers they must hop between. If even one network along the way is overloaded or one router is outdated, the packets slow down. This is why latency is so affected by distance.
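Distance sets a floor on latency that no hardware upgrade can remove. A back-of-the-envelope sketch, assuming signals travel through optical fiber at about 200,000 km/s (roughly two-thirds the speed of light) and using an approximate straight-line distance of 3,900 km between Los Angeles and New York City; real cable routes are longer, so real latency is higher:

```python
FIBER_KM_PER_S = 200_000  # approximate signal speed in optical fiber (about 2/3 of c)


def propagation_ms(distance_km, round_trip=True):
    """Minimum delay imposed by distance alone, ignoring routing detours and queuing."""
    one_way_ms = distance_km / FIBER_KM_PER_S * 1000
    return 2 * one_way_ms if round_trip else one_way_ms


# Approximate straight-line distance from Los Angeles to New York City
print(f"{propagation_ms(3_900):.1f} ms round trip")
```

That is about 39 ms of round-trip time from propagation alone, before any queuing or processing delay is added.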

To address this, service providers keep the same data on servers in multiple cities around the world, allowing them to respond swiftly to requests from anywhere. However, this alone does not fully resolve the issue.

- Type of Data

If distance is one side of the coin, the type of data requested is the other. Text content generally arrives much faster than bandwidth-intensive content like video, because small text payloads can travel swiftly even over congested networks.
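A rough model shows why payload size matters: total time is about one round trip to request the data plus the time to push its bits through the link. The sketch assumes a hypothetical 20 Mbps connection, a 40 ms round trip, and made-up sizes for a text page and a video clip; it ignores TCP slow start and protocol overhead.

```python
def transfer_time_s(size_bytes, bandwidth_mbps, rtt_ms):
    """Rough estimate: one round trip to request the data plus time to send its bits."""
    return rtt_ms / 1000 + size_bytes * 8 / (bandwidth_mbps * 1_000_000)


text_page = 5_000         # hypothetical 5 KB of text
video_clip = 50_000_000   # hypothetical 50 MB video
for label, size in (("text", text_page), ("video", video_clip)):
    print(f"{label}: {transfer_time_s(size, bandwidth_mbps=20, rtt_ms=40):.3f} s")
```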

- End-User Devices

Older end-user devices, with limited CPU and memory, and outdated browsers and operating systems are slower. As a result, if the end user's equipment is outdated, it may limit how much data can be handled at once, affecting the viewing experience.

Beyond these variables, factors such as cables, firewalls, device limits, congested bandwidth, old technology, and incorrect codecs can all add delay.

Ways to Reduce Latency

The solution depends on the problem's root cause, but the first step is to measure the latency. On Windows, open a Command Prompt window and type "tracert" followed by the destination; on Linux and macOS, the equivalent command is "traceroute".

This command reports every router the packet passes through, along with the round-trip time from your computer to each one. The times are cumulative, so the value for the final hop approximates your overall latency, and a large jump between two consecutive hops gives you a clearer picture of where the delays occur, allowing you to take corrective action.
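Because tracert and traceroute report the round-trip time from your computer to each hop, the useful signal is where the time jumps. The sketch below assumes you have already copied the per-hop times into a list (the values here are hypothetical) and finds the largest increase between consecutive hops.

```python
def biggest_jump(hop_rtts_ms):
    """Return (hop_number, increase_ms) for the largest RTT increase between hops.

    Each value is the round-trip time from the source to that hop, so it already
    includes the time spent on all earlier hops.
    """
    best_hop, best_jump = 1, hop_rtts_ms[0]  # hop 1 is measured from the source itself
    for i in range(1, len(hop_rtts_ms)):
        jump = hop_rtts_ms[i] - hop_rtts_ms[i - 1]
        if jump > best_jump:
            best_hop, best_jump = i + 1, jump
    return best_hop, best_jump


# Hypothetical per-hop times (ms) copied from a tracert run
rtts = [1.2, 8.5, 9.1, 10.4, 62.8, 63.5]
print(f"overall latency ~ {rtts[-1]} ms")
hop, jump = biggest_jump(rtts)
print(f"largest increase: {jump:.1f} ms at hop {hop}")
```

Treat a single slow hop with caution: routers often give low priority to answering traceroute probes themselves, so a jump only points to a real problem if the hops after it stay high.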

The following are some possible solutions.

- Subnetting

Subnetting is the practice of dividing a network into smaller subnetworks, typically grouping endpoints that frequently communicate with one another. Breaking the network down into these smaller groups lowers bandwidth congestion and latency. It also makes your network easier to manage, especially if it spans multiple geographical locations.
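Python's standard `ipaddress` module can show what carving a network into subnets looks like. The sketch assumes a hypothetical 10.0.0.0/24 office network split into four /26 subnets, one per hypothetical group of endpoints:

```python
import ipaddress

office = ipaddress.ip_network("10.0.0.0/24")  # hypothetical office network
groups = ["sales", "engineering", "voip-phones", "guests"]  # hypothetical endpoint groups

# Split the /24 into four /26 subnets and give each group its own
plan = dict(zip(groups, office.subnets(new_prefix=26)))
for name, subnet in plan.items():
    usable = subnet.num_addresses - 2  # minus network and broadcast addresses
    print(f"{name:<12} {subnet}  {usable} usable hosts")
```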

- Traffic Shaping

As the name implies, traffic shaping is a strategy for controlling bandwidth allocation so that mission-critical components of your business have continuous and uninterrupted network connectivity.

This congestion-management approach controls the flow of data packets inside a network by giving priority to vital applications and delaying less important packets to make room for the critical ones.

To clarify, throttling restricts the flow of packets into a network, whereas rate limiting controls the flow of packets out of a network.
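Traffic shaping and rate limiting are commonly built on a token bucket: tokens accumulate at a fixed rate up to a maximum, and a packet may be sent only if enough tokens are available. The minimal Python sketch below assumes byte-based tokens and passes the current time in explicitly to keep it deterministic; a shaper would hold a non-conforming packet until tokens are available, whereas a policer would drop it.

```python
class TokenBucket:
    """Token bucket measured in bytes; `now` is a timestamp in seconds supplied by the caller."""

    def __init__(self, rate_bytes_per_s, capacity_bytes):
        self.rate = rate_bytes_per_s
        self.capacity = capacity_bytes
        self.tokens = capacity_bytes  # start with a full bucket
        self.last = 0.0

    def allow(self, packet_bytes, now):
        """Return True if the packet conforms (and spend its tokens), else False."""
        # Refill for the time elapsed since the last check, capped at the bucket size
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False  # a shaper would queue this packet; a policer would drop it


bucket = TokenBucket(rate_bytes_per_s=1_000, capacity_bytes=1_500)
print(bucket.allow(1_000, now=0.0))  # True: the bucket starts full
print(bucket.allow(1_000, now=0.0))  # False: only 500 bytes of tokens left
print(bucket.allow(1_000, now=0.5))  # True: 0.5 s refilled another 500 bytes
```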

- Load Balancing

Another frequent approach is load balancing, which distributes incoming network traffic across several backend servers so the increased activity can be handled. A load balancer can be thought of as a traffic officer that sits at the entry point and directs each request to a server that can handle it. The aim is to make the best use of bandwidth and server capacity while keeping responses fast.
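The simplest load-balancing policy is round robin, where each new request goes to the next server in turn. The sketch below assumes three hypothetical backend names; real load balancers also offer policies such as least connections, plus health checks that skip unhealthy servers.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands each new request to the next backend in turn."""

    def __init__(self, backends):
        self._backends = cycle(backends)

    def pick(self):
        return next(self._backends)


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])  # hypothetical servers
print([lb.pick() for _ in range(5)])  # ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```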

These are some of the most prevalent ways of reducing latency. Often, these solutions also address the issue of jitter, especially if the causes of both latency and jitter are linked.

- Set Up a Content Delivery Network (CDN)

Using a Content Delivery Network (CDN) is one of the most effective ways to reduce network latency. To make better use of networking resources, a CDN employs content caching, connection optimization, and progressive image rendering.

With content caching, for example, the CDN stores copies of your web pages, often compressed, on servers at its Points of Presence (PoPs) close to your visitors. This means visitors to your website have a shorter round-trip time to access the content.

Similarly, CDNs optimize your connection using a variety of techniques. One of the most basic is routing traffic over Tier 1 networks through the fewest possible hops. This reduces the round-trip time and provides a better experience for the end user.
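The benefit of caching at a PoP can be sketched with a toy model: the first request for a page has to travel from the PoP back to the origin, while later requests are served from the PoP. The round-trip figures (10 ms to the PoP, 80 ms from PoP to origin) and the `EdgeCache` class are hypothetical, and real CDNs also handle expiry (TTLs) and cache invalidation.

```python
class EdgeCache:
    """Toy CDN PoP: misses are fetched from the origin, hits are served locally."""

    def __init__(self, origin, edge_rtt_ms=10, origin_rtt_ms=80):
        self.origin = origin  # callable: path -> content
        self.store = {}
        self.edge_rtt_ms = edge_rtt_ms
        self.origin_rtt_ms = origin_rtt_ms

    def get(self, path):
        """Return (content, latency_ms) as seen by the visitor."""
        if path in self.store:
            return self.store[path], self.edge_rtt_ms
        content = self.origin(path)
        self.store[path] = content
        return content, self.edge_rtt_ms + self.origin_rtt_ms


cdn = EdgeCache(origin=lambda path: f"<html>{path}</html>")
print(cdn.get("/pricing"))  # first visitor: cache miss, ('<html>/pricing</html>', 90)
print(cdn.get("/pricing"))  # later visitors: cache hit, ('<html>/pricing</html>', 10)
```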

Tools to Monitor Jitter and Latency

Many tools monitor jitter and latency for you and tell you what's causing an issue. This simplifies your job, because all you have to do is implement the right solution.

Here's what we think are the finest tools for dealing with jitter and latency:

- SolarWinds VoIP and Network Quality Manager

This is a sophisticated solution from SolarWinds that analyses every aspect of traffic and network performance indicators like jitter and latency to provide you with thorough information. Its advanced features also pinpoint the actual cause, allowing you to correct the problem right away.

- Paessler Router Traffic Grapher

PRTG is a useful tool for monitoring many parts of your network. It is based on sensors, so you can use one sensor per device to track a specific metric like jitter.

- PingTrend

This is a simple application with a user-friendly interface for measuring and displaying key parameters like jitter and latency.

Conclusion

Although there are some similarities between jitter and latency, they are distinct concepts. Understanding each of them is useful for diagnosing problems and finding solutions. If you want your VoIP services to run smoothly, you'll need to make sure that jitter and latency aren't wreaking havoc on your network.

While latency and jitter can be bothersome at home, in a business they can lead to lost productivity and lost revenue, so staying on top of them is critical.

Monitor Your Entire Application with Atatus

Atatus is a Full Stack Observability Platform that lets you review problems as if they happened in your application. Instead of guessing why errors happen or asking users for screenshots and log dumps, Atatus lets you replay the session to quickly understand what went wrong.

We offer Application Performance Monitoring, Real User Monitoring, Server Monitoring, Logs Monitoring, Synthetic Monitoring, Uptime Monitoring, and API Analytics. It works perfectly with any application, regardless of framework, and has plugins.

Atatus can benefit your business by providing a comprehensive view of your application, including how it works, where performance bottlenecks exist, which users are most impacted, and which errors break your code across your frontend, backend, and infrastructure.

If you are not yet an Atatus customer, you can sign up for a 14-day free trial.
