Kubernetes Load Balancers: A Beginner's Guide

In the early days of the internet, organizations faced resource allocation challenges when running applications on physical servers. They initially ran each application on its own physical server, which was costly and did not scale.

As the internet continued to evolve, organizations sought more efficient ways to manage their applications and workloads. Virtualization emerged as a solution, allowing multiple virtual machines to run on a single physical server, providing better resource utilization and scalability.

However, managing these virtual machines still required manual intervention and lacked the agility needed for modern, cloud-native applications.

This is where Kubernetes comes into play. One of its key features is its load balancer capabilities, which play a pivotal role in ensuring optimal resource allocation and high availability for applications in a modern cloud-native environment.

In this blog post, let us explore Kubernetes load balancers and related topics.

Table of Contents

- What is a Load Balancer?

- How Does a Load Balancer Work?

- Components of Kubernetes Services

- Load Balancers and Ingresses

- Load Balancing Algorithm

- Optimal Approaches for Managing a Kubernetes Load Balancer

What is a Load Balancer?

A Kubernetes load balancer is a tool or mechanism that helps evenly distribute network traffic to applications running in Kubernetes clusters. It ensures that these applications remain responsive and available, even when multiple instances of them are running.

It distributes incoming traffic across multiple containers, ensuring that applications remain available even if some containers fail or need to be scaled up or down.

This load balancing helps optimize the use of resources and improves the overall performance and reliability of applications in a Kubernetes environment.

How Does a Load Balancer Work?

Imagine you have a big website with lots of people visiting every minute. If all the requests go to just one computer, it can get overwhelmed and crash. That's where a "Load Balancer" comes in. It's like having a dynamic traffic manager for your website or application.

In Kubernetes, you can achieve load balancing with a Service of type LoadBalancer. First, you define a Service manifest that tells Kubernetes to set up a Load Balancer for your application. For instance, for an e-commerce website, the manifest might look like this:

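```yaml
apiVersion: v1
kind: Service
metadata:
  name: e-commerce-service
spec:
  # Send traffic to pods carrying this label
  selector:
    app: e-commerce-app
  ports:
    - protocol: TCP
      port: 80          # port the load balancer listens on
      targetPort: 8080  # port the application pods listen on
  type: LoadBalancer
```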

After you apply this configuration to a cluster running on a supported cloud provider, Kubernetes works with the provider to create an external Load Balancer. This Load Balancer listens for incoming web traffic on port 80 and directs it to the pods running your e-commerce application on port 8080.

As your website becomes more popular or as you add more pods to handle the load, the Load Balancer adapts by distributing traffic across the available pods, ensuring high availability and a smooth user experience.

Thus, a load balancer ensures high availability and efficient load distribution, making it a valuable tool for applications like e-commerce websites that experience fluctuating and high demand from external users.

Components of Kubernetes Services

In Kubernetes, a service is a fundamental resource that helps facilitate network communication and load balancing among the containers within a cluster. Here is an overview of Kubernetes services:

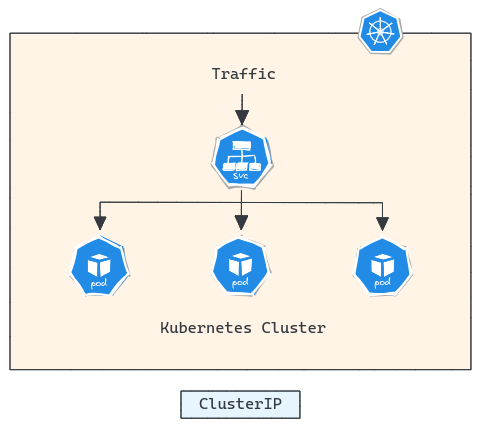

1. ClusterIP

ClusterIP is a Kubernetes service type that provides a stable, internal IP address within the cluster for a set of pods. It is used for inter-pod communication, allowing pods within the same cluster to access the service without exposing it to external traffic.

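```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-service
spec:
  # Send traffic to pods carrying this label
  selector:
    app: my-app
  ports:
    - protocol: TCP
      port: 80          # port exposed on the cluster-internal IP
      targetPort: 8080  # port the pods listen on
  type: ClusterIP
```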

This Kubernetes code defines a ClusterIP service named my-service that selects pods labelled with app: my-app, exposes port 80, and forwards traffic to those pods on port 8080.

Disadvantages:

- Limited External Access

- Manual Load Balancing

- Complex Routing Requirements

- No Session Affinity by Default (it can be enabled with sessionAffinity: ClientIP)

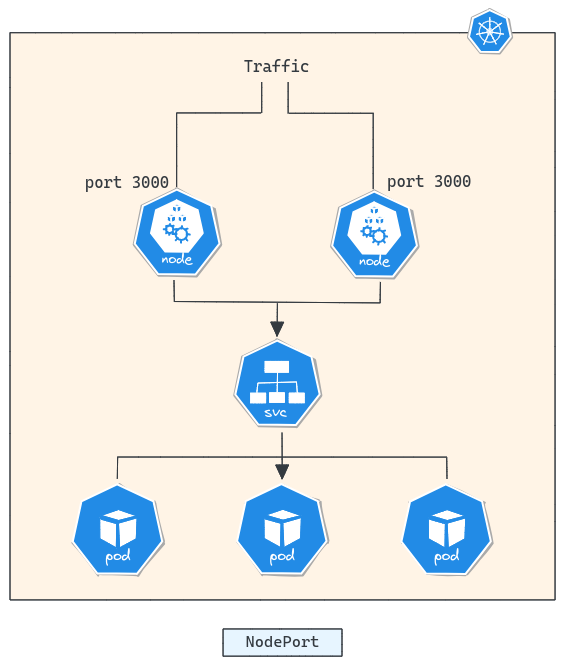

2. NodePort

NodePort is a Kubernetes service type that exposes services to external clients by binding a specific port on each node's IP address to the service. It is used when services within a Kubernetes cluster need to be directly accessible from outside the cluster.

Disadvantages:

- Limited Load Balancing

- Manual Configuration
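As an illustration of the NodePort type described above, here is a minimal sketch of a manifest. The service name, label, and port numbers are hypothetical; the only fixed detail is that, by default, Kubernetes allocates node ports from the range 30000-32767.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-nodeport-service   # hypothetical name
spec:
  type: NodePort
  selector:
    app: my-app               # hypothetical pod label
  ports:
    - protocol: TCP
      port: 80          # port on the service's cluster-internal IP
      targetPort: 8080  # port the pods listen on
      nodePort: 30080   # port opened on every node (default range 30000-32767)
```

With a manifest like this, external clients would reach the application at any node's IP address on port 30080. Omitting nodePort lets Kubernetes pick a free port from the range.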

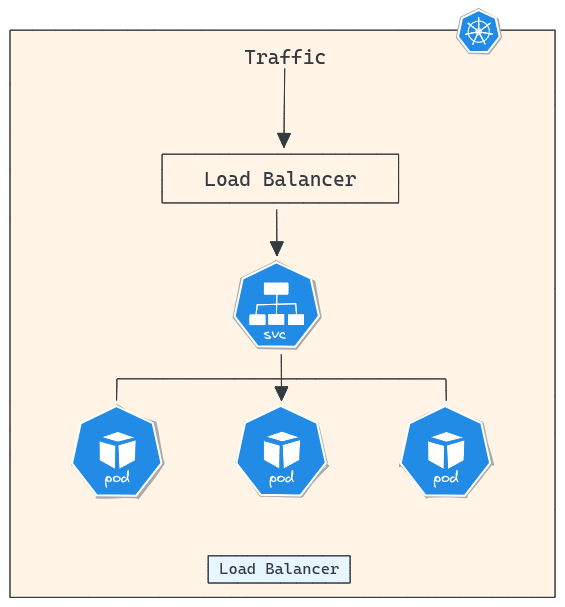

3. Load Balancer

Load Balancer in Kubernetes is a service type that provides external access to services by automatically configuring a cloud or hardware load balancer. Load balancers are more efficient and beneficial for externally accessible services compared to NodePort or ClusterIP services, as they handle traffic distribution, provide automatic failover, and integrate with cloud provider features for high availability.

Advantages:

- Minimizes application downtime and improves reliability

- Automatic distribution of incoming traffic

- Allows mapping of external and internal ports

- Simplified SSL certificate management

4. Ingress

Ingress in Kubernetes is an API object that serves as a layer 7 load balancer, enabling the management of external access to services within a cluster by defining routing rules for HTTP and HTTPS traffic. Ingress controllers implement these rules, allowing traffic to be routed based on host, path, and other criteria, while also handling SSL termination for secure connections.

Ingress plays a crucial role in directing network traffic to services based on pre-defined routing rules or algorithms. Additionally, it facilitates the external accessibility of pods, typically through HTTP, and offers flexibility in choosing various load distribution strategies to align with your specific business objectives and environmental requirements.
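To make the routing rules concrete, here is a minimal sketch of an Ingress that routes by host and path. It assumes an Ingress controller is installed in the cluster and registered under the class name nginx; the host name and the backend service names are hypothetical.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shop-ingress            # hypothetical name
spec:
  ingressClassName: nginx       # assumes a controller registered as "nginx"
  rules:
    - host: shop.example.com    # hypothetical host
      http:
        paths:
          # Requests under /cart go to a dedicated service
          - path: /cart
            pathType: Prefix
            backend:
              service:
                name: cart-service        # hypothetical service
                port:
                  number: 80
          # Everything else goes to the main storefront service
          - path: /
            pathType: Prefix
            backend:
              service:
                name: storefront-service  # hypothetical service
                port:
                  number: 80
```

An Ingress resource has no effect on its own; the rules only take effect once an Ingress controller is running to implement them.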

Load Balancers and Ingresses

Load Balancers and Ingresses are two ways to make your services accessible to the internet in a Kubernetes environment. Load Balancers work well when you have just a few services to expose, but they have limitations when you have many services, because each LoadBalancer service typically gets its own cloud load balancer. They also can't do things like routing traffic based on specific parts of a web address (like the path or domain), and they depend on the cloud provider's technology.

In contrast, Ingress provides more control. It lets you set rules for how incoming internet traffic should be directed, like sending requests to the right service based on the web address you're using.

This means you can use a single Load Balancer to manage traffic for multiple services, making things simpler. Plus, depending on the Ingress controller you use, Ingress supports extra features like TLS termination, URL rewriting, and rate limiting. It's a more flexible and powerful way to manage internet traffic for many services in Kubernetes.
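The extra features mentioned above are usually configured through controller-specific annotations. The following is a sketch that assumes the ingress-nginx controller; other controllers use different annotations. The host, the TLS secret name, and the backend service are hypothetical.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shop-ingress-tls        # hypothetical name
  annotations:
    # ingress-nginx specific: allow about 10 requests per second per client IP
    nginx.ingress.kubernetes.io/limit-rps: "10"
    # ingress-nginx specific: rewrite /api/<rest> to /<rest> before forwarding
    nginx.ingress.kubernetes.io/use-regex: "true"
    nginx.ingress.kubernetes.io/rewrite-target: /$2
spec:
  ingressClassName: nginx
  tls:
    - hosts:
        - shop.example.com      # hypothetical host
      secretName: shop-tls      # hypothetical Secret holding the certificate and key
  rules:
    - host: shop.example.com
      http:
        paths:
          - path: /api(/|$)(.*)
            pathType: ImplementationSpecific
            backend:
              service:
                name: api-service   # hypothetical service
                port:
                  number: 80
```

The tls section is part of the standard Ingress API, while the annotations are only understood by ingress-nginx and are ignored by other controllers.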

Load Balancing Algorithm

A load balancing algorithm is a set of rules or procedures used in computer networking to distribute incoming network traffic or workload across multiple servers or resources. These algorithms help distribute the "load" or demand in a way that prevents bottlenecks and ensures a smoother, more responsive experience for users or clients.

1. Round-Robin

Round-Robin is the simplest of these algorithms. It sends each new request to the next server in the list and returns to the first server after reaching the last. This approach ensures an even distribution of requests across all available servers, making it an excellent choice when all servers have similar capabilities, and you want to balance the load uniformly.

| L4 Round Robin | L7 Round Robin |
|---|---|
| Operates at the transport layer (Layer 4), considering IP addresses and port numbers | Operates at the application layer (Layer 7), analyzing the content and application-specific data |
| Offers basic load distribution but lacks application-awareness and advanced routing capabilities | Provides more advanced and intelligent traffic routing, making it suitable for sophisticated applications and services |
| Simpler and less complex compared to L7 load balancing | More complex and capable of handling intricate routing scenarios |
| Basic load balancing, common in networks | Best for complex apps, uses application data |

2. Ring Hash

Ring Hash Load Balancing is a consistent-hashing method: servers are placed on a hash ring, and each connection is assigned to a server by hashing a distinct key, such as the client's IP address. This approach is highly effective for situations involving numerous servers and dynamic content where maintaining a balanced load is critical.

A significant advantage of this method is that it doesn't necessitate a full recalculation of the distribution when servers are added or removed, thus minimizing disruption to existing connections.

Nevertheless, it's important to be aware that the Ring Hash technique can introduce some latency to request processing, and it generates relatively large lookup tables, potentially affecting performance by not fitting efficiently into the CPU cache.
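Kubernetes itself does not expose a ring hash setting on Services, so hash-based balancing is normally configured in the proxy or Ingress controller. As a hedged sketch, the ingress-nginx controller offers consistent hashing through an annotation; this is a closely related technique and not necessarily the exact ring hash implementation described above. The host and service names are hypothetical.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: hashed-ingress          # hypothetical name
  annotations:
    # ingress-nginx specific: pick the backend pod by hashing this key,
    # so requests for the same URI keep landing on the same pod
    nginx.ingress.kubernetes.io/upstream-hash-by: "$request_uri"
spec:
  ingressClassName: nginx
  rules:
    - host: shop.example.com    # hypothetical host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: storefront-service   # hypothetical service
                port:
                  number: 80
```

The choice of key matters: hashing on the request URI suits caching workloads, while a per-client key would keep each client on one pod.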

3. Least Connection

Least Connection Load Balancing is a technique that guides incoming traffic towards servers with the fewest active connections. This method adapts to the status of each server, providing an effective means of load distribution by favoring servers with lower connection counts.

It excels at balancing workloads, especially when servers exhibit varying performance or health. However, when all servers are in a similar state and have equivalent connection counts, the load distribution remains uniform among them.

Optimal Approaches for Managing a Kubernetes Load Balancer

When it comes to implementing load balancers in a Kubernetes environment, optimizing their configuration is essential for ensuring efficient load distribution and seamless operation. Here are a few optimal approaches for better management.

1. Choose the Right Load Balancer Type

The most crucial aspect in choosing the right load balancer is aligning it with your application's specific requirements, including traffic types and protocols. Prioritize the load balancer's scalability and performance capabilities to handle your expected traffic volumes effectively. Additionally, consider the level of control and customization needed to meet your application's unique demands.

2. Key Considerations for Load Balancer Enablement

To enable a load balancer, set the service type to LoadBalancer, and use provider-specific annotations to select its type and cloud-specific features. These settings are integral to optimal load balancer management within Kubernetes, because they directly influence how external traffic is routed to your services and applications.
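As a sketch of what such an annotation can look like, the manifest below assumes a cluster running on AWS, where this annotation requests a Network Load Balancer. Other cloud providers define their own annotation keys, so check your provider's documentation before using one. The service name and label are reused from the earlier e-commerce example for illustration.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: e-commerce-service
  annotations:
    # AWS-specific (assumption: the cluster runs on AWS); ignored elsewhere
    service.beta.kubernetes.io/aws-load-balancer-type: "nlb"
spec:
  type: LoadBalancer    # enables the external load balancer
  selector:
    app: e-commerce-app
  ports:
    - protocol: TCP
      port: 80
      targetPort: 8080
```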

3. Enabling Probes for Kubernetes Load Balancing

Configuring readiness and liveness probes in your Kubernetes deployments is essential. Readiness probes determine whether a pod is ready to serve traffic; a pod that fails the check is removed from the service's endpoints, so the load balancer only sends traffic to pods that can handle it. Liveness probes check whether a container is still healthy and trigger a restart when it is not, ensuring continuous operation and high availability, even in the presence of application issues.
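Here is a minimal sketch of both probes on a Deployment. The image, the /ready and /healthz endpoints, and the timing values are hypothetical and should be adapted to what your application actually exposes.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: e-commerce-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: e-commerce-app
  template:
    metadata:
      labels:
        app: e-commerce-app
    spec:
      containers:
        - name: web
          image: example.com/e-commerce-app:1.0   # hypothetical image
          ports:
            - containerPort: 8080
          # Pod receives service traffic only while this check passes
          readinessProbe:
            httpGet:
              path: /ready        # hypothetical endpoint
              port: 8080
            initialDelaySeconds: 5
            periodSeconds: 10
          # Container is restarted if this check keeps failing
          livenessProbe:
            httpGet:
              path: /healthz      # hypothetical endpoint
              port: 8080
            initialDelaySeconds: 15
            periodSeconds: 20
```

Giving the liveness probe a longer initial delay than the readiness probe is a common way to avoid restarting containers that are simply slow to start.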

4. Regularly Updating and Testing Configuration

Regularly updating and testing your load balancer configuration in a Kubernetes environment is essential for maintaining optimal performance, security, and reliability. Regular updates and testing help prevent issues and vulnerabilities from impacting your production environment.

5. Documentation and Alerts

Proper documentation is essential to describe load balancer configurations and service details, aiding understanding and troubleshooting. Implementing alerting systems is vital to proactively detect and address issues, ensuring the stability and reliability of load balancing in Kubernetes.

Conclusion

Kubernetes, the prominent container orchestration platform, offers a comprehensive set of service concepts and features that streamline load balancing. Kubernetes Services, in combination with Load Balancers and Ingresses, provide a robust framework for the orchestration of network traffic within containerized applications.

Load balancing algorithms further fine-tune this process. Choosing the right load balancing method and staying mindful of container dynamics is key to efficient application infrastructure in the evolving landscape of cloud-native computing.

Atatus Kubernetes Monitoring

With Atatus Kubernetes Monitoring, users can gain valuable insights into the health and performance of their Kubernetes clusters and the applications running on them. The platform collects and analyzes metrics, logs, and traces from Kubernetes environments, allowing users to detect issues, troubleshoot problems, and optimize application performance.

You can easily track the performance of individual Kubernetes containers and pods. This granular level of monitoring helps to pinpoint resource-heavy containers or problematic pods affecting the overall cluster performance.
