9 Popular Kubernetes Distributions You Should Know About

Kubernetes has become the go-to platform for container orchestration, allowing teams to more efficiently manage their containerized applications.

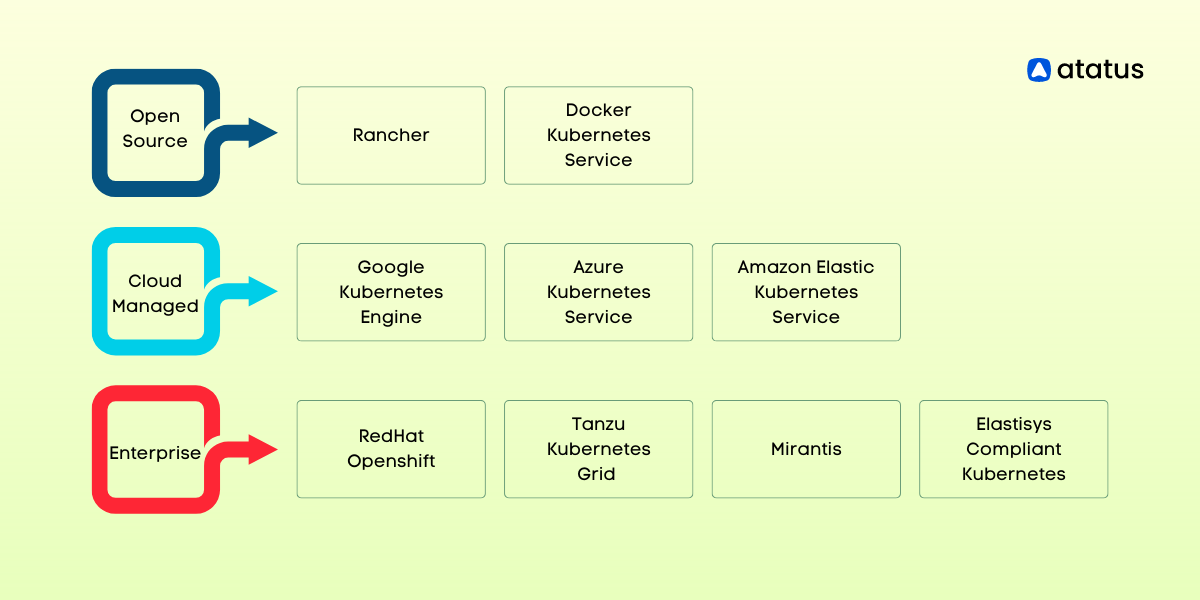

Vanilla Kubernetes and managed Kubernetes are the two options available when building a Kubernetes system. A team using vanilla Kubernetes must download the source files, work through the installation path, and configure each machine's environment itself.

Managed Kubernetes, by contrast, comes pre-compiled and pre-configured with tools that strengthen specific areas such as storage, security, deployment, and monitoring. Managed Kubernetes versions are also known as Kubernetes distributions.

Kubernetes was created by Google, a pioneer in search engine technology, and is now maintained by the Cloud Native Computing Foundation (CNCF). Developers can build apps with a microservices design on Kubernetes. Its advantages include portability and immutability.

Kubernetes is an open-source container orchestration system that automates the deployment, scaling, and management of software. To use it, development teams typically have to tailor a Kubernetes version to their own environment.

With its ability to automate container deployment, scaling, and management, Kubernetes has been embraced by organizations across the world. However, choosing the right Kubernetes distribution can be a challenge, with several options available in the market.

In this blog post, we will explore the nine most popular Kubernetes distributions that are currently dominating the market.

- Google Kubernetes Engine (GKE)

- Azure Kubernetes Service (AKS)

- Rancher

- Red Hat OpenShift

- Docker Kubernetes Service (DKS)

- Amazon Elastic Kubernetes Service (EKS)

- VMware Tanzu Kubernetes Grid (TKG)

- Mirantis

- Elastisys Compliant Kubernetes

Components of Kubernetes Distribution

Kubernetes Distributions should contain particular elements in order to effectively develop and oversee a Kubernetes ecosystem. Among the essential elements of a Kubernetes Distribution are:

- Container runtime

- Storage

- Networking

1. Container Runtime

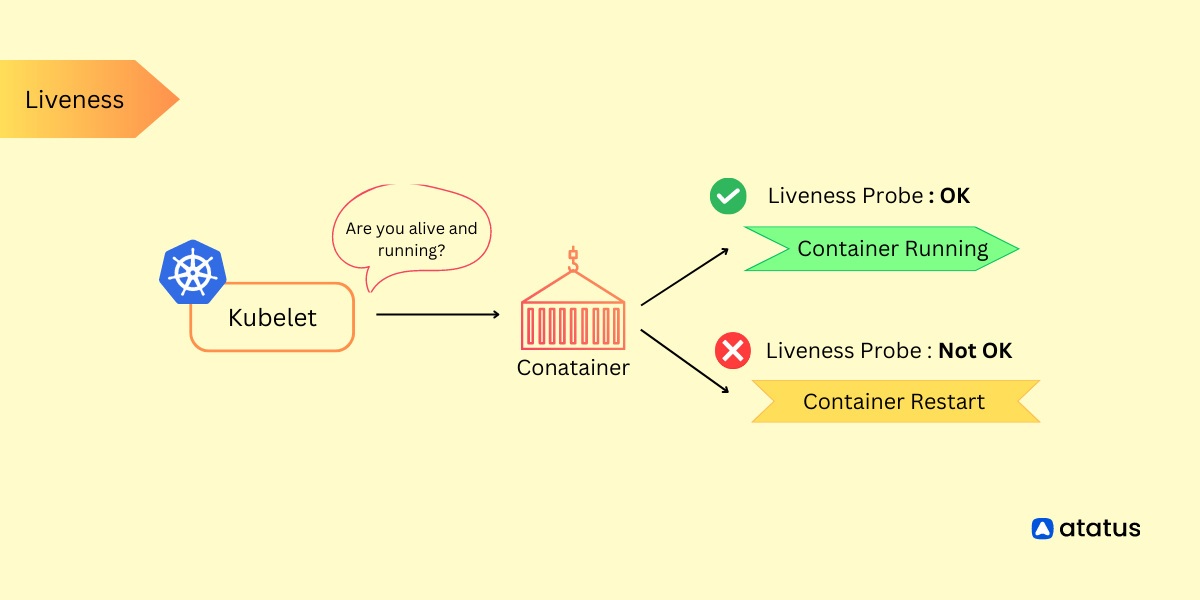

Pods, the most fundamental Kubernetes objects, house containers and are managed by the container runtime engine. The container runtime engine creates and manages containers on the physical or virtual machine where they are hosted.

Because Kubernetes talks to runtimes through the Container Runtime Interface (CRI), any CRI-compliant container runtime can run Kubernetes applications.

Different Kubernetes distributions may offer support for a variety of container runtimes. Some well-known container runtimes include Docker, containerd, CRI-O, CoreOS rkt, Canonical LXC, and frakti.
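To make the idea of a runtime interface concrete, here is a minimal sketch in Python. It is not the real CRI, which is a gRPC API; the class and function names below are hypothetical and only loosely echo the CRI calls `RunPodSandbox` and `CreateContainer`. The point is that the kubelet-like helper depends on the interface alone, so runtimes can be swapped freely.

```python
from abc import ABC, abstractmethod
from typing import List


class ContainerRuntime(ABC):
    """Hypothetical, heavily simplified stand-in for the CRI contract."""

    @abstractmethod
    def run_pod_sandbox(self, pod_name: str) -> str:
        """Create the isolated environment for a pod and return its ID."""

    @abstractmethod
    def create_container(self, sandbox_id: str, image: str) -> str:
        """Create a container inside a sandbox and return its ID."""


class FakeContainerd(ContainerRuntime):
    """Toy runtime used only for illustration; it starts nothing."""

    def run_pod_sandbox(self, pod_name: str) -> str:
        return f"containerd-sandbox-{pod_name}"

    def create_container(self, sandbox_id: str, image: str) -> str:
        return f"{sandbox_id}/{image}"


class FakeCrio(ContainerRuntime):
    """Second toy runtime with the same interface."""

    def run_pod_sandbox(self, pod_name: str) -> str:
        return f"crio-sandbox-{pod_name}"

    def create_container(self, sandbox_id: str, image: str) -> str:
        return f"{sandbox_id}/{image}"


def start_pod(runtime: ContainerRuntime, pod_name: str, images: List[str]) -> List[str]:
    """Kubelet-like helper that relies only on the interface, not the runtime."""
    sandbox_id = runtime.run_pod_sandbox(pod_name)
    return [runtime.create_container(sandbox_id, image) for image in images]


# The same call works with either runtime.
for runtime in (FakeContainerd(), FakeCrio()):
    print(start_pod(runtime, "web", ["nginx", "log-agent"]))
```

Swapping `FakeContainerd` for `FakeCrio` requires no change to `start_pod`, which is the property that lets distributions choose their own runtime.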

2. Storage

Containers are ephemeral by nature: a container runs only as long as the process inside it does. Once that process finishes, the container shuts down.

While fulfilling user requests, the majority of containerized apps generate and handle enormous amounts of data. Since storage provides a means of preserving this data, it is crucial for Kubernetes apps.

The Container Storage Interface (CSI) of Kubernetes makes it simple for third parties to develop storage options for containerized apps. While some Kubernetes distributions develop their own storage products, others integrate with existing third-party options.

GlusterFS, Portworx, Rook, OpenEBS, and Amazon Elastic Block Store (EBS) are a few examples of popular storage options for Kubernetes.
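As an illustration of how an application asks for storage that outlives its containers, the sketch below builds a PersistentVolumeClaim manifest as a Python dictionary and prints it as JSON. The claim name `app-data` and the storage class `example-csi` are made-up values; a real cluster would use a storage class provided by its installed storage driver.

```python
import json


def build_pvc(name: str, size: str, storage_class: str) -> dict:
    """Return a PersistentVolumeClaim manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteOnce: the volume can be mounted read-write by one node.
            "accessModes": ["ReadWriteOnce"],
            # Hypothetical storage class; it selects which driver provisions the volume.
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# "app-data" and "example-csi" are illustrative names, not defaults.
manifest = build_pvc("app-data", "1Gi", "example-csi")
print(json.dumps(manifest, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed output could be saved to a file and applied with `kubectl apply -f`, assuming the named storage class exists in the cluster.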

3. Networking

Kubernetes apps are usually divided into microservices that run in containers and are hosted in different Pods on different machines. Networking implementations make seamless communication between these containerized components possible.

Each distribution may depend on a networking solution to allow communication between pods, services, and the internet. Networking within Kubernetes is a herculean task. Several popular networking solutions are Flannel, Weave Net, Calico, and Canal.
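One common approach in pod networking is to carve a cluster-wide address range into one subnet per node, so that a pod's IP address reveals which node hosts it. The following sketch models that idea with Python's standard `ipaddress` module. It is a simplified model, not the implementation of any particular plugin, and the CIDR values and node names are illustrative assumptions.

```python
import ipaddress
import itertools
from typing import Dict, List, Optional


def assign_node_subnets(cluster_cidr: str, nodes: List[str],
                        node_prefix: int = 24) -> Dict[str, ipaddress.IPv4Network]:
    """Give each node its own slice of the cluster-wide pod address range."""
    cluster = ipaddress.ip_network(cluster_cidr)
    subnets = list(itertools.islice(cluster.subnets(new_prefix=node_prefix), len(nodes)))
    if len(subnets) < len(nodes):
        raise ValueError("cluster CIDR is too small for the number of nodes")
    return dict(zip(nodes, subnets))


def node_for_pod_ip(subnets: Dict[str, ipaddress.IPv4Network], pod_ip: str) -> Optional[str]:
    """Find which node hosts a pod, based only on the pod's IP address."""
    address = ipaddress.ip_address(pod_ip)
    for node, subnet in subnets.items():
        if address in subnet:
            return node
    return None


# Illustrative values: a /16 pod range split into one /24 per node.
subnets = assign_node_subnets("10.244.0.0/16", ["node-a", "node-b", "node-c"])
for node, subnet in subnets.items():
    print(node, subnet)
print(node_for_pod_ip(subnets, "10.244.1.5"))
```

With these inputs, `node-b` receives `10.244.1.0/24`, so traffic for `10.244.1.5` can be routed to that node without any per-pod lookup table.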

9 Popular Kubernetes Distributions

Popular Kubernetes distributions offer diverse flavors of the Kubernetes platform, each customized to meet different preferences and requirements. Below, you'll find a list of nine well-known Kubernetes distributions that address a wide range of use cases:

1. Google Kubernetes Engine (GKE)

Google Kubernetes Engine (GKE) is a managed service from Google Cloud that helps you deploy and manage applications using Kubernetes, a system for managing containerized applications.

- GKE takes care of complicated tasks like setting up and upgrading Kubernetes clusters, so developers can focus on their apps.

- It automatically adjusts the size of your applications based on how much they're being used, which saves resources and keeps things running smoothly.

- GKE works well with other Google Cloud services, so you can use things like storage and machine learning easily.

- It's built with strong security features, like automatic updates and repairs, and it makes sure only the right people can access your apps.

- You can use GKE to run your apps in different places for better reliability and recovery options.

- GKE can create private clusters that give your apps an extra layer of protection.

- If you're using Google Cloud's hybrid and multi-cloud platform called Anthos, GKE helps you manage your apps consistently across different environments.

- It's friendly for DevOps practices because it works with popular tools, has a dashboard to monitor things, and provides command-line tools.
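The automatic scaling mentioned above can be illustrated with the replica calculation documented for the upstream Kubernetes Horizontal Pod Autoscaler: desired replicas equal the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below is a simplification. It omits the tolerance band and stabilization behavior of the real autoscaler, the minimum and maximum bounds are illustrative, and it does not describe any GKE-specific logic.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Scale replicas in proportion to how far the metric is from its target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas at 90% average CPU with a 60% target scale out to 6.
print(desired_replicas(4, 90, 60))
# 4 replicas at 30% average CPU with a 60% target scale in to 2.
print(desired_replicas(4, 30, 60))
```

Rounding up means the workload errs on the side of extra capacity, while the bounds keep a metric spike from scaling the application without limit.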

2. Azure Kubernetes Service (AKS)

Azure Kubernetes Service (AKS) simplifies Kubernetes cluster management within Azure's cloud environment. It automates cluster setup, freeing up developers to focus on coding and app deployment.

AKS dynamically scales apps based on demand, optimizing performance. Integration with Azure services, like Active Directory and Virtual Networks, streamlines development, while its networking capabilities adapt to intricate setups.

AKS accommodates both Windows and Linux containers, serving diverse workloads. Multi-zone deployments ensure high availability. Security features, such as role-based access control and Azure Active Directory integration, bolster applications.

With Azure Dev Spaces offering a developer-friendly space for quick testing and iteration, AKS empowers businesses to harness Kubernetes without complexities.

3. Rancher

For software teams seeking to handle Kubernetes applications anywhere—private cloud, public cloud, or on-premises—Rancher provides a complete stack. The stack gives administrators and developers a collection of tools to launch and control Kubernetes resources in any system, making it easier to manage workloads that are containerized.

The Rancher stack makes it simple to provision resources for containerized apps by packaging core services in a portable layer. In addition to infrastructure management, Rancher offers multi-cluster deployment through its application catalog, container scheduling and orchestration, and enterprise-grade control.

Rancher supports all container runtimes, but the Rancher Kubernetes Engine (RKE) requires Docker to manage containers. Because Rancher complies with the Container Network Interface (CNI) architecture, it can use the network plugins that Kubernetes supports. Rancher provides persistent storage services for stateful apps and implements block storage through its own storage solution, Longhorn.

For multi-cluster Kubernetes apps operating in mixed cloud settings, Rancher is thought to be the best option. It is also strongly advised when cluster managers must give end users direct control over namespaces and groups.

4. Red Hat OpenShift

OpenShift enables on-premises management of containers built with Docker and orchestrated by Kubernetes. It was created by Red Hat as part of a collection of container management tools for building cloud-enabled services on-premises that can be deployed anywhere.

Admins can create and test apps on the platform's user-friendly UI before deploying them to the cloud. The platform is open-source. Numerous benefits are provided by OpenShift, including enhanced security, streamlined cloud application administration, and fast application builds. Our Kubernetes data show that it is the most widely used choice for hybrid and multi-cloud installations.

OpenShift is built on top of Docker and Kubernetes. It also supports the Open Container Initiative (OCI), which enables interoperability with various container runtimes. Red Hat OpenShift Container Storage, which offers dynamic, highly available persistent storage for containerized apps, is the storage foundation of OpenShift. OpenShift's Software Defined Networking (SDN) technology provides plugins for configuring overlay networks for Kubernetes clusters.

Large production environments that need thorough testing before any modifications to apps are made can use Red Hat OpenShift. It provides platform-as-a-service, software-as-a-service, and infrastructure-as-a-service features that enable agile development and are helpful in many applications, including those in the fields of healthcare, banking, and transportation, among others.

5. Docker Kubernetes Service (DKS)

DKS, a feature of Docker Enterprise, was created to make configuring and operating Kubernetes-based enterprise apps less difficult. With the Docker Kubernetes Service, development teams can incorporate Kubernetes into every step of the DevOps process, from the laptop to the production environment. In addition to streamlining Kubernetes operations, DKS keeps Kubernetes apps secure throughout their lifetime.

With the Docker Kubernetes Service, organizations can speed up application development, keep flexibility in their choice of vendor and infrastructure, and use containerd as the runtime behind the Kubernetes Container Runtime Interface (CRI).

Although built on top of the Docker Engine, DKS complies with CRI and supports a variety of CRI-compliant runtimes. Depending on the OS and workload, DKS supports a variety of storage drivers through a pluggable design.

DKS uses a number of storage drivers, including aufs, overlay2, devicemapper, zfs, and btrfs. Through its pluggable architecture, the Docker Engine also offers flexible networking that supports numerous drivers, including bridge, host, overlay, macvlan, and third-party network plugins.

6. Amazon Elastic Kubernetes Service (EKS)

Amazon Elastic Kubernetes Service (EKS) is popular because it provides high availability by running multiple instances of the Kubernetes control plane across several availability zones. The service makes it easy to deploy, manage, and scale Kubernetes apps both on-premises and in the AWS cloud.

AWS provides several compute options, such as Fargate and EKS managed node groups, that provision processing resources on demand. EKS applies the most recent security patches to the Kubernetes control plane promptly, fixing critical issues and helping keep applications secure.

Because EKS complies with the OCI, CRI, CNI, and CSI standards, teams can develop and deploy applications anywhere: in a public or private cloud, or on-premises. AWS also provides a wide range of integrated networking and storage options that work well with EKS-based apps, while the standards compliance helps limit vendor lock-in. Web apps, hybrid deployments, machine learning, and batch processing are some of the best AWS EKS use cases.

7. VMware Tanzu Kubernetes Grid (TKG)

VMware Tanzu is a suite of Kubernetes-specific products that makes development and management easier, with an emphasis on modernizing application development and infrastructure virtualization. Organizations can use VMware Tanzu to speed up the creation and delivery of applications by using a collection of container images that administrators build and update.

This enables businesses to gain:

- Developer experience automation

- Legitimate open-source containers

- Industry-ready Kubernetes Runtime

- Integrated multi-cluster administration

VMware Tanzu also provides full-stack observability, acting as a single point of access for all teams monitoring and analyzing cluster application and infrastructure metrics.

Teams can use containers built in Docker or any other runtime engine because Tanzu supports both OCI- and CRI-compliant runtimes. Tanzu uses vSphere to handle storage, and vSphere's CNS-CSI driver lets it support all ephemeral and persistent Kubernetes storage options.

Tanzu uses vSphere for Kubernetes to deploy VMware's NSX Container Networking Solution, which offers full-stack networking features. Teams are now free to use any native Kubernetes networking option.

8. Mirantis

Mirantis sells a distribution of the open-source OpenStack platform, which is primarily used to run Infrastructure-as-a-Service (IaaS) services on physical or virtual machines. The Mirantis Kubernetes Engine, previously known as Docker Enterprise, enables DevOps teams to build and ship code more quickly to private as well as public clouds.

Mirantis adds a number of bespoke databases, message queuing, staging elements, and orchestration capabilities to the OpenStack framework. This offers unified cluster operations across multi-cloud apps and removes complexity in architecture and operations. The Mirantis Kubernetes Engine is a market-leading Kubernetes distribution due to its ease of use and usefulness.

Given that Mirantis complies with the Open Container Initiative (OCI) standard, any container runtimes should function as intended. Additionally, Dockershim, the Kubernetes component allowing Mirantis to execute Docker containers, has built-in support.

Teams using Mirantis can keep running Docker containers without significant configuration changes or patches to Kubernetes, despite the Kubernetes project's announcement that its built-in Docker support (dockershim) would be deprecated as of version 1.20.

Software-defined storage, specifically Ceph for block as well as object storage, is the main storage technology used by the Mirantis Cloud Platform.

Ceph, an open-source software-defined storage option, offers an integrated, distributed framework for high-performing, self-healing, and scalable storage. Calico, which supports various networking topologies and highly scalable networks, is the primary CNI plugin for the Mirantis Kubernetes Engine.

9. Elastisys Compliant Kubernetes

A managed Kubernetes service called Elastisys provides round-the-clock assistance for the complete lifetime of an application. The platform includes built-in CNCF-approved open-source tooling and a security component that enables teams to take full advantage of Kubernetes while also implementing stringent security policies.

Elastisys open-sourced the Compliant Kubernetes distribution in November 2020, enabling people and organizations to combine self-built infrastructure with managed tools for better compliance and security.

The present distribution ensures security conformance throughout the complete Software Development Life Cycle by addressing GDPR, PCI-DSS, ISO-27001, SOC2, and HIPAA standards. Elastisys Compliant Kubernetes has advantages like automation as well as platform observability in addition to security and compliance.

Elastisys Compliant Kubernetes is cloud-native and supports CRI- and OCI-compliant runtimes, so it can orchestrate containers built and run by any such runtime engine. It also supports every networking and storage driver that Kubernetes offers, providing a full cloud-native experience.

Conclusion

By providing pre-built settings, Kubernetes distributions help teams manage Kubernetes clusters effectively. More vendors are entering the market as Kubernetes usage continues to increase.

Some of the most well-liked Kubernetes distributions have been discussed in this piece, along with their characteristics and appropriate use cases. These should be taken into account when choosing a tool to handle the Kubernetes-based workloads in your organization.

Developers now use Kubernetes as their go-to tool for container management at scale. The Google-developed open-source container management system is esteemed, well-supported, and still developing.

Kubernetes is also vast, intricate, and challenging to install and set up, and the end user is left to do the bulk of the work. Therefore, the best course of action is to look for a comprehensive container solution that incorporates Kubernetes as a supported, managed component rather than picking up the pieces and trying to go it alone.

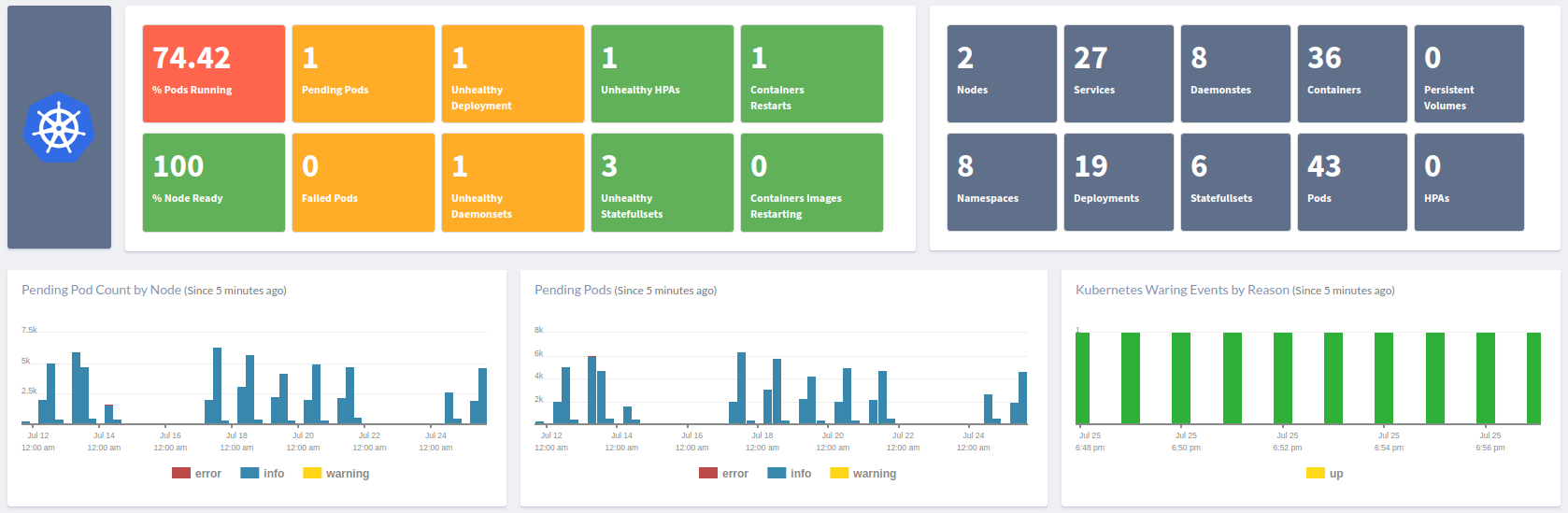

Atatus Kubernetes Monitoring

With Atatus Kubernetes Monitoring, users can gain valuable insights into the health and performance of their Kubernetes clusters and the applications running on them. The platform collects and analyzes metrics, logs, and traces from Kubernetes environments, allowing users to detect issues, troubleshoot problems, and optimize application performance.

You can easily track the performance of individual Kubernetes containers and pods. This granular level of monitoring helps to pinpoint resource-heavy containers or problematic pods affecting the overall cluster performance.
