Kubernetes Security: 9 Best Practices for Keeping It Safe

Kubernetes dominates the container orchestration market in every way. According to the latest State of Kubernetes and Container Security study, 88% of enterprises utilise Kubernetes to manage a portion of their container workloads.

Kubernetes and other orchestration systems have given software deployment and management a new level of robustness and customization. They also brought attention to the current security landscape's shortcomings.

Kubernetes is a complicated technology that needs a lot of setup and management. To keep Kubernetes workloads safe, especially in a production environment, you must address critical architectural weaknesses and platform dependencies by applying security strategies.

In this article, we will cover nine best practices for securing Kubernetes:

- Role-Based Access Control (RBAC)

- Keep Your Policies Safe

- Configuration Validation

- Enhance Pod Security

- Keep Secrets as Secret

- Secure etcd with TLS, Firewall and Encryption

- Through Containerization

- Through the Use of Service Meshes

- Activate Audit Logging

#1 Role-Based Access Control (RBAC)

A system that runs on various devices and has many interconnected microservices controlled by hundreds of users and utilities is a logistical headache.

Keep careful control over the permissions that users are granted. Kubernetes advocates the role-based access control (RBAC) mechanism for this. With RBAC, no user has more permissions than they need to do their tasks efficiently. RBAC uses the rbac.authorization.k8s.io API group to drive authorisation decisions, so you can configure policies dynamically through the Kubernetes API.

To enable RBAC, start the API server with the --authorization-mode flag set to a comma-separated list that includes RBAC. In the following example, --other-options and --more-options stand for the rest of your API server flags:

kube-apiserver --authorization-mode=Node,RBAC --other-options --more-options

Using four special-purpose objects, the Kubernetes API allows you to declare the access privileges to a cluster.

- ClusterRole and Role

These two objects use a set of rules to define access permissions. A Role defines rules within a single namespace, while a ClusterRole defines them for the whole Kubernetes cluster.

- ClusterRoleBinding and RoleBinding

These two objects grant the permissions defined in a ClusterRole or Role to users, groups, or service accounts. A RoleBinding grants them within a specific namespace, while a ClusterRoleBinding grants them across the entire cluster.
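As a rough illustration of how these objects fit together, the sketch below builds a namespaced Role that can only read pods and a RoleBinding that grants it to one user. The namespace `dev`, the role name `pod-reader`, the binding name `read-pods`, and the user `jane` are placeholders invented for this example. The manifests are plain dictionaries printed as JSON, which `kubectl apply -f -` accepts.

```python
import json

# Hypothetical names, used only for illustration.
NAMESPACE = "dev"
ROLE_NAME = "pod-reader"
USER = "jane"

role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "Role",
    "metadata": {"namespace": NAMESPACE, "name": ROLE_NAME},
    "rules": [
        {
            "apiGroups": [""],  # "" is the core API group, where pods live
            "resources": ["pods"],
            "verbs": ["get", "list", "watch"],  # read-only access
        }
    ],
}

role_binding = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "RoleBinding",
    "metadata": {"namespace": NAMESPACE, "name": "read-pods"},
    "subjects": [
        {"kind": "User", "name": USER, "apiGroup": "rbac.authorization.k8s.io"}
    ],
    "roleRef": {
        "kind": "Role",
        "name": ROLE_NAME,
        "apiGroup": "rbac.authorization.k8s.io",
    },
}

# A v1 List wraps both objects so they can be applied together.
manifest = {"apiVersion": "v1", "kind": "List", "items": [role, role_binding]}
print(json.dumps(manifest, indent=2))
```

Piping the output to `kubectl apply -f -` should create both objects, and `kubectl auth can-i list pods --as jane -n dev` is one way to check the result.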

#2 Keep Your Policies Safe

Network policy is as important to Kubernetes security as RBAC. Rather than granting access to resources, a network policy determines which workloads are allowed to communicate with each other in your cluster. By default, every pod in a Kubernetes cluster can communicate with every other pod.

Your task is to create network policies that prohibit communication between cluster components that have no business talking to one another. Even if an attacker gains access to your network, they will be confined to a single workload and unable to spread throughout the cluster. Cluster ingress and egress can also be managed via network policy.
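A minimal sketch of this idea, assuming a namespace called `prod` and pods labelled `app: frontend` and `app: api` (all hypothetical): the first policy denies all ingress traffic in the namespace, and the second re-opens only the one path that is actually needed. Note that network policies only take effect when the cluster's network plugin enforces them.

```python
import json

NAMESPACE = "prod"  # hypothetical namespace

# An empty podSelector matches every pod in the namespace. Listing the
# Ingress policy type without any ingress rules denies all inbound traffic.
default_deny_ingress = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "NetworkPolicy",
    "metadata": {"name": "default-deny-ingress", "namespace": NAMESPACE},
    "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
}

# Allow only the frontend pods to reach the api pods, on one TCP port.
allow_frontend_to_api = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "NetworkPolicy",
    "metadata": {"name": "allow-frontend-to-api", "namespace": NAMESPACE},
    "spec": {
        "podSelector": {"matchLabels": {"app": "api"}},
        "policyTypes": ["Ingress"],
        "ingress": [
            {
                "from": [{"podSelector": {"matchLabels": {"app": "frontend"}}}],
                "ports": [{"protocol": "TCP", "port": 8080}],
            }
        ],
    },
}

policies = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [default_deny_ingress, allow_frontend_to_api],
}
print(json.dumps(policies, indent=2))
```

Egress can be locked down in the same way by adding `Egress` to `policyTypes` and listing the permitted destinations.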

Consider an air traffic controller who is very choosy about who is allowed to land at — and who is not allowed to leave — his airport. Partner IP addresses should be whitelisted, and internal-only applications should only accept traffic from IP addresses inside your firewall. Similarly, whitelisting approved domains for outbound traffic is a smart idea.

Hackers may try to gain access to your cluster and then transfer data to an external URL, which is obviously bad news. A solid network policy with strict ingress and egress rules will reduce the risk of this happening. Furthermore, limiting how much traffic a client may send before being cut off, which is typically configured as rate limiting on the ingress controller rather than in a network policy, will help mitigate DDoS attacks.

#3 Configuration Validation

DevOps teams are frequently understaffed, with insufficient resources to manually inspect each change made. As a result, it's unreasonable to expect them to identify every issue — even though it'd be terrible if they didn't. This is where the use of the appropriate tools to monitor and validate your setups comes into play.

There are systems that provide improved insight, prioritised recommendations, and collaboration tools to those implementing and managing Kubernetes. Software, like many other parts of the business, may often fill in the gaps that we are unable to fill ourselves. Kubernetes is no exception, and implementing the proper validation can help ensure that your configurations are safe.
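To make the idea of automated validation concrete, here is a small sketch of a checker that flags a few risky settings in a pod manifest. The three checks and the sample manifests are assumptions chosen for illustration, and dedicated validation tools cover far more ground.

```python
from typing import Dict, List


def validate_pod(pod: Dict) -> List[str]:
    """Return human-readable findings for a pod manifest (illustrative checks only)."""
    findings = []
    for container in pod.get("spec", {}).get("containers", []):
        name = container.get("name", "<unnamed>")
        security = container.get("securityContext", {})
        if security.get("privileged", False):
            findings.append(f"{name}: runs privileged")
        # Privilege escalation is allowed unless it is explicitly disabled.
        if security.get("allowPrivilegeEscalation", True):
            findings.append(f"{name}: allowPrivilegeEscalation is not disabled")
        if "limits" not in container.get("resources", {}):
            findings.append(f"{name}: no resource limits")
    return findings


# Hypothetical manifests used to exercise the checks.
risky_pod = {
    "kind": "Pod",
    "metadata": {"name": "risky"},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "node:14.5.0",
                "securityContext": {"privileged": True},
            }
        ]
    },
}

safer_pod = {
    "kind": "Pod",
    "metadata": {"name": "safer"},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "node:14.5.0",
                "securityContext": {
                    "privileged": False,
                    "allowPrivilegeEscalation": False,
                },
                "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}},
            }
        ]
    },
}

for finding in validate_pod(risky_pod):
    print(finding)
```

A check like this could run in a CI pipeline so that a risky manifest is caught before anyone has to review it by hand.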

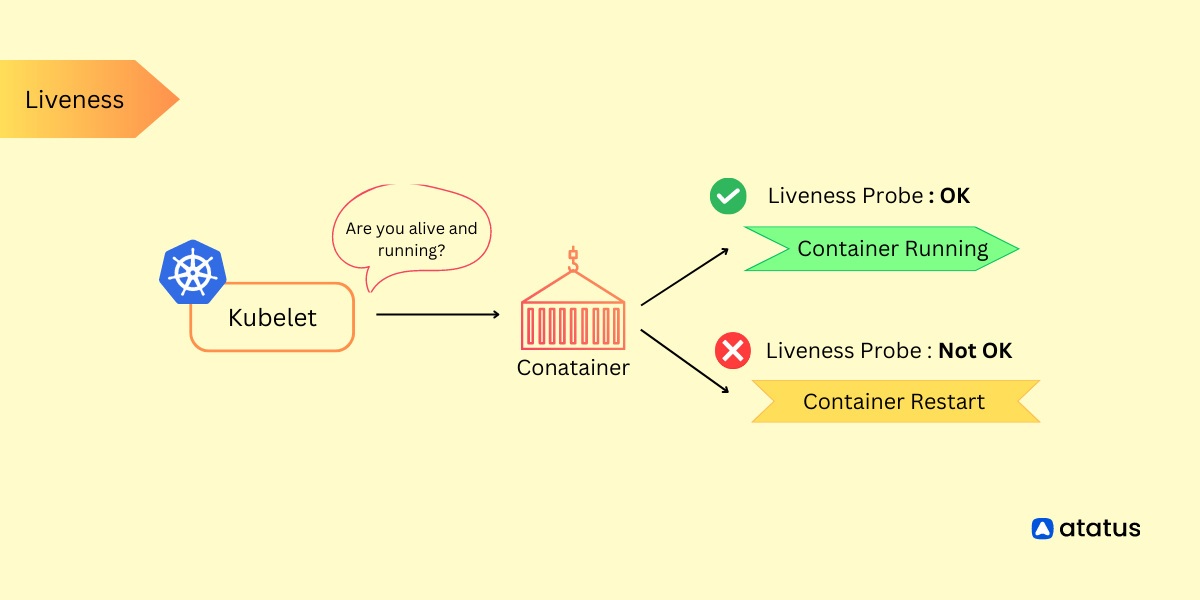

#4 Enhance Pod Security

Since containers share the same kernel, additional technologies are required to improve container isolation. Linux security modules such as AppArmor and SELinux, which enforce mandatory access control policies, confine user programs and system services. They are also used to restrict access to files and network resources.

The set of resources available to a container within the system is limited by AppArmor. Each profile can operate in one of two modes:

- Enforcing mode, which prevents access to prohibited resources

- Complain mode, which merely logs violations

The logging and auditing capabilities of your systems are considerably improved when potential errors are reported in a timely manner.

SELinux enforces mandatory access control independently of the traditional Linux access control mechanisms. It does not depend on setuid/setgid binaries and has no concept of a superuser (root).

Without SELinux, the security of a system depends on the correct configuration of the kernel and of every privileged application. A misconfiguration in any of these could put the whole system at risk. With SELinux, the security of the system depends mainly on the correctness of the kernel and its security-policy configuration.

Applications can still be attacked and compromised. With SELinux, however, the compromise of an individual user program or system daemon does not have to jeopardise the security of the entire system.
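In Kubernetes, both modules are referenced from a pod's security context. The sketch below is one possible shape, with a made-up pod name, image, and SELinux level. The `appArmorProfile` field assumes Kubernetes v1.30 or later (older clusters use an annotation instead), and the node itself must have AppArmor or SELinux enabled for the settings to have any effect.

```python
import json

# Hypothetical pod; the name, image, and SELinux level are placeholders.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "hardened-demo"},
    "spec": {
        "containers": [
            {
                "name": "app",
                "image": "registry.example.com/app:1.0.0",
                "securityContext": {
                    # Use the container runtime's default AppArmor profile.
                    "appArmorProfile": {"type": "RuntimeDefault"},
                    # Assign an SELinux level (MCS label) to the container.
                    "seLinuxOptions": {"level": "s0:c123,c456"},
                    "allowPrivilegeEscalation": False,
                    "runAsNonRoot": True,
                },
            }
        ]
    },
}

print(json.dumps(pod, indent=2))
```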

#5 Keep Secrets as Secret

In Kubernetes, a secret is a tiny object that stores sensitive data like a password or token.

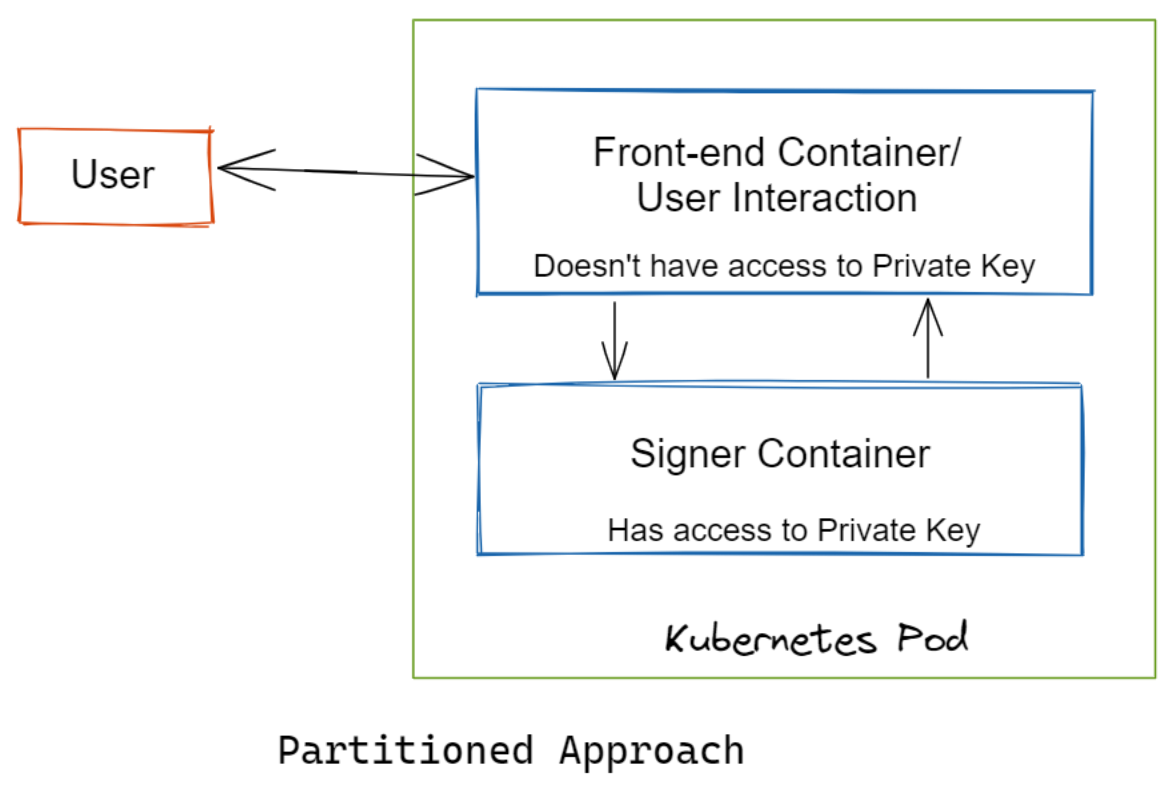

Although a pod cannot access another pod's secrets, it is critical to keep the secret separate from the image and the pod definition. Otherwise, everybody who can see the image can also see the secret. Complex apps that run several processes and are exposed to the public are particularly vulnerable.

Each container in a pod must explicitly mount the secret volume for the secret to be visible inside that container. By splitting operations across separate containers, you can reduce the risk of secrets being exposed. For example, use a user-facing front-end container that cannot see the private key.

Alongside it, run a signer container that has access to the private key and responds to simple signing requests from the front end. With this partitioned approach, an attacker must carry out a series of sophisticated steps to breach your security protections.
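The front-end and signer split can be sketched as a single pod in which only the signer mounts the secret volume. The pod name, image names, secret name `signing-key`, and mount path are hypothetical, and the helper simply reports which containers can see a secret-backed volume.

```python
import json
from typing import Dict, List

# Hypothetical pod: only the signer container mounts the private key.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "signing-demo"},
    "spec": {
        "volumes": [
            {"name": "signing-key", "secret": {"secretName": "signing-key"}}
        ],
        "containers": [
            {
                "name": "frontend",
                "image": "registry.example.com/frontend:1.0.0",
                # No volumeMounts: the private key is not visible here.
            },
            {
                "name": "signer",
                "image": "registry.example.com/signer:1.0.0",
                "volumeMounts": [
                    {
                        "name": "signing-key",
                        "mountPath": "/etc/signing-key",
                        "readOnly": True,
                    }
                ],
            },
        ],
    },
}


def containers_with_secrets(pod: Dict) -> List[str]:
    """Return the names of containers that mount a secret-backed volume."""
    spec = pod.get("spec", {})
    secret_volumes = {v["name"] for v in spec.get("volumes", []) if "secret" in v}
    return [
        c["name"]
        for c in spec.get("containers", [])
        if any(m["name"] in secret_volumes for m in c.get("volumeMounts", []))
    ]


print(containers_with_secrets(pod))
print(json.dumps(pod, indent=2))
```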

#6 Secure etcd with TLS, Firewall and Encryption

Since etcd stores the cluster's state and secrets, it is a valuable resource and a tempting target for hackers. Unauthorized users who gain access to etcd can take control of the entire cluster. Even read-only access is harmful, because malicious individuals can use it to escalate privileges.

Use the following configuration options to set up TLS for client-to-server communication with etcd:

- --cert-file=

The certificate used for SSL/TLS connections to etcd

- --key-file=

The key for the certificate (not encrypted)

- --client-cert-auth

Tells etcd to check incoming HTTPS requests for a client certificate signed by a trusted CA

- --trusted-ca-file=

The trusted certificate authority

- --auto-tls

Use a self-signed, auto-generated certificate for client connections

Use the following configuration options to set up TLS for server-to-server (peer) communication in etcd:

- --peer-cert-file=

The certificate used for SSL/TLS connections between peers

- --peer-key-file=

The key for the certificate (not encrypted)

- --peer-client-cert-auth

When this option is enabled, etcd checks all incoming peer requests for valid signed client certificates

- --peer-trusted-ca-file=

The trusted certificate authority

- --peer-auto-tls

Use self-signed, auto-generated certificates for peer-to-peer connections
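To show how the client and peer options combine on one command line, the sketch below assembles them from a certificate directory. The directory and file names (`server.crt`, `peer.key`, and so on) are assumptions about how the certificates are laid out. The two auto-TLS options are left out on purpose, since this sketch assumes CA-signed certificates rather than self-signed ones.

```python
from typing import List


def etcd_tls_flags(cert_dir: str) -> List[str]:
    """Build etcd TLS flags, assuming a hypothetical file layout in cert_dir."""
    return [
        # Client-to-server traffic
        f"--cert-file={cert_dir}/server.crt",
        f"--key-file={cert_dir}/server.key",
        "--client-cert-auth",
        f"--trusted-ca-file={cert_dir}/ca.crt",
        # Server-to-server (peer) traffic
        f"--peer-cert-file={cert_dir}/peer.crt",
        f"--peer-key-file={cert_dir}/peer.key",
        "--peer-client-cert-auth",
        f"--peer-trusted-ca-file={cert_dir}/ca.crt",
    ]


flags = etcd_tls_flags("/etc/etcd/pki")  # hypothetical path
print("etcd " + " \\\n  ".join(flags))
```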

Install a firewall between the API server and the etcd cluster as well. For instance, run etcd on a separate node and configure a firewall on that node using Calico.

Enable encryption at rest for etcd secrets:

Encryption at rest is essential for the security of etcd, but it is not enabled by default. You can enable it by passing the --encryption-provider-config option to the kube-apiserver process. In the configuration file, you'll need to choose an encryption provider and define your secret keys.
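As one possible shape for that configuration, the sketch below generates a random 32-byte key and places it in an `EncryptionConfiguration` that encrypts Secrets with the `aescbc` provider. The choice of provider and the key name `key1` are assumptions made for this example. The output is JSON, which is also valid YAML, but it may be worth converting it to YAML to match the usual convention for this file.

```python
import base64
import json
import secrets
from typing import Dict


def encryption_config(key_name: str = "key1") -> Dict:
    """Build an EncryptionConfiguration with a freshly generated 32-byte key."""
    key = base64.b64encode(secrets.token_bytes(32)).decode("ascii")
    return {
        "apiVersion": "apiserver.config.k8s.io/v1",
        "kind": "EncryptionConfiguration",
        "resources": [
            {
                "resources": ["secrets"],
                "providers": [
                    # The first provider is used to encrypt new writes.
                    {"aescbc": {"keys": [{"name": key_name, "secret": key}]}},
                    # identity lets the API server still read unencrypted data.
                    {"identity": {}},
                ],
            }
        ],
    }


# The output contains the key itself, so store the file with tight permissions.
print(json.dumps(encryption_config(), indent=2))
```

Secrets written before encryption was enabled stay unencrypted in etcd until they are rewritten.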

#7 Through Containerization

Containerization is the foundation of Kubernetes. Docker, for example, packages your application into a layered image that runs as a container, which behaves like a regular server but doesn't require any complicated setup or administration.

Trouble with Docker

Kubernetes security can be jeopardised if containerization isn't done correctly. Let's take Docker as an example. Docker images are made up of layers, much like a layered pastry. The innermost layer provides the most basic language support, while subsequent layers, or images, add capability.

Because each base image is hosted on Docker Hub under the control of its repository owner, there is nothing to prevent an inner layer from changing without warning. In the worst-case scenario, a hacker may modify a Docker image with the aim of causing a Kubernetes security breach.

Docker Image Fixes

Changing the way Docker images are tagged can solve the problem of shifting layers. By default, an image is referenced by the latest tag, which points to the most recent update on Docker Hub.

You can replace latest with a version-specific identifier such as node:14.5.0. This prevents the inner layers from changing underneath you and helps keep your application secure.

You can reduce the risk of image tampering in a couple of ways. First, you can mirror official images to your own private repository. Second, you can use vulnerability scanning tools to check Docker images for security vulnerabilities. Docker has its own vulnerability scanner, but it is only available to Pro and Team plan customers.
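A simple way to enforce version pinning is to reject image references that rely on the latest tag. The helper below is a minimal sketch rather than a full image-reference parser: it treats an image as pinned when it carries an explicit tag other than latest or a sha256 digest. The registry host in the sample list is made up.

```python
def is_pinned(image: str) -> bool:
    """Return True if the reference has a digest or an explicit non-latest tag."""
    if "@sha256:" in image:
        return True  # a digest identifies exactly one image
    # Only inspect the part after the last "/" so that a registry port
    # (for example host:5000/node) is not mistaken for a tag.
    last_segment = image.rsplit("/", 1)[-1]
    if ":" not in last_segment:
        return False  # no tag at all means the implicit latest
    tag = last_segment.rsplit(":", 1)[1]
    return tag != "latest"


# Sample references; the registry host is hypothetical.
images = [
    "node:14.5.0",
    "node",
    "node:latest",
    "registry.example.com:5000/node",
]

for image in images:
    status = "pinned" if is_pinned(image) else "NOT pinned"
    print(f"{image}: {status}")
```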

#8 Through the Use of Service Meshes

Most websites in the early 2000s employed three-tier architectures, which were vulnerable to attacks. The introduction of containerized solutions like Kubernetes has improved security but has also demanded creative ways of scaling applications securely. Fortunately, we have service meshes to assist us.

A service mesh separates security concerns from the application itself. Instead, through the use of a sidecar proxy, security is delegated to the infrastructure layer. Encrypting traffic within a cluster is one of the capabilities of a service mesh. This reduces the danger of data breaches by preventing hackers from intercepting traffic.

Service meshes in Kubernetes are often integrated through the Service Mesh Interface (SMI). This is a standard interface that covers security and other features for the most common use cases.

Observability can also be aided via service meshes. In this scenario, observability entails monitoring how traffic flows between services.

#9 Activate Audit Logging

Make sure audit logging is turned on and that you're keeping an eye on any strange or unauthorised API calls, particularly authorisation failures. These log entries have the status message "Forbidden". A failure to authorise could mean that an attacker is attempting to use stolen credentials.
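As a sketch of that kind of monitoring, the function below scans audit events stored as one JSON object per line and returns the requests that were rejected with HTTP 403 (Forbidden). The sample events and user names are invented for the example, and the field names follow the Kubernetes audit event format.

```python
import json
from typing import Dict, Iterable, List


def forbidden_events(lines: Iterable[str]) -> List[Dict]:
    """Return a summary of every audit event that was answered with HTTP 403."""
    hits = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        # Events from early stages may not carry a responseStatus at all.
        if event.get("responseStatus", {}).get("code") == 403:
            hits.append(
                {
                    "user": event.get("user", {}).get("username"),
                    "verb": event.get("verb"),
                    "uri": event.get("requestURI"),
                }
            )
    return hits


# Invented sample events, serialised the way a JSON-lines audit log would be.
SAMPLE_LOG = [
    json.dumps({
        "stage": "ResponseComplete",
        "verb": "list",
        "requestURI": "/api/v1/namespaces/dev/pods",
        "user": {"username": "jane"},
        "responseStatus": {"code": 200},
    }),
    json.dumps({
        "stage": "ResponseComplete",
        "verb": "get",
        "requestURI": "/api/v1/namespaces/kube-system/secrets/admin-token",
        "user": {"username": "ci-bot"},
        "responseStatus": {"code": 403, "reason": "Forbidden"},
    }),
]

for hit in forbidden_events(SAMPLE_LOG):
    print(hit)
```

In practice the same loop could read from the audit log file, and a sudden rise in hits for one user would be a reasonable trigger for an alert.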

To enable audit logging and specify which events should be logged, pass the --audit-policy-file flag to kube-apiserver. Four logging levels are available: None, Metadata, Request (which logs metadata and request bodies but not responses), and RequestResponse (which logs metadata, requests, and responses).
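A policy file that uses these levels might look like the sketch below. The selection of resources is an assumption made for illustration: Secrets and ConfigMaps are logged at Metadata level so that their contents never reach the log, pods are logged in full, and everything else falls through to Metadata. Rules are evaluated in order and the first match wins. The log backend also needs --audit-log-path to be set before any events are written to a file.

```python
import json

VALID_LEVELS = {"None", "Metadata", "Request", "RequestResponse"}

# Hypothetical policy; rules are matched top to bottom.
audit_policy = {
    "apiVersion": "audit.k8s.io/v1",
    "kind": "Policy",
    "rules": [
        {
            # Avoid logging request and response bodies for sensitive objects.
            "level": "Metadata",
            "resources": [{"group": "", "resources": ["secrets", "configmaps"]}],
        },
        {
            # Log full request and response bodies for pod operations.
            "level": "RequestResponse",
            "resources": [{"group": "", "resources": ["pods"]}],
        },
        # Catch-all: record metadata for every other request.
        {"level": "Metadata"},
    ],
}

for rule in audit_policy["rules"]:
    if rule["level"] not in VALID_LEVELS:
        raise ValueError(f"unknown audit level: {rule['level']}")

print(json.dumps(audit_policy, indent=2))
```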

Managed Kubernetes providers can give their customers access to this information through their dashboard and set up alerts for permission failures.

Conclusion

Kubernetes may operate as a lighthouse for developers who are drowning in the complexities of server management, guiding them safely over the high seas of CI/CD.

Kubernetes and containerization are quickly gaining popularity as a means of deploying and scaling applications. However, as the popularity of DevOps grows, so do the security threats, especially if teams aren't consistently following best practices.

Teams need to become more comfortable with security best practices. IT managers who haven't already done so should work with their teams to ensure that the best practices in this piece and others become standard operating procedures.
