Optimizing Workloads in Kubernetes: Understanding Requests and Limits

Kubernetes has emerged as a cornerstone of modern infrastructure orchestration in the ever-evolving landscape of containerized applications and dynamic workloads.

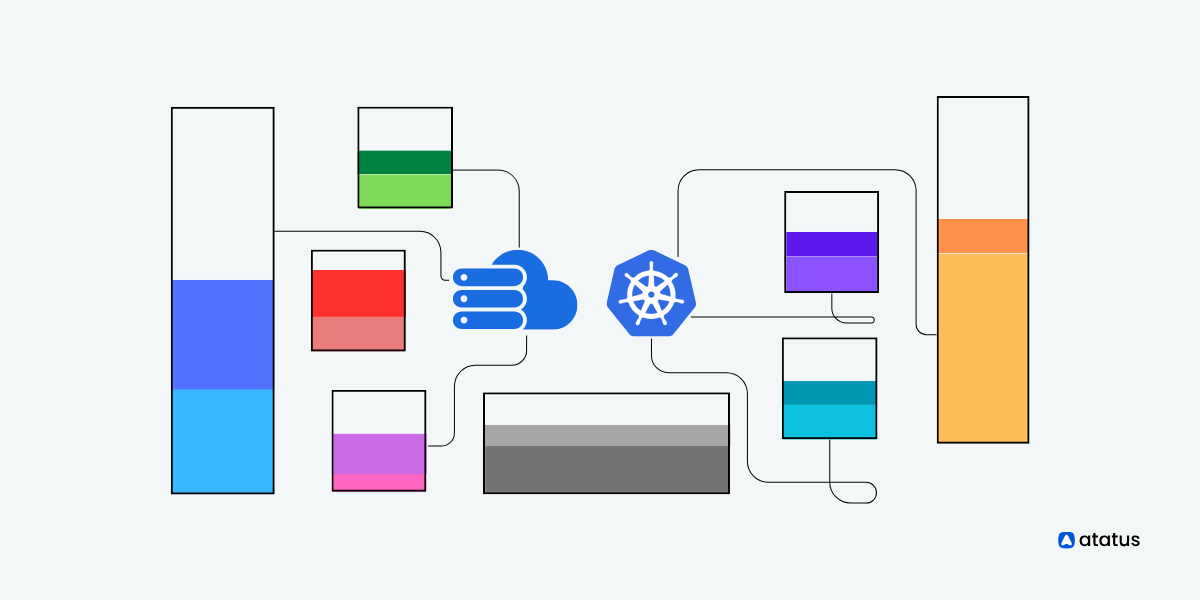

One of the critical challenges Kubernetes addresses is efficient resource management – ensuring that applications receive the right amount of compute resources while preventing resource contention that can degrade performance and stability.

This is where the concepts of "resource requests" and "resource limits" come into play, serving as guiding principles for orchestrating workloads within a Kubernetes cluster.

In this article, we will explore Kubernetes resource requests and limits – two essential mechanisms that dictate how containers acquire and consume CPU and memory resources.

These mechanisms provide the framework for orchestrating the precise allocation of resources, enabling your applications to run smoothly and optimally, even in the face of dynamic fluctuations in demand.

As we delve into the intricacies of resource requests and limits, we'll uncover their underlying functions, explore their impact on cluster performance, and highlight best practices for harnessing their potential to optimize application efficiency.

Table of Contents

- Types of Resources - CPU and Memory

- What Are Resource Requests?

- What Are Resource Limits?

- Key Functions of Requests and Limits in Kubernetes

- More on Resource Quota and Limit Ranges

- Best Practices for Setting Requests and Limits

Types of Resources - CPU and Memory

When it comes to resource management in Kubernetes, the two primary resource types you allocate and manage are CPU and memory. Here's a breakdown of each:

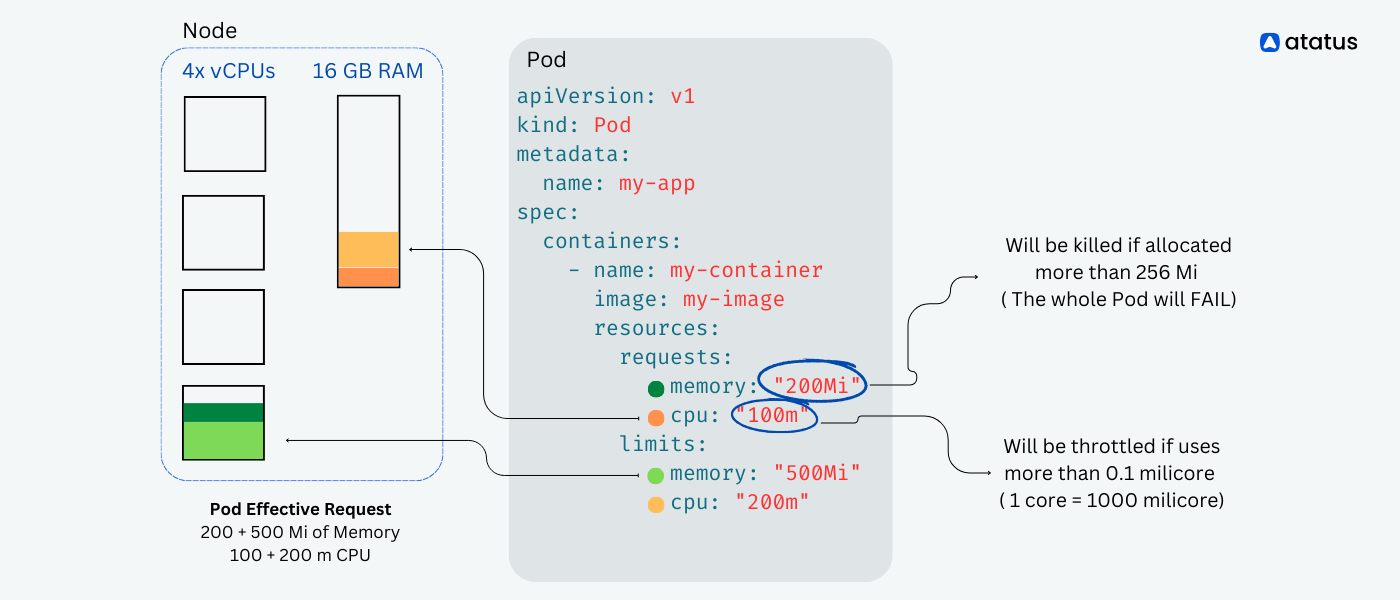

In Kubernetes, CPU resources are measured in "millicores" or "mCPU," which is a unit that represents a thousandth of a CPU core. For example, "100m" represents 100 milliCPU, which is equivalent to 0.1 CPU core.

CPU resources are important for tasks that involve computational processing, such as running application code, handling requests, and executing various tasks within containers.

When it comes to CPU requests, keep in mind that if you request more CPU than the allocatable capacity of your largest node, your pod will not be scheduled. Suppose your Kubernetes cluster is made up of dual-core VMs, but you have a pod that requests four cores. Your pod will never run!

For most apps, it is recommended to keep CPU requests at '1' or below, and run more replicas to scale it out unless they are specifically designed for multiple cores (such as scientific computing and some databases). This gives the system more flexibility and reliability.
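As a rough sketch of that advice, the Deployment below runs four replicas that each request half a core, rather than one pod that requests two cores. The name `web-app`, the image `example.com/web-app:1.0`, and the specific values are placeholders chosen for illustration, not taken from a real workload.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-app                # hypothetical name
spec:
  replicas: 4                  # scale out with more replicas instead of bigger pods
  selector:
    matchLabels:
      app: web-app
  template:
    metadata:
      labels:
        app: web-app
    spec:
      containers:
        - name: web-app
          image: example.com/web-app:1.0   # placeholder image
          resources:
            requests:
              cpu: "500m"      # 0.5 core per replica; 4 x 500m = 2 cores in total
```

Each replica fits comfortably on a dual-core node, so the scheduler has more placement options than it would for a single two-core pod.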

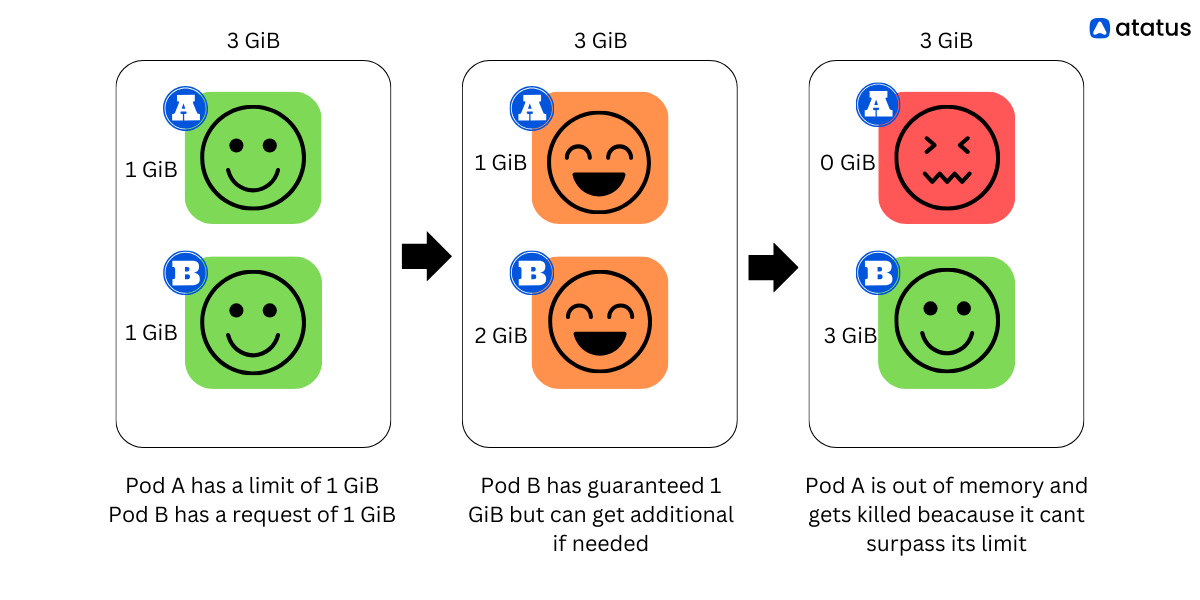

Memory (RAM, or random access memory) is the volatile storage that applications use to hold data that is actively being used or processed. In Kubernetes, memory is measured in bytes, usually written with binary suffixes such as Ki, Mi, and Gi (for KiB, MiB, and GiB).

Memory resources cannot be compressed, unlike CPU resources. As it is not possible to throttle memory usage, containers that go over their memory limits will be terminated. The kubelet then restarts the terminated container according to the pod's restart policy. If the whole pod is evicted instead, a controller such as a Deployment, StatefulSet, or DaemonSet will create a replacement pod.
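When a container is killed for exceeding its memory limit, this usually shows up in the pod's status. The excerpt below is an illustrative sketch of what `kubectl get pod <pod-name> -o yaml` might report. The container name and restart count are made up, and the exact set of fields can vary by Kubernetes version.

```yaml
status:
  containerStatuses:
    - name: python-app-container   # illustrative container name
      restartCount: 3              # illustrative value
      lastState:
        terminated:
          reason: OOMKilled        # killed for exceeding its memory limit
          exitCode: 137            # 128 + 9 (SIGKILL)
```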

Resource requests and limits are used for both CPU and memory resources in Kubernetes to ensure that containers get the necessary resources for proper operation and to prevent resource contention.

What Are Resource Requests?

In Kubernetes, resource requests and limits are parameters used to manage and control the allocation of compute resources (such as CPU and memory) for containers running within pods. These parameters help ensure efficient resource utilization, prevent resource contention, and maintain stable performance of applications in a cluster.

A resource request is the amount of a specific resource (such as CPU or memory) that a container declares as its minimum requirement to run properly.

When you set a resource request, the Kubernetes scheduler uses this information to find a suitable node for placing the pod. A node qualifies only if its allocatable resources, minus what is already requested by the pods running on it, match or exceed the requested resources.

- Requests help ensure that pods are placed on nodes with adequate resources to meet their baseline operational needs.

- If a pod's resource requests cannot be met by any available nodes, the pod will remain in a pending state until sufficient resources become available.
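To see what this looks like in practice, the excerpt below sketches the status of a pod that no node can accommodate. The message assumes a three-node cluster and is illustrative only; its wording differs between Kubernetes versions.

```yaml
status:
  phase: Pending
  conditions:
    - type: PodScheduled
      status: "False"
      reason: Unschedulable
      # Illustrative message; exact wording varies by version and cluster
      message: "0/3 nodes are available: 3 Insufficient cpu."
```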

Let's assume you have a Python application that requires a certain amount of CPU and memory to run efficiently. Here's how you can specify resource requests for this application:

    apiVersion: v1
    kind: Pod
    metadata:
      name: my-python-app
    spec:
      containers:
        - name: python-app-container
          image: python-app-image
          resources:
            requests:
              memory: "256Mi" # Request 256 MiB of memory
              cpu: "200m" # Request 200 milliCPU (0.2 CPU)

In this example:

- memory: "256Mi" specifies that the container should request 256 mebibytes (MiB) of memory.

- cpu: "200m" specifies that the container should request 200 milliCPU (which is equivalent to 0.2 CPU).

These requests indicate to the Kubernetes scheduler that this container needs at least 256 MiB of memory and 200 milliCPU to run effectively. The scheduler uses this information to find a suitable node to place the pod.

You can adjust these values based on your application's requirements and performance characteristics. Additionally, if you're using Kubernetes manifests for deploying, you can apply this YAML definition using the kubectl apply -f <filename> command, where <filename> is the name of the YAML file containing the Pod definition.

What Are Resource Limits?

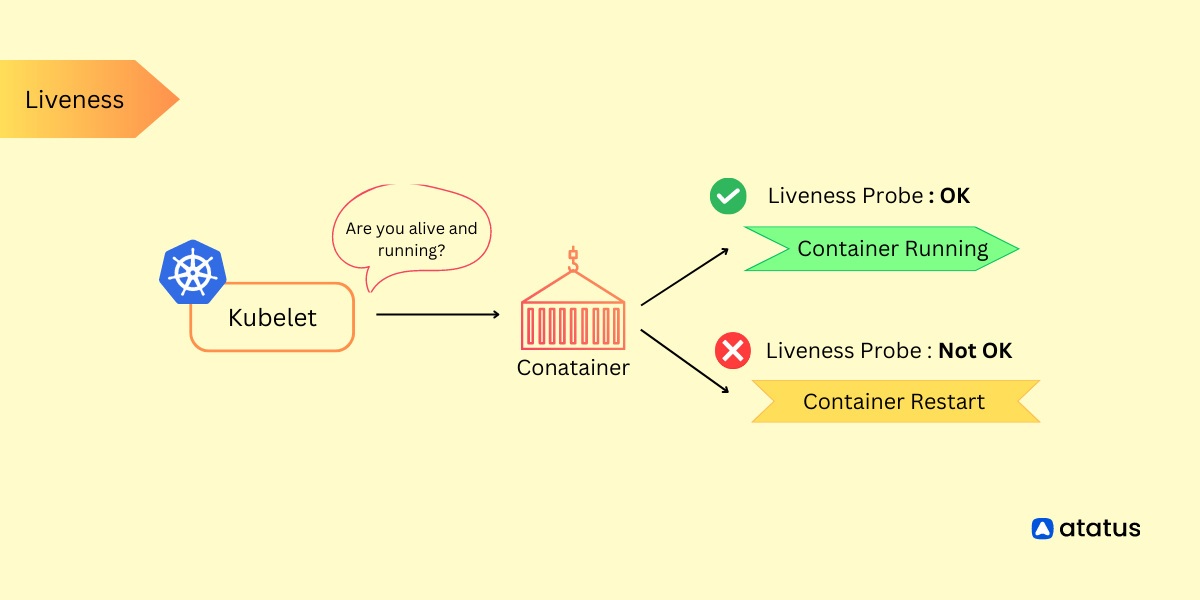

A resource limit defines the maximum amount of a specific resource that a container is allowed to consume. It acts as a safeguard against resource-hungry containers consuming all available resources on a node.

- Setting resource limits prevents a single container from negatively impacting the overall performance and stability of the cluster by using up more than its fair share of resources.

- If a container exceeds its specified resource limit, Kubernetes steps in to control its usage. A container that tries to use more CPU than its limit is throttled, while a container that exceeds its memory limit is terminated (OOM-killed) and then restarted according to the pod's restart policy.

Let's continue with the example of a Python application and specify resource limits for it:

    apiVersion: v1
    kind: Pod
    metadata:
      name: my-python-app
    spec:
      containers:
        - name: python-app-container
          image: python-app-image
          resources:
            limits:
              memory: "512Mi" # Limit memory usage to 512 MiB
              cpu: "500m" # Limit CPU usage to 500 milliCPU (0.5 CPU)

In this example:

- memory: "512Mi" specifies that the container's memory usage should not exceed 512 mebibytes (MiB).

- cpu: "500m" specifies that the container's CPU usage should not exceed 500 milliCPU (which is equivalent to 0.5 CPU).

These limits tell Kubernetes that the container should not consume more than 512 MiB of memory or 500 milliCPU (0.5 CPU). If the container tries to use more CPU than its limit, it is throttled; if it exceeds its memory limit, it is terminated and restarted according to the pod's restart policy.

Setting resource limits is crucial for preventing containers from consuming excessive resources and affecting the stability and performance of the cluster. Like with resource requests, you can adjust these values based on your application's requirements and performance characteristics.

By specifying both resource requests and limits, you tell Kubernetes how much of each resource your container needs and the maximum it may use. This helps the scheduler make informed placement decisions and ensures that your application's resource usage is controlled within acceptable bounds.
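Combining the two earlier examples, a single manifest that sets both requests and limits might look like the sketch below. It reuses the names and values from the Python application examples above; the inline comments summarize how each field is generally used.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-python-app
spec:
  containers:
    - name: python-app-container
      image: python-app-image
      resources:
        requests:
          memory: "256Mi"   # used by the scheduler for placement
          cpu: "200m"
        limits:
          memory: "512Mi"   # exceeding this gets the container OOM-killed
          cpu: "500m"       # exceeding this gets the container throttled
```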

Key Functions of Requests and Limits in Kubernetes

Now let's look at the functions of resource requests and limits in Kubernetes:

Functions Of Resource Requests

- Resource requests define the minimum amount of resources (CPU and memory) that a container needs to operate effectively. The Kubernetes scheduler uses this information to decide which node to place the pod on.

- The scheduler only considers nodes whose unreserved capacity can satisfy a pod's resource requests, ensuring that pods get placed on nodes that can meet their resource requirements. Larger requests do not give a pod higher scheduling priority; they only narrow the set of nodes it fits on.

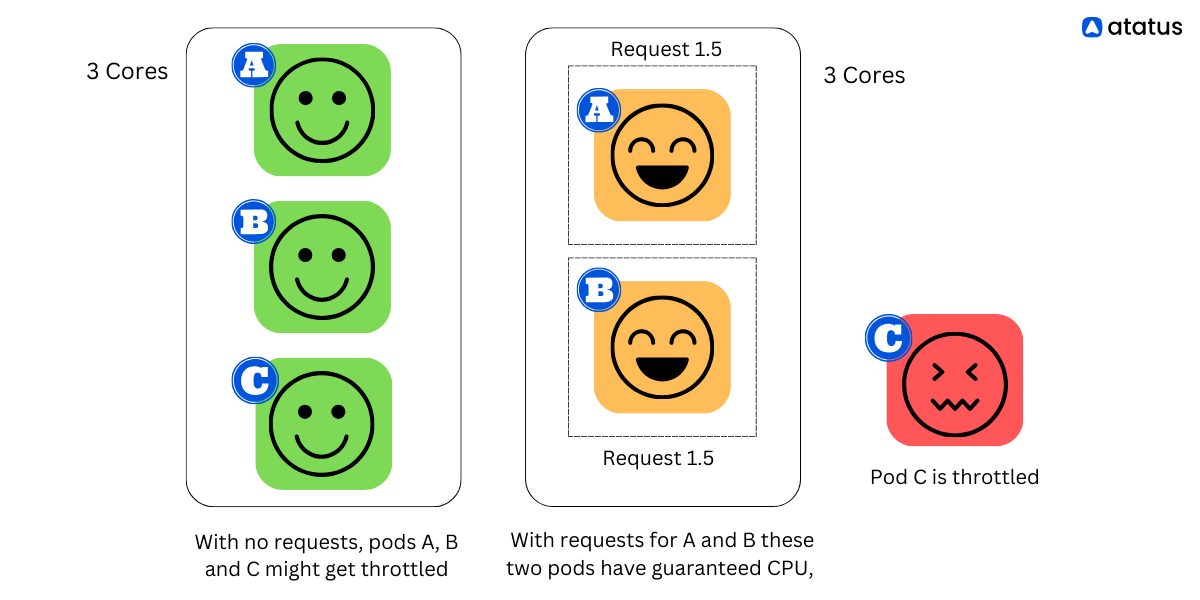

- By setting resource requests, you provide a predictable baseline for your application's resource needs. This prevents resource contention and ensures that your application receives the resources it needs to run consistently.

- Resource requests help avoid resource starvation by ensuring that pods are only placed on nodes where sufficient resources are available to meet their demands.

Functions Of Resource Limits

- Resource limits define the maximum amount of resources (CPU and memory) that a container is allowed to use. They prevent containers from using more resources than they are allocated.

- Limits prevent a single container from monopolizing resources and negatively impacting the performance and stability of other containers on the same node.

- By enforcing limits on other containers, you help ensure that the resources your application requested remain available to it, even in scenarios where other containers or pods are using significant resources.

- Without limits, a single container with irregular resource consumption patterns (a "noisy neighbor") could affect the performance of other containers on the same node. Limits prevent such scenarios.

Activities of Requests and Limits:

- Scheduling Decisions: The Kubernetes scheduler uses resource requests to make informed decisions about where to place pods. It selects nodes that can meet the resource requirements of the pod.

- Resource Allocation: Once a pod is scheduled to a node, the resources that the pod's containers requested are reserved on that node. This ensures that the pod has the necessary resources available to it.

- Resource Isolation: Resource limits enforce boundaries on container resource usage. If a container exceeds its limits, Kubernetes can take actions to prevent resource contention and potential crashes.

- Throttling and Eviction: When a container tries to exceed its CPU limit, Kubernetes throttles it, which slows the container down without stopping it. A container that exceeds its memory limit is terminated instead, and when a node itself runs low on resources, Kubernetes might evict pods to keep the node stable.

- Fair Sharing: By setting limits, Kubernetes enforces fair sharing of resources among pods on a node. Each pod gets its allocated share, preventing one pod from using up all available resources.

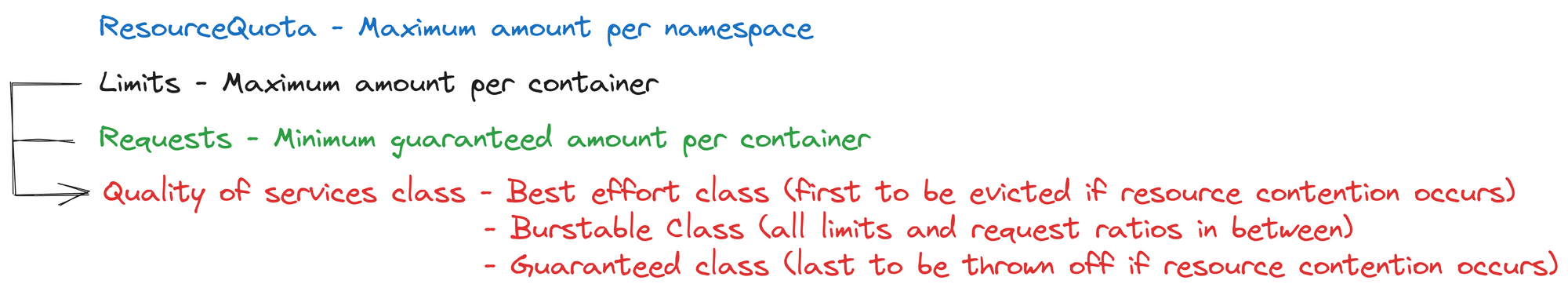

- Quality of Service (QoS) Classes: Kubernetes uses resource requests and limits to classify pods into Quality of Service classes: Guaranteed, Burstable, and BestEffort. This classification affects how pods are treated during resource contention scenarios.

- Horizontal Pod Autoscaling (HPA): The Horizontal Pod Autoscaler adjusts the number of pod replicas based on observed metrics such as CPU utilization. Because CPU utilization is calculated as a percentage of the requested CPU, accurate resource requests are essential for HPA to make scaling decisions effectively.

- Resource Quotas: Resource requests are used in conjunction with resource quotas to ensure that namespaces do not exceed their allocated resource limits. This helps manage resource consumption at a namespace level.
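As an illustration of the QoS classes mentioned in the list above, a pod lands in the Guaranteed class when every container sets CPU and memory limits equal to its requests. The sketch below reuses the names from the earlier Python application examples; the values are arbitrary.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: my-python-app
spec:
  containers:
    - name: python-app-container
      image: python-app-image
      resources:
        requests:
          memory: "512Mi"
          cpu: "500m"
        limits:
          memory: "512Mi"   # equal to the memory request
          cpu: "500m"       # equal to the CPU request
```

With requests lower than limits, the pod would be classed as Burstable, and with no requests or limits at all it would be BestEffort. The assigned class is reported in the pod's status.qosClass field.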

More on Resource Quota and Limit Ranges

In Kubernetes, both ResourceQuota and LimitRange are mechanisms that allow you to control and manage resource usage within namespaces. They provide different ways to set limits, quotas, and default values for resource consumption in a Kubernetes cluster.

Resource Quota

A ResourceQuota is a Kubernetes object that defines limits on resource consumption within a namespace. It helps ensure that resource consumption by objects (such as pods, services, and configmaps) in the namespace remains within the defined limits. ResourceQuotas are used to prevent excessive resource usage that could potentially affect the stability and performance of the cluster.

Key features of ResourceQuota:

- Resource Limits: You can specify limits for CPU, memory, and the number of objects (such as pods, services) in a namespace.

- Hard Limits: ResourceQuota enforces hard limits on the combined usage of all objects in the namespace; a request that would push the total over a limit is rejected.

- Usage Monitoring: It tracks resource usage within the namespace and prevents the creation of new objects or requests that exceed the quota limits.

- Namespaces: ResourceQuotas are applied at the namespace level, allowing different namespaces to have different limits.

Example ResourceQuota YAML:

    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: my-resource-quota
    spec:
      hard:
        pods: "10"
        requests.cpu: "1"
        requests.memory: 1Gi
        limits.cpu: "2"
        limits.memory: 2Gi

Limit Range

A LimitRange is a Kubernetes object that sets default values and ranges for resource requests and limits on containers within a namespace. It's useful for ensuring consistent resource settings across pods within a namespace and for preventing containers from specifying unreasonable resource requests and limits.

Key features of LimitRange:

- Default Values: LimitRange allows you to set default values for resource requests and limits for containers that do not specify them explicitly.

- Min and Max Limits: You can define minimum and maximum values to prevent containers from requesting too few or too many resources.

- Prevent Resource Exhaustion: LimitRange helps prevent containers from making unreasonable demands that could lead to resource exhaustion.

- Namespace Level: Like ResourceQuota, LimitRange is applied at the namespace level.

Example LimitRange YAML:

    apiVersion: v1
    kind: LimitRange
    metadata:
      name: my-limit-range
    spec:
      limits:
        - type: Container
          max:
            memory: 1Gi
          min:
            cpu: 100m

ResourceQuota and LimitRange are both important tools for managing and controlling resource usage within Kubernetes namespaces. ResourceQuota sets hard limits on resource consumption, while LimitRange provides defaults and ranges for resource requests and limits. These mechanisms help maintain stability, fairness, and predictability in multi-tenant Kubernetes clusters.
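The LimitRange example above only sets a maximum and a minimum. As a hedged sketch of the default values feature described earlier, a LimitRange could also fill in requests and limits for containers that omit them. The object name and all values here are assumptions chosen for illustration.

```yaml
apiVersion: v1
kind: LimitRange
metadata:
  name: my-default-limits   # hypothetical name
spec:
  limits:
    - type: Container
      defaultRequest:       # applied when a container sets no request
        cpu: 100m
        memory: 128Mi
      default:              # applied when a container sets no limit
        cpu: 500m
        memory: 256Mi
```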

Best Practices for Setting Requests and Limits

Remember that efficient resource management is a continuous process. Regularly review and adjust your resource requests and limits as your application's workload changes to maintain optimum application and system performance.

i.) Requests should be set realistically: Set requests close to your application's average CPU usage, ensuring it gets enough resources for normal operation.

ii.) Limits should be set to prevent resource hogging: Limits prevent containers from using excessive resources and potentially affecting other pods on the same node.

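iii.) Requests <= Limits: Ensure that requests are less than or equal to limits. Kubernetes rejects a container whose request is higher than its limit, and the gap between the two determines how far the container can burst above its reserved share.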

iv.) Include resource requests and limits in your deployment or pod configuration. Here's an example:

    resources:
      requests:
        cpu: "100m" # 100 milliCPU (0.1 CPU)
      limits:
        cpu: "200m" # 200 milliCPU (0.2 CPU)

v.) Continuously monitor your application's CPU usage and cluster performance. Adjust resource requests and limits as needed to maintain efficiency.

vi.) Utilize tools like Prometheus and Grafana for monitoring and alerting on CPU utilization. These tools can help you gather insights into your application's resource consumption patterns.

vii.) For applications with sporadic or bursty CPU usage, consider setting the CPU request below the CPU limit (e.g., a request of cpu: "100m" with a higher limit). This allows your application to occasionally exceed its request for short bursts when the node has spare capacity.

viii.) If available, enable cluster autoscaling to dynamically adjust the number of nodes in your cluster based on resource demands. This complements pod-level autoscaling.
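For the pod-level autoscaling mentioned above, a HorizontalPodAutoscaler might be declared roughly as follows. This is a minimal sketch that assumes the application runs as a Deployment named my-python-app (the earlier examples use a bare Pod); the replica bounds and the 70% target are arbitrary.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-python-app-hpa        # hypothetical name
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-python-app          # assumed Deployment, not defined in this article
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70 # percent of the pods' requested CPU
```

Because the target is expressed as a percentage of the CPU request, the containers in the target Deployment need a CPU request for this metric to work.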

Conclusion

Resource requests in Kubernetes provide essential information to the cluster scheduler, helping it make informed decisions about pod placement, resource allocation, and scaling. Accurate and meaningful resource requests contribute to the efficient utilization of cluster resources, stable application performance, and optimal use of the infrastructure.

In summary, resource requests and limits provide a way to communicate your application's resource requirements and constraints to Kubernetes. They enable efficient resource allocation, ensure stability, and protect the overall health of your cluster by preventing resource contention and overconsumption.

Whether you're a Kubernetes novice or a professional, this article should help you unlock the true potential of your containerized workloads using resource requests and limits!

Monitor Kubernetes Cluster with Atatus

Ensuring the optimal performance and health of your Kubernetes cluster is essential for smooth operations. With Atatus, you can simplify and enhance your Kubernetes cluster monitoring experience.

Atatus offers a comprehensive monitoring solution designed to empower you with real-time insights into your cluster's performance, resource utilization, and potential issues.

- Real-time insights for proactive issue resolution.

- Customize dashboards for focused metrics.

- Set alerts for critical thresholds.

- Optimize resource usage and scaling.

- Analyze historical data for informed decisions.

You can also easily track the performance of individual Kubernetes containers and pods. This granular level of monitoring helps to pinpoint resource-heavy containers or problematic pods affecting the overall cluster performance.
