5 Performance Measurement Metrics for Node.js Applications

Node.js applications are built on the Node.js platform, an event-driven, server-side JavaScript runtime with non-blocking I/O that is based on Google Chrome's V8 engine.

Because both the server side and the client side can be written in JavaScript, Node.js allows for easier and faster development, and it can process many requests quickly and concurrently. This is especially useful for developing real-time applications, such as chat and streaming.

Large-scale enterprise Node.js applications need not only scalability and flexibility but also the assurance that services will be available and function as intended.

Customers may be lost if companies fail to meet service-level agreement (SLA) terms owing to downtime or poor performance of production applications. Because development costs are a major consideration, it's critical to track the performance of critical applications and identify errors as soon as possible.

This article goes over the top five Node.js performance metrics you should keep an eye on regularly:

#1 User-facing Latencies

Any API's purpose is to pass data from one interface to another, such as a mobile app. As a result, it's vital to ensure that the API works as intended from the user's perspective. During user interactions, you want to avoid any unwanted latencies or delays. The purpose of evaluating user-facing latencies is to check that the API is functioning properly.

You must first set a baseline before you can evaluate the application's performance. Any departure from the baseline of two standard deviations or less is considered normal. If any Node.js performance measurement exceeds this, your API is acting strangely.

After you've established a baseline, you can begin evaluating transaction performance by determining the entry point or interaction that initiates the user transaction. The entry point for a Node.js API is usually an HTTP request, but depending on the infrastructure, it could also be a WebSocket connection or a service call.

Once you've identified the entry point, you can measure performance across the app ecosystem to see whether it's running within typical parameters (baseline ± 2 standard deviations). For example, you might discover that the response time for a user transaction differs from its average response time by more than two standard deviations.

Excessive deviation shows that the application is behaving abnormally. The user-facing metric may change depending on the time of day and week that the measurement takes place.
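The baseline check described above can be sketched in a few lines. This is a minimal illustration, not a production monitor: the function names `buildBaseline` and `isAbnormal` and the sample response times are assumptions made for the example.

```javascript
// Build a baseline (mean and standard deviation) from past response times in ms.
// Uses the population standard deviation; a real tool may choose differently.
function buildBaseline(samples) {
  const mean = samples.reduce((sum, x) => sum + x, 0) / samples.length;
  const variance =
    samples.reduce((sum, x) => sum + (x - mean) ** 2, 0) / samples.length;
  return { mean, stdDev: Math.sqrt(variance) };
}

// A value is abnormal when it lies more than k standard deviations from the mean.
function isAbnormal(value, baseline, k = 2) {
  return Math.abs(value - baseline.mean) > k * baseline.stdDev;
}

// Hypothetical response times for one user transaction.
const baseline = buildBaseline([100, 110, 90, 105, 95]);
console.log(isAbnormal(112, baseline)); // within two standard deviations
console.log(isAbnormal(120, baseline)); // outside two standard deviations
```

Keeping one baseline per time-of-day and day-of-week bucket, as the text suggests, would mean calling `buildBaseline` on the samples for each bucket separately.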

The data obtained during this measurement will have an impact on future baselines for that time of day and week. The progression reflects the user experience better than any other metric since it accounts for the evolution of the user-facing application.

You must monitor all components of the user interface while assessing user-facing latencies, including:

- Content size

- Error rates

- Response times

- Request rates
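As a rough sketch of how these four signals could be aggregated, the snippet below keeps simple counters per measurement window. The names `createMetrics`, `recordRequest`, and `summarize`, and the choice to count only 5xx status codes as errors, are assumptions for illustration.

```javascript
// Counters for one measurement window.
function createMetrics() {
  return { requests: 0, errors: 0, totalMs: 0, totalBytes: 0 };
}

// Record one finished request: status code, duration in ms, response size in bytes.
function recordRequest(metrics, { statusCode, durationMs, bytes }) {
  metrics.requests += 1;
  if (statusCode >= 500) metrics.errors += 1; // assumption: 5xx counts as an error
  metrics.totalMs += durationMs;
  metrics.totalBytes += bytes;
}

// Turn the counters into the four user-facing metrics.
function summarize(metrics, windowSeconds) {
  const n = metrics.requests;
  return {
    requestRate: n / windowSeconds,                   // requests per second
    errorRate: n === 0 ? 0 : metrics.errors / n,      // fraction of failed requests
    avgResponseMs: n === 0 ? 0 : metrics.totalMs / n,
    avgContentBytes: n === 0 ? 0 : metrics.totalBytes / n,
  };
}
```

In an HTTP server, `recordRequest` could be called from the response's `finish` event handler, once the status code and elapsed time are known.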

#2 External Dependencies

External dependencies can take many forms, including dependent web services, legacy systems, and databases. They are systems with which your application interacts.

We may not have control over the code that runs inside external dependencies, but we do typically have control over their configuration. So it's crucial to know when they're working well and when they're not. We also need to be able to distinguish between issues with our application and issues with dependencies.

External or backend services are used by all applications, and these interactions can have a significant impact on the overall performance of your application. Legacy systems, caching layers, databases, queue servers, and web services are examples of external dependencies.

Although developers have no control over the code of these backend services, they can manage their configurations. As a result, it's vital to evaluate the performance of these backend services to see if they need to be reconfigured. You'll also need to figure out whether the application's slowness is due to internal issues or external dependencies.

An APM tool should be able to distinguish automatically between the application and external services. In some circumstances, you may need to configure the monitoring tool so that it can discover external dependency behaviour.

You'll need a baseline to measure the performance of these external dependencies, just like you do with user-facing latencies.

Despite connecting asynchronously, latency in backend services can impair performance and the user experience in a Node.js application. The following are some examples of exit calls:

- External web-service APIs

- Internal web-services

- NoSQL servers

- SQL databases

You can detect trouble spots and improve performance by measuring the response times of your application's calls to external dependencies.
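One way to collect such response times by hand is to wrap each exit call in a timing helper. The sketch below assumes a hypothetical `timeExternalCall` helper and an in-memory `timings` map; an APM agent would normally do this instrumentation for you.

```javascript
const { performance } = require('perf_hooks');

// Dependency name -> list of observed durations in milliseconds.
const timings = new Map();

// Run an async exit call and record how long it took, whether it succeeds or fails.
async function timeExternalCall(name, call) {
  const start = performance.now();
  try {
    return await call();
  } finally {
    const elapsedMs = performance.now() - start;
    if (!timings.has(name)) timings.set(name, []);
    timings.get(name).push(elapsedMs);
  }
}
```

A call such as `timeExternalCall('orders-db', () => runQuery())`, where `runQuery` stands in for your own database function, would leave one duration per call under `orders-db`. Comparing those durations with the total transaction time helps separate internal slowness from slow dependencies.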

#3 Event Loop

It's easier to figure out what metrics to collect about event loop behaviour if you first understand what the event loop is and how it can affect your application's performance. For illustration purposes, you can think of the event loop as an infinite loop that executes code waiting in a queue.

On each iteration of the infinite loop, the event loop executes a block of synchronous code. Because Node.js is single-threaded and non-blocking, it then picks up the next block of code, or tick, waiting in the queue and keeps executing. Although this is a non-blocking model, the following operations could be considered blocking:

- Accessing a file on disk

- Querying a database

- Requesting data from a remote web service

In JavaScript (the Node.js language), you can use callbacks to perform all of your I/O operations.

This has the benefit of allowing the execution stream to move on to other code while your I/O is running in the background. Node.js runs the code in the event queue that is ready to be executed on its single main thread, hands certain I/O work (such as file system operations) to a background thread pool or to the operating system, and then moves on to the next code in the queue. When the I/O is finished, its callback is queued and executed, which may trigger more code until the transaction is completed.
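The ordering described here can be observed with a small callback-based file read. This is only a sketch: `readWithCallback` and the temporary file name are made up for the demonstration.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Start a file read and record the order in which the steps run.
function readWithCallback(filePath, done) {
  const order = ['read requested'];
  fs.readFile(filePath, 'utf8', (err, text) => {
    // Runs later, once the I/O has completed in the background.
    order.push('callback ran');
    done(err, text, order);
  });
  // Runs immediately: the main thread does not wait for the read.
  order.push('moved on to other code');
}

// Demonstration with a throwaway file in the OS temp directory.
const demoFile = path.join(os.tmpdir(), `callback-demo-${process.pid}.txt`);
fs.writeFileSync(demoFile, 'hello');
readWithCallback(demoFile, (err, text, order) => {
  fs.unlinkSync(demoFile);
  if (err) throw err;
  console.log(order.join(' -> '));
  // prints: read requested -> moved on to other code -> callback ran
});
```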

It's worth noting that, unlike other languages like PHP or Python, the execution stream of code in Node.js is not per request. To put it another way, say you have two transactions, A and B, that an end-user has requested.

When Node.js starts executing code from transaction A, it also starts executing code from transaction B, and because Node.js is asynchronous, the code from transaction A merges with the code from transaction B in the queue.

Both transactions' code is essentially waiting in a queue to be performed by the event loop at the same time. As a result, if the event loop is blocked by code from transaction A, the execution slowness may have an influence on transaction B's performance.
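The effect of one transaction blocking another can be reproduced with a timer and a busy loop. In this sketch, the timer callback plays the role of transaction B and the synchronous loop plays transaction A; `busyWait` and `measureDelay` are hypothetical names.

```javascript
const { performance } = require('perf_hooks');

// Transaction A: synchronous work that occupies the main thread for `ms` milliseconds.
function busyWait(ms) {
  const end = performance.now() + ms;
  while (performance.now() < end) {
    // spin on purpose to block the event loop
  }
}

// Schedule transaction B to run as soon as possible, then block with transaction A.
// Resolves with how long B actually had to wait, in milliseconds.
function measureDelay(blockMs) {
  return new Promise((resolve) => {
    const scheduledAt = performance.now();
    setTimeout(() => resolve(performance.now() - scheduledAt), 0);
    busyWait(blockMs);
  });
}

measureDelay(50).then((waitedMs) => {
  console.log(`transaction B waited about ${Math.round(waitedMs)} ms`);
});
```

Although B was scheduled with a zero-millisecond timer, it cannot run until A's synchronous code returns, so its wait is at least as long as the time A spent blocking.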

The non-blocking, single-threaded nature of Node.js is the basic reason that slow code in one request can affect every other request in the queue, whereas in other languages it typically does not.

As a result, in order to ensure the event loop's health, a modern Node.js application must monitor the event loop and collect crucial information around behaviour that may harm the pace of your Node.js application.
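Node.js ships a built-in sampler for this in the `perf_hooks` module, `monitorEventLoopDelay`, which reports delays in nanoseconds. The wrapper below is a sketch: `startLoopMonitor`, the 10 ms resolution, and the choice of statistics are assumptions, and the numbers should be treated as approximate.

```javascript
const { monitorEventLoopDelay } = require('perf_hooks');

// Start sampling event loop delay; returns helpers to read and stop the monitor.
function startLoopMonitor(resolutionMs = 10) {
  const histogram = monitorEventLoopDelay({ resolution: resolutionMs });
  histogram.enable();
  return {
    // Convert the histogram's nanosecond values to milliseconds.
    snapshot() {
      return {
        meanMs: histogram.mean / 1e6,
        maxMs: histogram.max / 1e6,
        p99Ms: histogram.percentile(99) / 1e6,
      };
    },
    stop() {
      histogram.disable();
    },
  };
}
```

A monitoring loop could call `snapshot()` periodically and compare `p99Ms` or `maxMs` against a baseline, in the same way as the other metrics in this article.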

#4 Memory Leaks

V8's built-in Garbage Collector (GC) manages memory without the requirement for the developer to do so. Memory management in V8 is similar to that of other programming languages, and it is also vulnerable to memory leaks.

Memory leaks occur when application code reserves memory in the heap and fails to release it when it is no longer needed. Over time, this failure causes memory utilisation on the machine to climb steadily. If you ignore memory leaks, Node.js will eventually throw an error and shut down because the process has run out of memory.

To understand how GC works, you must first comprehend the distinction between active and dead memory regions. V8 considers any object referenced in memory as a living object.

Live objects include root objects and any object pointed to by a chain of pointers. Everything else is considered dead, and the GC cycle is tasked with cleaning it up. The V8 GC engine looks for dead memory locations and tries to free them so that the operating system can use them again.

In theory, heap memory use should drop back after each V8 garbage collection cycle. Unfortunately, some objects remain in memory after the GC cycle and are never cleared because the application still references them. These objects are regarded as a "leak", and the memory they hold will continue to grow over time.

The memory leak will eventually raise your memory utilisation, affecting the performance of your application and server. You should check your heap memory use before and after each GC cycle.
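A common way for objects to stay reachable is a module-level collection that only ever grows. The contrast below is a simplified sketch; the handler names and the limit of 100 entries are invented for the example.

```javascript
// Leaky: every payload stays referenced by this array, so the GC can never free it.
const leakyLog = [];
function handleRequestLeaky(payload) {
  leakyLog.push(payload);
  return leakyLog.length;
}

// Bounded: old payloads are dropped, become unreachable, and can be collected.
const MAX_RECENT = 100;
const recent = [];
function handleRequestBounded(payload) {
  recent.push(payload);
  if (recent.length > MAX_RECENT) recent.shift();
  return recent.length;
}

for (let i = 0; i < 1000; i++) {
  handleRequestLeaky({ id: i });
  handleRequestBounded({ id: i });
}
console.log(leakyLog.length, recent.length); // prints: 1000 100
```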

Pay special attention to the heap used after a full GC cycle. If heap consumption increases over time, it's a strong sign that there's a memory leak. As a general guideline, if heap consumption keeps growing across several GC cycles and never drops back, you should be concerned.

After you've determined that a memory leak exists, you can take heap snapshots and analyse the differences over time. You will be particularly interested in which objects and classes have been continuously growing.
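This guideline can be approximated with `process.memoryUsage()`. The sketch assumes each sample is taken shortly after a full GC cycle (how that moment is detected is left out), and the names `sampleHeapUsedMb` and `isHeapGrowing` and the minimum of four samples are arbitrary choices.

```javascript
// Current V8 heap usage in megabytes.
function sampleHeapUsedMb() {
  return process.memoryUsage().heapUsed / (1024 * 1024);
}

// True when every sample is larger than the one before it,
// i.e. the heap never dropped back across the observed GC cycles.
function isHeapGrowing(samples, minSamples = 4) {
  if (samples.length < minSamples) return false;
  for (let i = 1; i < samples.length; i++) {
    if (samples[i] <= samples[i - 1]) return false;
  }
  return true;
}

console.log(isHeapGrowing([50, 55, 61, 70])); // prints: true
console.log(isHeapGrowing([50, 55, 52, 70])); // prints: false
```

A strictly increasing series is a deliberately strict signal; a real check might instead fit a trend line to tolerate small dips.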

Because doing a heap dump might be taxing on your application, diagnosing a memory leak in a pre-production environment is recommended to ensure that your production applications are not harmed.
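For taking the snapshots themselves, Node.js provides `v8.writeHeapSnapshot()`, which blocks the process while it writes the file. The helper below is a sketch; `captureHeapSnapshot`, the directory argument, and the file naming scheme are assumptions.

```javascript
const v8 = require('v8');
const path = require('path');

// Write a heap snapshot into `dir` and return the path of the created file.
// The call is synchronous, so the event loop is blocked while it runs.
function captureHeapSnapshot(dir, label) {
  const file = path.join(dir, `${label}-${Date.now()}.heapsnapshot`);
  return v8.writeHeapSnapshot(file);
}
```

Two snapshots taken some time apart, for example with the labels `before` and `after`, can then be loaded into Chrome DevTools and compared to see which object types grew.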

#5 Application Topology

The topology of your application is the final performance component to consider. Because of the cloud, applications can now be elastic: your application environment can grow and shrink to suit the demands of your users.

As a result, it's critical to perform an inventory of your application topology to see if your environment is properly sized. Your cloud-hosting costs will rise if you have too many virtual server instances, but if you don't have enough, your business transactions will suffer.

During this evaluation, it's critical to track two metrics:

- Business Transaction Load

- Container Performance

Business transactions should be baselined, and you should know how many servers you'll need at any given time to meet that baseline.

If your business transaction load unexpectedly surges, for example to more than two standard deviations above regular load, you may need to add more servers to accommodate those users.

The performance of your containers is the other metric to track. You want to see whether any of the server tiers are under duress, and if they are, you might want to add more servers to that tier.

An individual server may be under duress owing to factors such as garbage collection, so it's crucial to look at the servers throughout a tier. If a substantial percentage of servers in a tier are under duress, it may signal that the tier cannot manage the load it is receiving.
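The surge rule can be written as a small sizing function. Everything here is illustrative: `serversForLoad`, the load baseline, and the assumed capacity of 250 transactions per second per server are invented numbers, not recommendations.

```javascript
// Flag a surge when load exceeds the baseline by more than two standard deviations,
// and estimate how many servers the current load needs.
function serversForLoad(currentLoad, baseline, capacityPerServer) {
  const surgeThreshold = baseline.mean + 2 * baseline.stdDev;
  return {
    surge: currentLoad > surgeThreshold,
    servers: Math.ceil(currentLoad / capacityPerServer),
  };
}

// Hypothetical baseline: 1000 transactions/s on average, standard deviation 100.
const loadBaseline = { mean: 1000, stdDev: 100 };
console.log(serversForLoad(1150, loadBaseline, 250)); // { surge: false, servers: 5 }
console.log(serversForLoad(1300, loadBaseline, 250)); // { surge: true, servers: 6 }
```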
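The tier-level check can be sketched as follows. The function name `isTierOverloaded`, the use of CPU utilisation as the stress signal, and both thresholds (85% CPU, half of the tier) are assumptions chosen for the example.

```javascript
// cpuByServer: CPU utilisation per server in the tier, as fractions between 0 and 1.
// The tier counts as overloaded when at least `tierFraction` of its servers are stressed.
function isTierOverloaded(cpuByServer, stressedAbove = 0.85, tierFraction = 0.5) {
  if (cpuByServer.length === 0) return false;
  const stressed = cpuByServer.filter((cpu) => cpu > stressedAbove).length;
  return stressed / cpuByServer.length >= tierFraction;
}

// One busy server (perhaps mid garbage collection) does not flag the tier.
console.log(isTierOverloaded([0.95, 0.4, 0.5, 0.45])); // prints: false
// Most servers busy suggests the tier cannot handle its load.
console.log(isTierOverloaded([0.95, 0.9, 0.88, 0.4])); // prints: true
```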

Because your application components can scale independently, it's critical to assess each one's performance and adapt your structure accordingly.

Summary

Scalability is a feature of application programming interfaces (APIs) that employ the Node.js runtime environment. The application can manage numerous connections at the same time since Node.js is asynchronous and event-driven.

Because of these qualities, Node.js does not rely on thread-based networking, which saves CPU time and makes the application more efficient overall.

This article provided a top-five list of metrics to consider while evaluating the health of your application. There are certain benefits to using Node.js as a JavaScript runtime. It does, however, require a lot of upkeep to keep it running smoothly.

Monitor Your Node.js Backend with Atatus

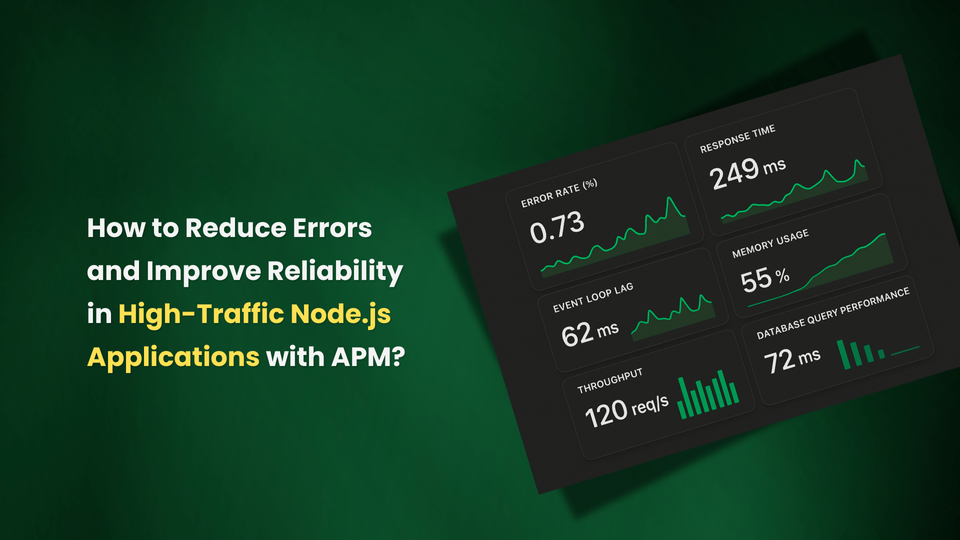

Atatus keeps track of your Node.js backend to give you a complete picture of your clients' end-user experience. You can determine the source of slow response times, database queries, external calls and other issues by identifying performance bottlenecks for each API request.

Atatus visualises end-to-end business transactions in your Node.js application automatically. With Node.js Monitoring, you can monitor the number and type of failed HTTP status codes and application crashes. You can also analyze response time for each business transaction to uncover Node.js performance issues and Node.js errors.

Atatus can benefit your business by providing a comprehensive view of your application, including how it works, where performance bottlenecks exist, which users are most impacted, and which errors break your code across your frontend, backend, and infrastructure.
